The UALink Consortium has officially released the UALink™ 200G 1.0 Specification, establishing an open industry standard for low-latency, high-bandwidth interconnects designed to scale AI accelerator performance in next-generation data center clusters. The specification enables 200G-per-lane communication among up to 1,024 accelerators within a single AI computing pod, providing a foundational building block for future high-performance AI and HPC architectures.

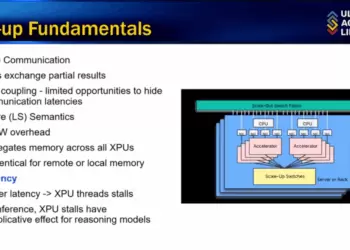

UALink™ is a memory-semantic interconnect optimized for scale-up workloads. It delivers deterministic performance with 93% effective peak bandwidth while reducing latency, power, and total cost of ownership. It supports direct accelerator-to-accelerator communication using load/store, atomic, and memory-access operations across multiple system nodes. Unlike traditional interconnects, UALink is designed to minimize complexity and maximize bandwidth utilization through a smaller die area and a simpler switch design.
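To make "memory-semantic" concrete, the sketch below models a pod in which any accelerator's memory is reached with plain load, store, and atomic operations on a global address, rather than by sending messages. This is a conceptual toy and not the UALink protocol. The address layout (a 10-bit accelerator ID, which is just enough to name 1,024 accelerators, above a 32-bit local offset) and every name in the code (`Pod`, `make_address`, `split_address`, `atomic_add`) are hypothetical and exist only for illustration.

```python
# Conceptual toy of memory-semantic access in a pod. NOT the UALink wire
# protocol: the address layout and all names here are hypothetical.
MAX_ACCELERATORS = 1024                          # pod limit stated for UALink 200G 1.0
ID_BITS = (MAX_ACCELERATORS - 1).bit_length()    # 10 bits name 1,024 accelerators
OFFSET_BITS = 32                                 # hypothetical local address width
OFFSET_MASK = (1 << OFFSET_BITS) - 1


def make_address(accel_id: int, offset: int) -> int:
    """Build a hypothetical global address: accelerator ID in the high bits."""
    return (accel_id << OFFSET_BITS) | (offset & OFFSET_MASK)


def split_address(global_addr: int) -> tuple[int, int]:
    """Split a hypothetical global address into (accelerator_id, local_offset)."""
    return global_addr >> OFFSET_BITS, global_addr & OFFSET_MASK


class Pod:
    """Each accelerator owns a sparse, word-addressed memory (a dict)."""

    def __init__(self, num_accelerators: int):
        if not 1 <= num_accelerators <= MAX_ACCELERATORS:
            raise ValueError("pod size must be between 1 and 1,024")
        self.memories = [dict() for _ in range(num_accelerators)]

    def _locate(self, global_addr: int) -> tuple[dict, int]:
        accel_id, offset = split_address(global_addr)
        if accel_id >= len(self.memories):
            raise ValueError(f"no accelerator {accel_id} in this pod")
        return self.memories[accel_id], offset

    def load(self, global_addr: int) -> int:
        mem, off = self._locate(global_addr)
        return mem.get(off, 0)              # unwritten words read as 0

    def store(self, global_addr: int, value: int) -> None:
        mem, off = self._locate(global_addr)
        mem[off] = value

    def atomic_add(self, global_addr: int, delta: int) -> int:
        """Fetch-and-add that returns the old value. The toy is single-threaded,
        so atomicity is trivial here; real hardware performs it at the target."""
        mem, off = self._locate(global_addr)
        old = mem.get(off, 0)
        mem[off] = old + delta
        return old


pod = Pod(num_accelerators=8)
addr = make_address(accel_id=5, offset=0x100)   # a word in accelerator 5's memory
pod.store(addr, 42)
print(pod.load(addr))            # 42
print(pod.atomic_add(addr, 1))   # 42 (value before the add)
print(pod.load(addr))            # 43
```

The point of the design is that remote memory looks like memory to the issuing accelerator: the caller never builds a packet or posts a receive. The interconnect is responsible for turning each operation into a transaction at the target.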

Formed in October 2024, the UALink Consortium has more than 85 member companies, and its board includes Alibaba, AMD, Apple, Astera Labs, AWS, Cisco, Google, HPE, Intel, Meta, Microsoft, and Synopsys. The consortium's mission is to build an open ecosystem that accelerates AI infrastructure innovation through standardized, interoperable technologies. With the ratification of the 1.0 specification, vendors can now build compatible accelerators, switches, and pods optimized for emerging AI scaling demands.

Technical Specifications of UALink 200G 1.0:

• 200G per lane interconnect supporting up to 1,024 accelerators per AI pod

• Memory-semantic load/store protocol with support for read, write, and atomic operations

• Achieves 93% effective peak bandwidth

• Offers latency comparable to PCIe switches, with raw speed matching Ethernet

Design Benefits:

• Low-power architecture through efficient switch design and minimal die area

• Lower total cost of ownership via reduced complexity and improved bandwidth utilization

• Supports multi-node systems with deterministic performance scaling

Ecosystem and Industry Support:

• Over 85 member companies contributing to the UALink Consortium

• Board members include Alibaba, AMD, Apple, Astera Labs, AWS, Cisco, Google, HPE, Intel, Meta, Microsoft, and Synopsys

• Publicly available specification encourages open innovation and multi-vendor interoperability
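For a rough sense of what "200G per lane" and "93% effective peak bandwidth" mean together, here is a back-of-the-envelope calculation. It assumes 200G means a nominal 200 Gb/s per lane and applies the 93% figure directly to that rate. The spec's own accounting for encoding, FEC, and protocol overhead is more detailed than this. The port widths of 1, 2, and 4 lanes and the helper names `effective_gbps` and `transfer_time_ms` are hypothetical and used only for illustration.

```python
# Back-of-the-envelope bandwidth arithmetic. Assumptions: "200G" is a nominal
# 200 Gb/s per lane, and the 93% efficiency figure applies to that rate as-is.
RAW_GBPS_PER_LANE = 200.0   # nominal per-lane rate (assumed Gb/s)
EFFICIENCY = 0.93           # effective share of peak bandwidth


def effective_gbps(lanes: int) -> float:
    """Usable bandwidth in Gb/s for a port built from `lanes` lanes."""
    return lanes * RAW_GBPS_PER_LANE * EFFICIENCY


def transfer_time_ms(num_bytes: int, lanes: int) -> float:
    """Idealized time to move num_bytes over one port, ignoring latency."""
    bits = num_bytes * 8
    return bits / (effective_gbps(lanes) * 1e9) * 1e3


for lanes in (1, 2, 4):     # hypothetical port widths
    print(f"x{lanes}: {effective_gbps(lanes):.1f} Gb/s effective")
# x1: 186.0 Gb/s effective
# x2: 372.0 Gb/s effective
# x4: 744.0 Gb/s effective

print(f"1 GB over x4: {transfer_time_ms(10**9, 4):.2f} ms")   # 10.75 ms
```

The calculation is deliberately linear. Real transfers also pay per-request latency and may be limited by switch contention, so these figures are upper bounds under the stated assumptions.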

“UALink is the only memory semantic solution for scale-up AI optimized for lower power, latency and cost while increasing effective bandwidth,” said Kurtis Bowman, Board Chair of the UALink Consortium.