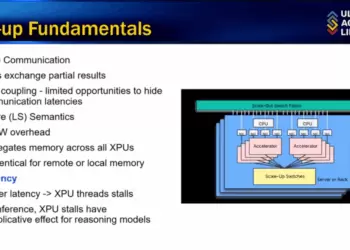

At the IEEE Hot Interconnects online conference, Alan Benjamin, CEO of GigaIO, delivered an online presentation highlighting the company’s SuperNODE architecture as a more efficient alternative to conventional scale-out GPU clusters for AI inference workloads. Built on the company’s FabreX PCIe memory fabric, SuperNODE connects dozens of accelerators into a single node server, eliminating the inter-server overhead and latency challenges of Ethernet- and InfiniBand-based clusters.

The SuperNODE is accelerator- and form-factor-agnostic, supporting GPUs from NVIDIA and AMD, FPGAs, ASICs, and custom inference processors such as d-Matrix and Tenstorrent, across OAM, SXM, and PCIe board formats. With end-to-end latencies of 330 nanoseconds, the system enables higher utilization and lower total cost of ownership. GigaIO also highlighted Gryf, a carry-on-sized portable AI supercomputer, bringing datacenter-class compute to the edge.

Benchmark results presented at the conference showed that SuperNODE achieved 83x faster time-to-first-token, 48% higher token throughput, and 51% more requests per second compared with RoCE Ethernet-based scale-out clusters of the same 32 GPUs, processors, memory, and storage. Token-per-watt improved by 80%, and token-per-dollar improved by 50%.

- Connects dozens of heterogeneous accelerators into a single server

- PCIe-based FabreX fabric with NVLink and Infinity Fabric integration

- 330ns latency, lower than Ethernet and InfiniBand alternatives

- Supports OAM, SXM, PCIe form factors

- Benchmarks: 83x faster token response, 48% more tokens/sec, 51% more requests/sec

- Tokenomics: +80% tokens/watt, +50% tokens/dollar

- Companion product “Gryf” provides portable AI inference in a carry-on form factor

“Our SuperNODE architecture is designed to deliver true scale-up performance for AI inference, with the lowest latency and highest efficiency in the industry,” said Alan Benjamin, CEO of GigaIO.

🌐 Analysis:

Founded in 2012 and based in Carlsbad, California, GigaIO has built its reputation on the FabreX PCIe-based memory fabric, a disaggregated infrastructure solution that allows pooling and dynamic allocation of accelerators, storage, and networking. The company has raised funding from investors including SK Hynix and has targeted HPC, AI, and composable infrastructure markets where accelerator heterogeneity is increasingly critical.

On the technology side, PCIe remains central to GigaIO’s strategy. PCIe Gen4 is widely deployed, with Gen5 beginning to ship in new servers, doubling bandwidth to 64 GT/s per lane. Gen6, expected in 2026, will double that again to 128 GT/s, incorporating PAM4 signaling to sustain performance growth. Each PCIe generation enables larger and more tightly coupled fabrics, a trend that supports GigaIO’s vision of rack-scale single-server architectures. As competitors like NVIDIA push proprietary interconnects (NVLink, NVSwitch) and AMD advances Infinity Fabric, GigaIO is betting on PCIe’s ubiquity and open ecosystem to gain traction among customers seeking flexibility and cost efficiency.

🌐 We’re tracking the latest developments in AI infrastructure and accelerator interconnects. Follow our ongoing coverage at: https://convergedigest.com/category/ai-infrastructure/