At the IEEE Hot Interconnects conference, Sharada Yeluri of Astera Labs outlined why UALink (Ultra Accelerator Link) is emerging as the open protocol standard for rack-scale AI infrastructure. Designed specifically for connecting XPUs within a rack, UALink promises ultra-low latency, deterministic performance, and bandwidth efficiency far beyond what Ethernet can deliver in this domain.

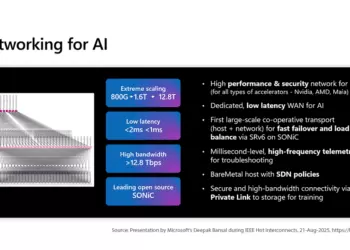

Yeluri emphasized that AI workloads need 12x more bandwidth inside the rack than across scale-out networks, because collective communication between XPUs dominates both training and inference. Under load/store semantics, memory across all accelerators must appear unified, which makes low jitter and nanosecond-level latency essential. UALink achieves this with a single-stage, non-blocking fabric that provides lossless flow control, memory consistency, and greater than 95% bandwidth efficiency.

The UALink protocol stack is structured in four layers:

- UPLI (Protocol Layer) – Defines read/write and atomic operations with security built in.

- Transaction Layer – Breaks commands down into 64B flits and applies address compression.

- Data Link Layer – Packs multiple flits efficiently, supports retries and flow control.

- Physical Layer – Built on Ethernet PHY/SerDes, leveraging IEEE P802.3dj for 200 GT/s per-lane signaling.
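
To make the middle of the stack concrete, here is a minimal Python sketch of fixed-size segmentation and packing: a payload is cut into 64B transaction-layer flits, which are then grouped into fixed 640B containers (the flit size cited in the latency comparison). It is an illustration only. The function names are hypothetical, and the sketch ignores headers, CRC, address compression, retries, and flow control, all of which the real protocol defines. The figure of ten slots per container is simply 640/64 and is an assumption, not a value taken from the specification.

```python
TL_FLIT_BYTES = 64    # transaction-layer flit size, as stated in the article
DL_FLIT_BYTES = 640   # fixed flit size cited in the article's latency comparison

# Assumption: headers and CRC are ignored, so a container holds 640 // 64 slots.
SLOTS_PER_DL_FLIT = DL_FLIT_BYTES // TL_FLIT_BYTES


def split_into_tl_flits(payload: bytes) -> list[bytes]:
    """Cut a payload into 64-byte flits, zero-padding the last one."""
    flits = []
    for offset in range(0, len(payload), TL_FLIT_BYTES):
        chunk = payload[offset:offset + TL_FLIT_BYTES]
        flits.append(chunk.ljust(TL_FLIT_BYTES, b"\x00"))
    return flits


def pack_into_dl_flits(tl_flits: list[bytes]) -> list[bytes]:
    """Group 64-byte flits into fixed 640-byte containers, padding the tail."""
    containers = []
    for start in range(0, len(tl_flits), SLOTS_PER_DL_FLIT):
        group = b"".join(tl_flits[start:start + SLOTS_PER_DL_FLIT])
        containers.append(group.ljust(DL_FLIT_BYTES, b"\x00"))
    return containers


if __name__ == "__main__":
    payload = bytes(range(256)) * 3        # 768-byte example write payload
    tl_flits = split_into_tl_flits(payload)
    dl_flits = pack_into_dl_flits(tl_flits)
    print(f"{len(payload)} B -> {len(tl_flits)} TL flits -> {len(dl_flits)} DL flits")
```

Because every container has the same size, a receiver never has to parse a length field to find the next boundary, which is one reason fixed flits help keep switching latency predictable.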

Compared to Ethernet, UALink demonstrates clear advantages for rack-scale AI:

- Efficiency: >95% link utilization versus Ethernet’s variable packet overhead.

- Latency: Fixed 640B data link flits (each packing multiple 64B transaction-layer flits) and simplified switching reduce jitter and response time.

- Power: Switch power is expected to be 20–30% lower.

- Ordering: Native strict and relaxed ordering enables flexibility not easily achieved with Ethernet.

- Reliability: Built-in request/response isolation, hop-by-hop flow control, and FEC.
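
The efficiency point can be illustrated with simple arithmetic. The Python sketch below compares the share of wire bytes that carry payload in a standard Ethernet frame, where a fixed 38B of layer-2 overhead (preamble/SFD, MAC header, FCS, minimum inter-packet gap) weighs heavily on small messages, against a fixed 640B flit. The per-flit overhead used here is an illustrative assumption rather than a specification value, and the flit figure assumes every flit is fully packed. Higher-layer Ethernet headers (IP, UDP, transport) are left out and would lower the Ethernet numbers further.

```python
# Standard Ethernet per-frame overhead at layer 2 (no VLAN tag):
# 8 B preamble/SFD + 14 B MAC header + 4 B FCS + 12 B minimum inter-packet gap.
ETHERNET_OVERHEAD_BYTES = 8 + 14 + 4 + 12
ETHERNET_MIN_PAYLOAD_BYTES = 46  # shorter payloads are padded to this size

FLIT_BYTES = 640
# Assumption: illustrative per-flit overhead (header + CRC), not a spec value.
ASSUMED_FLIT_OVERHEAD_BYTES = 12


def ethernet_efficiency(payload_bytes: int) -> float:
    """Fraction of wire bytes that are useful payload in one Ethernet frame."""
    on_wire_payload = max(payload_bytes, ETHERNET_MIN_PAYLOAD_BYTES)
    return payload_bytes / (on_wire_payload + ETHERNET_OVERHEAD_BYTES)


def fixed_flit_efficiency(overhead_bytes: int = ASSUMED_FLIT_OVERHEAD_BYTES) -> float:
    """Fraction of a fully packed fixed-size flit that is payload."""
    return (FLIT_BYTES - overhead_bytes) / FLIT_BYTES


if __name__ == "__main__":
    for size in (64, 256, 1500):
        print(f"Ethernet, {size:>4} B payload: {ethernet_efficiency(size):.1%}")
    print(f"Fixed 640 B flit (assumed overhead): {fixed_flit_efficiency():.1%}")
```

The Ethernet figure swings with message size, while the fixed-flit figure stays constant regardless of the traffic mix. That is the contrast the "variable packet overhead" comparison is drawing.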

Yeluri positioned UALink as essential for a multi-vendor accelerator ecosystem, calling on developers to join the consortium and help shape the forthcoming UALink 2.0 standard. “There is a need for an open accelerator interface protocol with security built in natively,” she said. “UALink is the one.”

🌐 Analysis: UALink’s push at Hot Interconnects (HOTI) underscores a critical shift in AI infrastructure design. While Ethernet and InfiniBand dominate scale-out networking, rack-scale AI training places unique demands on latency, determinism, and efficiency. UALink is carving out this space with a purpose-built interconnect that avoids Ethernet’s protocol overhead while remaining compatible with it at the PHY level. The initiative, backed by Astera Labs, AMD, Broadcom, Google, Intel, Meta, and Microsoft, positions UALink as the open alternative to proprietary GPU interconnects such as NVIDIA’s NVLink or AMD’s Infinity Fabric. If the consortium delivers on its roadmap, UALink could become the de facto standard for rack-scale AI fabrics.
