Marvell announced that its Structera Compute Express Link (CXL) memory-expansion controllers and near-memory accelerators have completed interoperability testing with DDR4 and DDR5 memory from Micron, Samsung, and SK hynix. This follows earlier validation with AMD EPYC and 5th Gen Intel Xeon CPUs, which the company says makes Structera the first CXL 2.0 product line proven across both major processor architectures and all three leading DRAM suppliers.

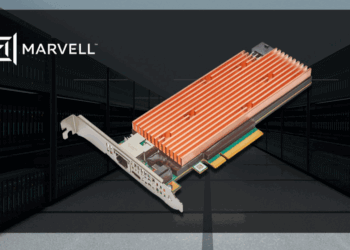

The Structera lineup includes two device families. Structera A integrates 16 Arm Neoverse V2 cores with multiple memory channels to support high-bandwidth applications such as deep learning recommendation models. Structera X enables servers to expand memory capacity by terabytes for in-memory databases and other large-scale workloads. Both device types feature four memory channels and inline LZ4 compression, and both are built on 5nm process technology. Marvell also offers Structera as licensable IP for integration into custom silicon, giving hyperscalers the option to embed CXL capabilities directly into their designs.

By validating Structera across CPUs and DRAM vendors, Marvell seeks to reduce integration risks for OEMs and cloud providers while giving customers more flexibility in system design and supply chain planning. The company says the product line supports both standard and customized deployment models.

- Structera validated with DDR4/DDR5 from Micron, Samsung, and SK hynix

- Proven interoperability with AMD EPYC and 5th Gen Intel Xeon CPUs

- Structera A: near-memory accelerator with 16 Arm Neoverse V2 cores for AI/ML

- Structera X: memory expansion controllers enabling terabyte-scale capacity

- Available as discrete silicon or licensable IP for custom SoCs

“As AI and high-performance computing workloads intensify, CXL will help dissolve bottlenecks for demanding workloads that can consume upwards of hundreds of terabytes of memory capacity,” said Praveen Vaidyanathan, vice president and general manager of Cloud Memory Products at Micron.

🌐 Analysis: Compute Express Link (CXL) is an open industry standard designed to create a unified, high-speed interconnect between CPUs, memory, and accelerators. CXL 2.0, the version most widely deployed today, adds features such as memory pooling and switching, enabling data centers to share and scale memory more flexibly. The CXL Consortium, backed by Intel, AMD, Arm, and major cloud providers, continues to advance the specification toward 3.0 and 3.1, which bring enhanced switching and fabric capabilities. Industry players such as Astera Labs, Montage Technology, and Rambus are also delivering controllers and switches, while hyperscalers are testing CXL to break through memory bottlenecks in AI and in-memory workloads. Marvell’s ability to demonstrate interoperability across CPUs and DRAM suppliers positions Structera as a frontrunner in an ecosystem that is just beginning to move into large-scale deployment.
