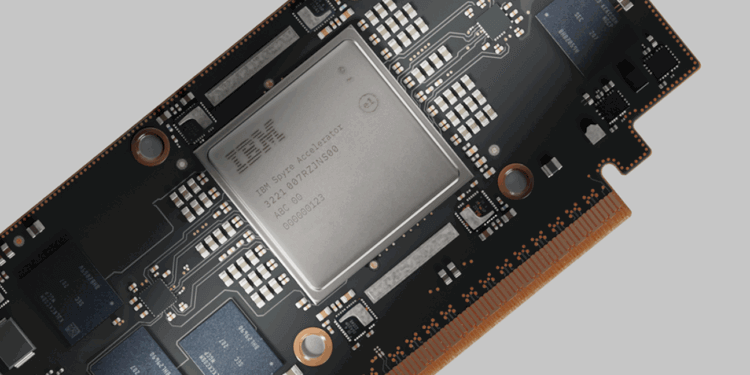

IBM is bringing its in-house AI accelerator to market with the commercial launch of the Spyre Accelerator, designed to deliver low-latency inference for generative and agentic AI workloads. The chip will debut on October 28 for IBM z17 and LinuxONE 5 systems, followed by Power11 servers in December. Built to operate alongside the company’s Telum II processor, Spyre enables enterprises to run AI models directly on mainframes and servers without compromising on security or throughput.

Developed through the IBM Research AI Hardware Center, Spyre integrates 32 accelerator cores and 25.6 billion transistors on a 5nm system-on-chip, packaged in a 75-watt PCIe card. Up to 48 cards can be clustered in IBM Z or LinuxONE systems and 16 cards in Power servers to scale inferencing workloads. IBM said Spyre maintains on-prem data control while improving efficiency and performance for real-time AI applications such as fraud detection, transaction analytics, and retail automation.

On Power systems, Spyre integrates with IBM’s AI services catalog, enabling one-click deployment of enterprise AI workflows. Paired with the Power11 processor’s on-chip Matrix Math Accelerator (MMA), the accelerator also boosts generative AI throughput and can ingest up to 8 million documents per hour for knowledge-base integration. The product reflects IBM’s strategy to pair its semiconductor innovation pipeline with enterprise infrastructure that supports AI at scale across hybrid cloud environments.

• Commercial availability: Oct. 28 for z17/LinuxONE 5; Dec. for Power11

• Architecture: 32 AI cores, 25.6B transistors, 5nm SoC, 75W PCIe form factor

• Scalability: Up to 48 cards (IBM Z/LinuxONE) or 16 cards (Power)

• Integration: Works alongside the Telum II processor (z17/LinuxONE 5) and Power11’s on-chip Matrix Math Accelerator (MMA)

• Primary workloads: Generative and agentic AI, fraud detection, retail automation

• Origin: Developed by IBM Research AI Hardware Center, Yorktown Heights

“With the Spyre Accelerator, we’re extending the capabilities of our systems to support multi-model AI — including generative and agentic AI,” said Barry Baker, COO of IBM Infrastructure. “This innovation positions clients to scale their AI-enabled mission-critical workloads with uncompromising security, resilience, and efficiency.”

🌐 Analysis: Spyre marks a major milestone in IBM’s push to build AI acceleration into its own enterprise systems rather than relying on external GPUs. The approach mirrors the broader industry trend toward heterogeneous compute, as seen in NVIDIA’s Grace Hopper and AMD’s MI300 platforms, but emphasizes secure, on-prem inference for regulated industries. By bringing Spyre to z17, LinuxONE 5, and Power11, IBM strengthens its hybrid cloud portfolio and positions itself as a distinctive AI infrastructure provider bridging mainframe workloads and AI compute.

🌐 We’re tracking the latest developments in semiconductors. Follow our ongoing coverage at: https://convergedigest.com/category/semiconductors/