Microsoft used its Ignite keynote in San Francisco to highlight a next-generation AI infrastructure platform anchored by the new Fairwater AI data center campus in Atlanta. The facility connects to Microsoft’s first Fairwater site in Wisconsin and earlier GPU superclusters to form what the company described as the first planet-scale AI superfactory. The design departs from traditional cloud data centers by using a single flat network able to integrate hundreds of thousands of NVIDIA GB200 and GB300 GPUs as a coherent supercomputer while supporting a wider range of training, tuning and synthetic data workloads.

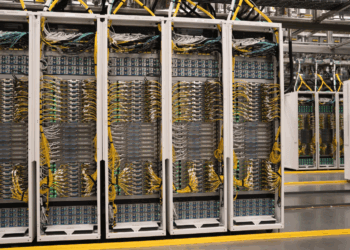

The Fairwater design prioritizes extreme density and low latency, driven by the physical limits of light-speed signal propagation in large clusters. Microsoft introduced a two-story facility layout that shortens cable distances in three dimensions, combined with liquid-cooled racks running at approximately 140 kW per rack and 1.36 MW per row. Each rack houses up to 72 NVIDIA Blackwell GPUs interconnected via NVLink, providing 1.8 TB/s of GPU-to-GPU bandwidth and more than 14 TB of pooled memory accessible to each GPU. Scale-out networking uses a two-tier Ethernet fabric based on SONiC and commodity switching, enabling cluster scaling beyond traditional Clos limits with 800 Gbps GPU-to-GPU connectivity.
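The density and latency figures above lend themselves to a quick back-of-the-envelope check. The Python sketch below uses only the numbers quoted in the article, plus one assumption that is not from Microsoft: that light in optical fiber travels at roughly two-thirds of its vacuum speed. The function names are illustrative.

```python
# Figures quoted in the article.
RACK_POWER_KW = 140.0   # approximate power per rack
ROW_POWER_KW = 1360.0   # 1.36 MW per row
GPUS_PER_RACK = 72      # maximum Blackwell GPUs per rack

# Assumption (not from the article): signals in optical fiber
# propagate at about 0.67 times the vacuum speed of light.
SPEED_OF_LIGHT_M_PER_S = 299_792_458
FIBER_VELOCITY_FACTOR = 0.67


def racks_per_row() -> float:
    """Row power budget divided by per-rack power."""
    return ROW_POWER_KW / RACK_POWER_KW


def rack_power_per_gpu_kw() -> float:
    """Rack power divided by GPU count.

    This is an upper bound on per-GPU draw, since the rack budget
    also covers everything else housed in the rack.
    """
    return RACK_POWER_KW / GPUS_PER_RACK


def one_way_delay_ns(cable_m: float) -> float:
    """One-way propagation delay over a fiber run of the given length."""
    speed_m_per_s = SPEED_OF_LIGHT_M_PER_S * FIBER_VELOCITY_FACTOR
    return cable_m / speed_m_per_s * 1e9


if __name__ == "__main__":
    print(f"racks per row:      {racks_per_row():.1f}")
    print(f"kW per GPU slot:    {rack_power_per_gpu_kw():.2f}")
    print(f"delay over 100 m:   {one_way_delay_ns(100):.0f} ns")
```

Under these assumptions a row holds just under ten racks, each GPU slot accounts for a little under 2 kW of rack power, and every 100 m of cable adds roughly half a microsecond of one-way delay, which is why shortening cable runs in three dimensions matters at this scale.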

The company has also built a dedicated AI WAN backbone to connect Fairwater sites and existing Azure AI supercomputers. Microsoft deployed more than 120,000 new fiber miles across the U.S. last year to extend this optical system, allowing traffic segmentation between scale-up, scale-out and inter-site paths. Power infrastructure has been re-engineered as well: the Atlanta campus targets 4×9 (99.99%) availability at 3×9 (99.9%) cost by relying on highly available grid power instead of dual-corded or generator-heavy designs. Microsoft and its partners also developed software-based workload shaping, GPU-level power-threshold enforcement and on-site energy storage to smooth power oscillations from multi-thousand-GPU training jobs.
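To put the availability target in concrete terms, the sketch below converts a "nines" figure into expected downtime per year. It reads "4×9" and "3×9" as 99.99% and 99.9% availability, which is the usual meaning of the shorthand, and it assumes a 365-day year; the function names are illustrative.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600, ignoring leap years


def availability_from_nines(nines: int) -> float:
    """Convert a count of nines to a fraction, e.g. 4 -> 0.9999."""
    return 1 - 10 ** (-nines)


def downtime_minutes_per_year(nines: int) -> float:
    """Expected unavailable minutes per year at the given number of nines."""
    return MINUTES_PER_YEAR * 10 ** (-nines)


if __name__ == "__main__":
    for nines in (3, 4):
        pct = availability_from_nines(nines) * 100
        minutes = downtime_minutes_per_year(nines)
        print(f"{nines} nines ({pct:.2f}%): {minutes:.2f} min/year")
```

On this reading, a four-nines target allows about 52.6 minutes of downtime per year, compared with about 8.8 hours at three nines.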

Additional Technical Learnings from the Fairwater Architecture

- Single flat network integrates hundreds of thousands of GB200/GB300 GPUs into one coherent system.

- Two-story building design reduces cable lengths and minimizes latency across all GPUs in the cluster.

- Liquid cooling uses an extremely low-impact closed-loop system that reuses water for more than six years after an initial fill equal to ~20 homes’ annual consumption.

- 140 kW-per-rack, 1.36 MW-per-row density increases throughput for large training workloads.

- Up to 72 Blackwell GPUs per rack, each connected via NVLink, delivering 1.8 TB/s GPU-to-GPU bandwidth and over 14 TB of pooled memory accessible to each GPU.

- Ethernet-based two-tier fabric with 800 Gbps GPU links enables large-scale supercomputer operation using commodity switches and SONiC.

- Packet trimming, packet spray and high-frequency telemetry accelerate congestion control and retransmissions.

- AI WAN optical network extends Fairwater’s architecture across regions, integrating multiple supercomputer generations into a single AI superfactory.

- 120,000 new fiber miles added in the U.S. to expand AI-dedicated backbone reach.

- Power architecture supports 4×9 availability with reduced cost and avoids traditional dual-corded resiliency for GPU racks.

- Energy stability solutions: on-site energy storage, GPU self-throttling thresholds and supplementary workloads to counter power oscillations.

- Fungible workload allocation allows pre-training, fine-tuning, RLHF and synthetic-data workloads to run dynamically across Fairwater sites.

- Fit-for-purpose traffic segmentation maps AI job types to scale-up, scale-out or WAN paths to maximize utilization.

- Integration with Azure services including AKS Automatic and Cosmos DB enables scaling from training to global inference across tens of millions of compute cores.
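The packet spray item in the list above is easier to appreciate with a toy comparison. The sketch below contrasts conventional per-flow hashing, where every packet of a flow follows one link, with per-packet spraying across all equal-cost links. It is a simplified illustration, not Microsoft's implementation: the round-robin spraying policy, the CRC32 flow hash and all function names are assumptions made for the example.

```python
import zlib


def per_flow_load(flows: dict[str, int], n_links: int) -> list[int]:
    """Pin each flow to one link chosen by a hash of its ID."""
    load = [0] * n_links
    for flow_id, packets in flows.items():
        link = zlib.crc32(flow_id.encode()) % n_links
        load[link] += packets
    return load


def sprayed_load(flows: dict[str, int], n_links: int) -> list[int]:
    """Spread packets over all links in round-robin order (assumed policy)."""
    load = [0] * n_links
    next_link = 0
    for packets in flows.values():
        for _ in range(packets):
            load[next_link] += 1
            next_link = (next_link + 1) % n_links
    return load


def imbalance(load: list[int]) -> float:
    """Busiest link's load relative to the mean; 1.0 is perfectly even."""
    return max(load) / (sum(load) / len(load))


if __name__ == "__main__":
    # A few large flows, as is typical of collective traffic in training jobs.
    flows = {"a": 1000, "b": 1000, "c": 1000, "d": 1000}
    print("per-flow:", imbalance(per_flow_load(flows, 8)))
    print("sprayed: ", imbalance(sprayed_load(flows, 8)))
```

With four equal flows and eight links, per-flow hashing can use at most four links, so the busiest link carries at least twice the average load, while spraying spreads the same packets evenly. The trade-off is that sprayed packets can arrive out of order, which the receiving side has to tolerate.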

“Fairwater represents the next leap in Azure AI infrastructure, combining dense compute, sustainable operations and world-class networking systems to meet unprecedented global demand,” said Scott Guthrie.

🌐 Analysis

Microsoft’s Fairwater blueprint marks a shift toward AI-specific data center topologies that blend single-domain supercomputing with multi-site federation over a dedicated WAN. The emphasis on flattened networks, SONiC-based Ethernet fabrics and power-stability techniques underscores the rising engineering complexity as GPU clusters reach hundreds of thousands of accelerators, and it mirrors moves by hyperscale competitors building multi-gigawatt AI campuses.