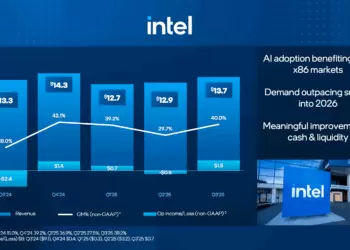

At Hot Chips 2024, Intel presented advancements across its AI and compute technologies. It showcased its integrated optical compute interconnect (OCI) chiplet, which is designed to meet the growing demands of AI data processing with high-speed, efficient connectivity for next-generation computing architectures. Intel also shared new details about its Intel Xeon 6 SoC, Lunar Lake client processors, and the Intel Gaudi 3 AI accelerator, which are aimed at improving performance across data centers, edge environments, and consumer devices.

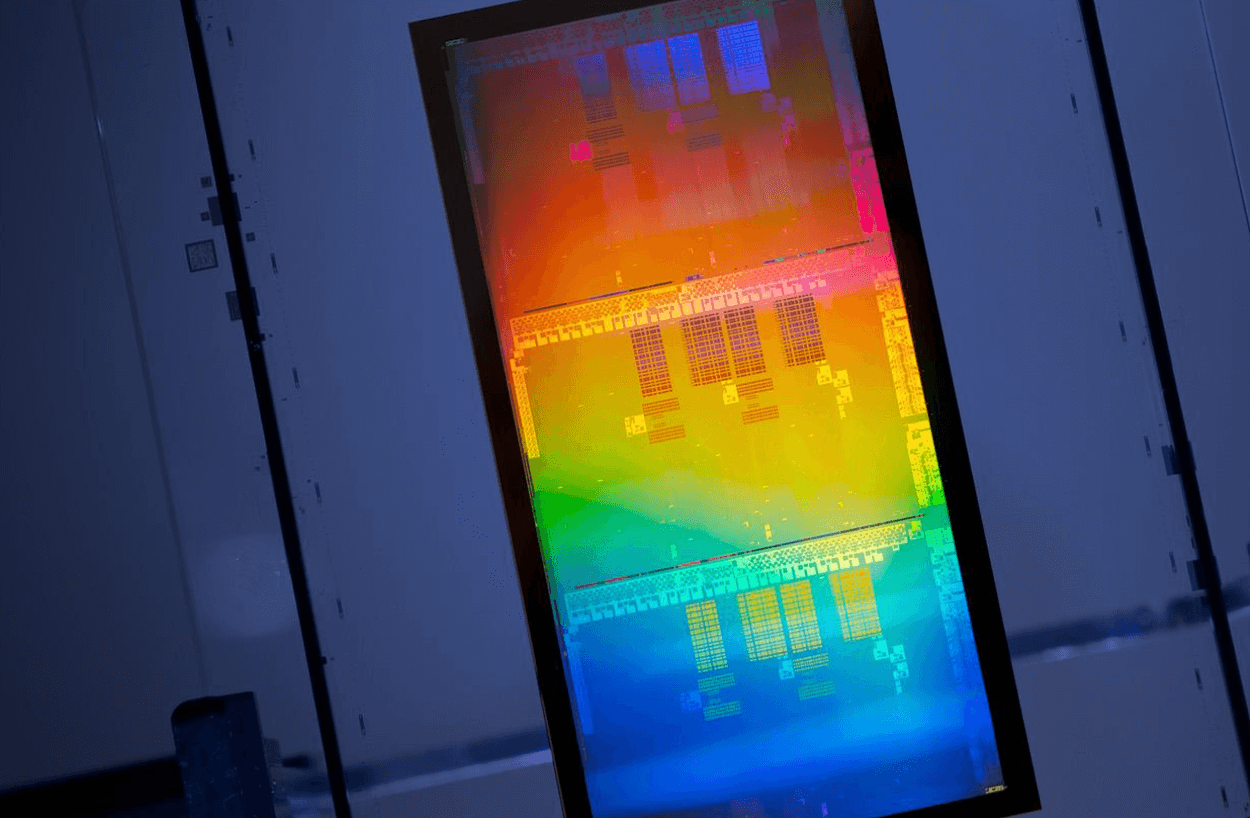

The OCI chiplet, which was demonstrated at OFC earlier this year, is a significant step forward in XPU-to-XPU connectivity. It supports 64 channels of 32 gigabits per second (Gbps) data transmission in each direction over up to 100 meters of optical fiber, promising higher bandwidth, lower power consumption, and longer reach for AI infrastructure.
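To put the channel figures in perspective, the short Python sketch below works out the aggregate raw line rate they imply. It takes the quoted 64 channels at 32 Gbps as a per-direction figure, as the summary list in this article states, and assumes that both directions can run at full rate simultaneously. The function name `aggregate_gbps` is illustrative only, and the result ignores any encoding or protocol overhead, so it should be read as an upper bound rather than delivered throughput.

```python
# Figures taken from the article: 64 channels at 32 Gbps each, per direction.
CHANNELS = 64
GBPS_PER_CHANNEL = 32


def aggregate_gbps(channels: int, gbps_per_channel: int) -> int:
    """Raw aggregate line rate for one direction, in Gbps (no overhead modeled)."""
    return channels * gbps_per_channel


per_direction = aggregate_gbps(CHANNELS, GBPS_PER_CHANNEL)
# Assumption: both directions run at full rate at the same time.
bidirectional = 2 * per_direction

print(f"Per direction: {per_direction} Gbps ({per_direction / 1000:.3f} Tbps)")
print(f"Bidirectional: {bidirectional} Gbps ({bidirectional / 1000:.3f} Tbps)")
```

Under these assumptions the chiplet's raw rate comes to roughly 2 Tbps in each direction, or about 4 Tbps counting both directions.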

In addition to the OCI chiplet, Intel highlighted the edge-optimized design of its new Xeon 6 SoC, which addresses challenges such as unreliable network connections and limited power availability. The Lunar Lake processor, aimed at the next generation of AI PCs, offers significant improvements in power efficiency and AI performance. The Intel Gaudi 3 AI accelerator, meanwhile, was presented as an answer to the scalability challenges of generative AI workloads, with optimized compute, memory, and networking architectures.

• Optical Compute Interconnect (OCI) Chiplet:
  • Supports 64 channels of 32 Gbps data transmission in each direction.
  • Operates over distances up to 100 meters over fiber optics.
  • Designed to enhance bandwidth, reduce power consumption, and extend connectivity reach.
  • Enables scalability of CPU/GPU clusters and supports novel computing architectures.

• Intel Xeon 6 SoC:
  • Up to 32 PCIe 5.0 lanes and 16 CXL 2.0 lanes.
  • 2x100G Ethernet and four to eight memory channels.
  • Edge-specific enhancements for rugged environments and industrial reliability.
  • Integrated AI acceleration for efficient AI workflow management.

• Lunar Lake Processor:
  • 40% lower system-on-chip power compared to previous generations.
  • Neural processing unit up to 4x faster for generative AI tasks.
  • 1.5x improvement in graphics performance with new Xe2 GPU cores.

• Intel Gaudi 3 AI Accelerator:
  • Optimized for compute, memory, and networking efficiency.
  • Features efficient matrix multiplication engines and two-level cache integration.
  • Extensive RoCE networking for cost-effective AI data center operations.

“Intel continuously delivers the platforms, systems, and technologies necessary to redefine what’s possible,” said Pere Monclus, CTO of Intel’s Network and Edge Group. “With proven expertise across the compute continuum, Intel is well-positioned to power the next generation of AI innovation.”