Adtran has introduced a next-generation AI Network Cloud (AINC)-interconnect solution, designed to meet the data transport demands of AI-driven workloads across hyperscalers, government, SLED (state, local and education), and enterprise sectors. The new platform leverages real-time traffic optimization to dynamically adjust optical capacity between data centers and edge locations. Built on Adtran’s FSP 3000 optical transport platform, the AINC solution integrates with Dell’s AI Factory to support scalable, sovereign AI services with enhanced flexibility, performance, and cost efficiency.

The AINC-interconnect is engineered to accommodate fluctuating bandwidth requirements, particularly for agentic AI at the edge and centralized generative AI model training. The solution delivers up to 50x performance acceleration, up to 20% improvement in GPU utilization, and reduces transport costs by up to 50%. It also supports programmable, policy-driven networks through Secured Token Wave Fiber, ensuring trusted and sovereign AI connectivity across both public and private domains.

Adtran’s offering includes Fast-TRX for high-performance core deployments and Fast Ramp-TRX for rapid edge scalability, enabling integration with existing network infrastructure. By moving away from static transport models and embracing dynamic optical networking, Adtran’s AINC solution lets customers build high-speed, low-latency networks tailored for real-time AI analytics, predictive modeling, and scalable automation.

- AINC-interconnect dynamically adjusts optical capacity based on AI workload demands

- Integrated with Dell’s AI Factory for scalable, enterprise-grade AI infrastructure

- Up to 50x performance acceleration and up to 20% improvement in GPU utilization

- Up to 50% reduction in transport costs

- Supports sovereign AI networking with Secured Token Wave Fiber

- Includes Fast-TRX and Fast Ramp-TRX upgrade paths for core and edge

“Our AINC-interconnect solution gives customers greater control over their AI service deployment, empowering them to scale without the constraints of legacy transport models,” said Ryan Schmidt, GM of optical transport at Adtran.

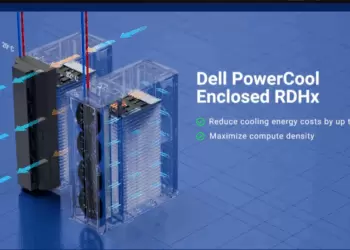

Dell introduced its AI Factory strategy in May 2024 as part of its broader initiative to enable end-to-end AI infrastructure across enterprise and public sector environments. Designed to simplify the deployment and scaling of AI workloads, the Dell AI Factory integrates compute, storage, networking, software, and services into a unified platform tailored for generative AI and large-scale model training. Key elements include PowerEdge servers optimized for GPU density, PowerScale storage, native support for NVIDIA AI Enterprise software, and orchestration tools for managing distributed AI workloads.

The platform is highly modular and supports both cloud and on-premises deployments, enabling customers to maintain data sovereignty and comply with regulatory requirements. Dell has partnered with NVIDIA, Intel, VMware, and Hugging Face, among others, to enhance ecosystem integration and model deployment flexibility. Customers across industries, including financial services, manufacturing, and defense, have adopted Dell’s AI Factory to accelerate AI innovation, with recent deployments including sovereign AI architectures and secure edge AI environments for government and hyperscale clients.