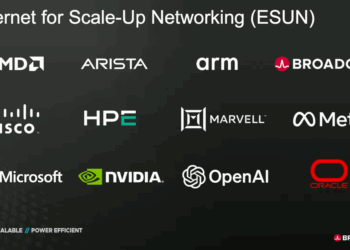

In a keynote at the AI Infrastructure Summit, Broadcom’s Ram Velaga argued that Ethernet is the only viable foundation for AI systems that must scale across racks and data centers. Citing projected demand for more than 70 million GPUs over the next five years, equivalent to 124 GW of new compute capacity, he said the industry must pivot to accelerators (XPUs) and networking architectures that prioritize bandwidth, efficiency, and reliability.

Velaga outlined three essential requirements for XPU scale-up: high bandwidth, efficient data transfer, and reliable connectivity (Slide 1). Current XPUs already deliver ~40 Tbps of HBM bandwidth, with next-generation designs expected to hit 100 Tbps. Connecting such devices efficiently requires networks that can handle tens of Tbps of I/O per accelerator, roughly two orders of magnitude beyond today’s 100 Gbps CPU I/O.
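The bandwidth figures quoted from the keynote can be checked with a few lines of arithmetic. The sketch below is illustrative only: the variable and function names are our own, and it takes the 10 Tbps lower bound of the XPU I/O target as the point of comparison.

```python
import math

# Figures as quoted in the keynote, expressed in Gbps.
CPU_IO_GBPS = 100          # today's CPU I/O
XPU_IO_GBPS = 10_000       # XPU I/O target, lower bound (10 Tbps)
HBM_NOW_GBPS = 40_000      # current XPU HBM bandwidth (~40 Tbps)
HBM_NEXT_GBPS = 100_000    # next-generation HBM bandwidth (100 Tbps)


def orders_of_magnitude(new: float, old: float) -> float:
    """Return the base-10 logarithm of the ratio new/old."""
    return math.log10(new / old)


io_gap = orders_of_magnitude(XPU_IO_GBPS, CPU_IO_GBPS)   # 2.0
hbm_growth = HBM_NEXT_GBPS / HBM_NOW_GBPS                # 2.5

print(f"XPU vs CPU I/O gap: {io_gap:.1f} orders of magnitude")
print(f"HBM bandwidth growth: {hbm_growth:.1f}x")
```

At the 10 Tbps lower bound the gap is exactly a factor of 100; any target in the "tens of Tbps" range widens it further.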

Ethernet, Velaga stressed, uniquely enables scale-out AI systems because it decouples accelerator design from the transport layer. Using open IEEE 802.3 standards together with link-level mechanisms such as link-layer retry (LLR) and credit-based flow control (CBFC) from the Ultra Ethernet Consortium, plus priority flow control (PFC), XPUs can communicate at terabit speeds while leaving room for vendor-specific innovation in memory access, scheduling, and load balancing (Slide 2). This clean separation of concerns ensures that AI clusters, whether confined to a rack, spanning rows of racks, or linking multiple data centers, can operate as a single distributed supercomputer (Slide 3).

Key takeaways from Velaga’s talk:

- AI infrastructure must scale to 124 GW of compute, or ~70M GPUs, in five years

- Next-gen XPUs expected to reach 100 Tbps HBM bandwidth per device

- Networking must leap from 100 Gbps CPU I/O to >10 Tbps XPU I/O

- Ethernet delivers scalability with open IEEE standards and UEC enhancements

- Scale-up (in-rack) and scale-out (across racks/data centers) rely on Ethernet fabrics

- Proprietary interconnects impose vertical lock-in and cannot match ecosystem breadth

“Ethernet will play a very, very important role in what we view as democratization of accelerators,” Velaga concluded, pointing to Broadcom’s roadmap of ultra-high-bandwidth, low-latency switches optimized for AI.

🌐 Analysis: Broadcom is betting that Ethernet’s openness and scale will win against InfiniBand in the race to build AI supercomputers. With Tomahawk 6 and Tomahawk Ultra covering scale-out and scale-up, and Jericho covering inter-data-center fabrics, Broadcom is positioning Ethernet as the de facto transport for distributed AI clusters. Hyperscalers’ growing preference for Ethernet-based AI networking suggests Broadcom’s strategy is in step with industry momentum.
