NVIDIA’s Ian Buck used his keynote at the AI Infrastructure Summit to lay out the company’s latest architectural advances for scaling inference workloads across hyperscale data centers. Buck, vice president of hyperscale and high-performance computing, described the rising complexity of inference, where responsiveness, throughput, model size, and energy efficiency must all be balanced, and introduced new hardware and system platforms designed to raise performance per dollar for “AI factories.”

Buck framed the challenge as one of infrastructure design at gigawatt scale, where GPU architectures, rack-level systems, interconnects, and software optimization converge. He pointed to software improvements that have already doubled throughput on Blackwell GPUs since launch, and to the need for specialized processors dedicated to long-context inference, where token counts can run into the millions. “Our data centers are measured not in square footage, but in megawatts,” he said, underscoring the importance of energy efficiency in hyperscale AI deployments.

The presentation spanned NVIDIA’s full-stack approach, from Spectrum-X networking to Blackwell GPUs and the newly announced CPX processors. Buck also emphasized the role of the open ecosystem, including PyTorch, OCP, and MLPerf benchmarks, in driving measurable innovation across both hardware and software layers.

Key announcements and insights from the presentation included:

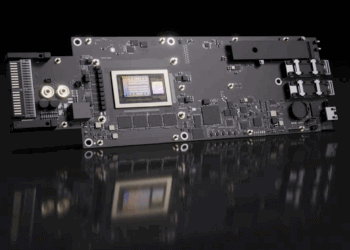

- CPX GPU: A new processor dedicated to long-context inference, with attention acceleration cores, GDDR7 memory, and integrated video encode/decode for multimodal workloads.

- NVL6 Rack Platform: Slated to launch in 2H26, NVL6 is designed to deliver 3.6x the compute density per rack of GB200, with 75 TB of memory and 4 PB of storage, while fitting standard mechanical footprints.

- Blackwell GPU Optimization: Software alone has doubled inference throughput on Blackwell since its release, highlighting the performance gains available through compiler and runtime advances.

- Ecosystem Adoption: Partners such as Together AI and Fireworks AI are deploying optimized context-serving pipelines across 8,000+ GPUs for real-world inference aggregation.

- Benchmark Leadership: NVIDIA continues to lead in MLPerf inference results, setting performance records across each GPU generation.

- Reference Architecture: A new AI Data Center Reference Design initiative, developed with Schneider Electric, Jacobs, and others, aims to standardize power, cooling, and mechanical systems for multi-gigawatt AI campuses.

“Our contribution is to deliver higher performance per dollar,” Buck told the audience. “When you do that, you create AI factories that are not only technically possible, but economically viable.”

🌐 Analysis: NVIDIA’s keynote demonstrates how inference, not just training, is now shaping the economics of hyperscale AI. The introduction of CPX as a dedicated context processor addresses the bottleneck of million-token workloads in code generation and multimodal AI. With NVL6 racks and reference data center designs, NVIDIA is moving beyond GPUs to influence how future AI campuses are engineered end to end. This approach places it ahead of AMD and Intel, which remain focused on training performance, and signals a new competitive phase centered on inference scalability and efficiency.
