Alchip Technologies and Ayar Labs introduced a co-packaged optics (CPO) solution aimed at breaking copper interconnect bottlenecks in large-scale AI datacenter deployments. The announcement came at the 2025 TSMC North America Open Innovation Platform (OIP) Ecosystem Forum, where the companies demonstrated how optical I/O can extend scale-up network connectivity beyond rack boundaries while improving efficiency.

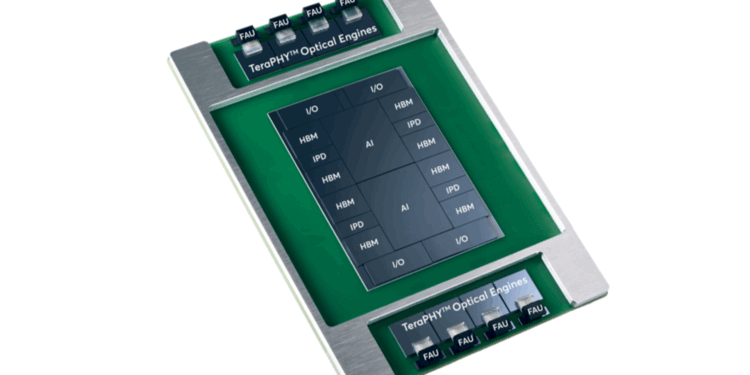

The joint solution integrates Ayar Labs’ TeraPHY optical engines with Alchip’s ASIC and advanced packaging expertise on a common substrate. The architecture combines two full-reticle AI accelerators, four protocol converter chiplets, eight high-bandwidth memory (HBM) stacks, and eight TeraPHY engines. By bringing optics directly into the package, the design enables high bandwidth, low latency, and low energy interconnects while maintaining signal integrity and thermal performance at the package level.

The platform also incorporates integrated passive devices and customized capacitors to improve package response and power efficiency. Protocol converter chiplets bridge between system-on-chip interconnects (UCIe-A) and streaming interconnects (UCIe-S), while supporting multiple scale-up standards such as UALink, PCIe, SUE, and Ethernet. This flexibility positions the system as a composable fabric for XPU-to-XPU, XPU-to-switch, and switch-to-switch links—capabilities needed to scale AI workloads across racks.

By moving optical connectivity closer to the compute die, Alchip and Ayar Labs enable more than 100 Tbps of bandwidth per accelerator and support for over 256 optical scale-up ports per device. This capability allows thousands of AI accelerators to act as a single logical system across multiple racks. Compared to pluggable optics, the co-packaged approach reduces latency, increases radix, and delivers energy efficiency while addressing the growing size and complexity of AI models.

Alchip and Ayar Labs are now working with select customers on integrating the co-packaged optics solution into next-generation AI accelerators and switches. Additional collateral, reference architectures, and design options will be shared with qualified design teams.

- Joint innovation targets multi-rack AI clusters with optical scale-up networking

- Solution supports >100 Tbps per accelerator and >256 optical ports per device

- Built on Ayar Labs TeraPHY optical engines and Alchip advanced packaging

- Supports UCIe standard and flexible chiplet integration

- Customers include hyperscalers and enterprise AI design teams

“AI has reached an inflection point where traditional interconnects are limiting performance, power, and scalability,” said Vladimir Stojanovic, CTO and co-founder of Ayar Labs. “Our collaboration with Alchip enables a CPO solution for AI scale-up, pioneering new, energy-efficient system architectures that will power innovations for hyperscalers and enterprise AI customers, and accelerate AI proliferation, globally.”

🌐 Analysis: By introducing a package-level design that combines accelerators, HBM, chiplets, and optical I/O, Alchip and Ayar Labs are pushing CPO closer to deployment in production AI systems. The architecture supports composability and interoperability via UCIe standards, addressing the practical needs of hyperscalers who must manage latency, radix, and energy at scale.

🌐 We’re tracking the latest developments in networking silicon. Follow our ongoing coverage at: https://convergedigest.com/category/semiconductors/

🌐 We’re launching the “Data Center Networking for AI” series on NextGenInfra.io and inviting companies building real solutions—silicon, optics, fabrics, switches, software, orchestration—to share their views on video and in our expert report. To get involved, send a note to jcarroll@convergedigest.com or info@nextgeninfra.io.