AMD laid out an ambitious plan to lead the $1 trillion compute market during its Financial Analyst Day in New York, projecting a revenue compound annual growth rate (CAGR) above 35% and non-GAAP EPS above $20 within five years. CEO Dr. Lisa Su said AMD is “entering a new era of growth fueled by leadership technology roadmaps and accelerating AI momentum,” pointing to rapid traction across data center, AI, and embedded markets.

The company framed its long-term growth strategy around four pillars: data center leadership, accelerated AI performance, open software, and expanded participation in the embedded and semi-custom silicon markets. AMD expects its data center business alone to surpass $100 billion in annual revenue, growing at a CAGR above 60%, with AI revenue rising at a CAGR above 80%. Su said this next phase will be powered by AMD’s unified compute platform combining EPYC CPUs, Instinct GPUs, Pensando networking, and ROCm software.

AI and Data Center Platform Expansion

AMD’s latest roadmap positions it to compete head-to-head with NVIDIA and Intel across hyperscale and enterprise markets. The Instinct MI350 Series GPU, already deployed at scale by Oracle Cloud, is AMD’s fastest-ramping accelerator to date. Next up is the MI450 Series, launching with the Helios rack-scale platform in Q3 2026. Helios will deliver 3.6 TB/s of bandwidth per GPU and support up to 72 GPUs per rack, for roughly 260 TB/s of aggregate throughput, with GPUs interconnected via UALink and Ultra Ethernet (UEC) fabrics. The system supports shared memory pods across GPUs, enabling large-scale model training with seamless memory access and six-plane fault-tolerant networking.
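
The rack-level figure follows from the per-GPU figure. A minimal sketch of that arithmetic, assuming the 260 TB/s aggregate is simply the per-GPU bandwidth multiplied by the maximum GPU count (the article does not say how AMD derives it; the variable names are illustrative):

```python
# Figures quoted in the article; names are illustrative, not AMD terminology.
PER_GPU_TBPS = 3.6   # TB/s of bandwidth per GPU
GPUS_PER_RACK = 72   # maximum GPUs in one Helios rack

# Assumption: aggregate throughput = per-GPU bandwidth x GPU count.
aggregate_tbps = PER_GPU_TBPS * GPUS_PER_RACK

print(f"Aggregate per rack: {aggregate_tbps:.1f} TB/s")
```

Under that assumption the product is 259.2 TB/s, which is consistent with the rounded 260 TB/s figure.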

Helios Explained: AMD describes Helios as the company’s first rack-scale AI platform—a fully integrated, open-architecture system that unifies compute, acceleration, networking, and software into a cohesive design. Unlike traditional server-based clusters, Helios treats an entire rack as a single high-performance computing domain. Each rack combines AMD EPYC CPUs, Instinct GPUs, and Pensando NICs, connected via 5th Gen Infinity Fabric and UALink, to operate as one coherent system. This architecture minimizes data movement overhead, boosts inter-GPU bandwidth, and delivers exascale-class efficiency in a compact footprint. Helios effectively represents AMD’s blueprint for the AI “factory” of the future—an approach designed for modular expansion, allowing hundreds of racks to link together seamlessly across data centers.

In 2027, AMD plans to launch the MI500 Series GPUs and EPYC “Verano” CPUs, extending its annual cadence of CPU-GPU-network co-design. The company said its “Venice” EPYC CPUs, due in 2026, will offer industry-leading density, power efficiency, and AI-optimized instruction sets for inference and general compute workloads. Combined, these systems will underpin the so-called AI factory of the future, capable of scaling from a single rack to globally distributed clusters.

Networking as a Growth Engine

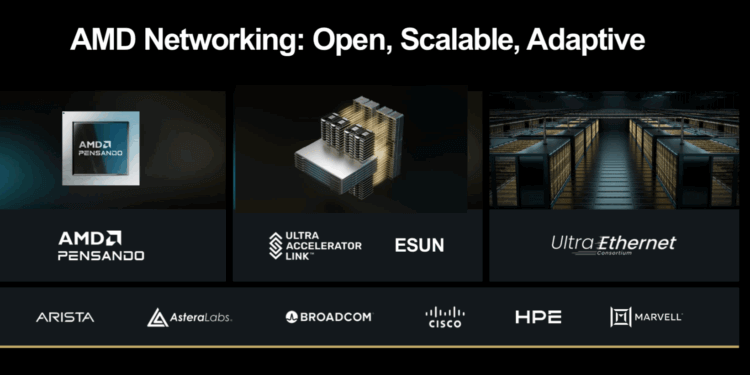

Networking was a central theme of AMD EVP Forrest Norrod’s presentation, which underscored that AI performance is increasingly network-bound. AMD’s Pensando Pollara and Vulcano AI NICs form the connective tissue of its Helios architecture. The Pollara 400 NIC delivers 400 Gbps throughput today, while the upcoming Vulcano NIC will double that to 800 Gbps, enabling Ultra Ethernet-ready communication across massive GPU clusters.

AMD outlined a four-tiered architecture for networking at AI scale:

- Front-End: Connects users, storage, and applications while offloading networking, security, and storage functions from CPUs and GPUs. Pensando’s programmable P4 engines perform concurrent offloads at line-rate speeds with stateful firewall and encryption.

- Scale-Up: Couples hundreds of GPUs into single compute domains with 3.6 TB/s per GPU and shared pod memory—allowing GPUs to act as a unified accelerator.

- Scale-Out: Links hundreds of thousands of GPUs via UEC-ready protocols, improving reliability versus RoCEv2 while lowering total cost of ownership through multipath and packet-spray fabric designs. AMD’s internal benchmarks showed a reduction of up to 58% in switching costs compared to conventional fat-tree networks.

- Scale-Across: Federates geographically distributed data centers into giga-scale systems with intelligent traffic management and adaptive load balancing.

This end-to-end architecture positions AMD to power AI workloads ranging from local inference clusters to global, low-latency model-serving fabrics. AMD’s networking strategy—backed by the UALink Consortium and Ultra Ethernet Consortium—is built entirely on open standards, ensuring interoperability across heterogeneous systems.

ROCm and Developer Ecosystem

The ROCm open software stack remains a cornerstone of AMD’s AI platform strategy. AMD reported a 10× increase in downloads year-over-year, with more than 2 million models now supported on Hugging Face. ROCm now integrates deeply with leading frameworks, including PyTorch, TensorFlow, JAX, Triton, vLLM, ComfyUI, and Ollama, and supports open-source projects like Unsloth for lightweight model optimization. The ROCm 6.4 update adds faster compilation, greater framework compatibility, and new tools for automated kernel generation, further simplifying GPU programming for developers.

Physical AI and Embedded Growth

AMD also expanded on its vision for “physical AI,” where compute moves beyond the cloud into machines, vehicles, and industrial control systems. The embedded division, strengthened by the Xilinx acquisition, has evolved from an FPGA-centric business into a multi-platform growth engine spanning adaptive SoCs, embedded x86 processors, and semi-custom silicon. AMD claims more than $50 billion in design wins since 2022 and expects to exceed 70% market share in adaptive compute.

Its next phase targets co-development programs with automotive, robotics, and industrial partners seeking integrated AI compute pipelines—from perception (sensor fusion and image processing) to decision and actuation. AMD says it’s uniquely positioned to offer this full stack through a single adaptive platform combining CPU, GPU, FPGA, and NPU IP blocks.

Long-Term Outlook

AMD’s data center business remains the primary growth driver, but the company expects expanding margins and diversification across segments. Financial targets include:

- >35% company-wide revenue CAGR and >35% operating margin

- >60% CAGR for the data center segment; >10% CAGR across client, gaming, and embedded

- >50% server CPU market share and >40% client CPU share

- Annual accelerator platform cadence (Helios 2026 → Next-Gen 2027)
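
To put the CAGR targets in perspective, the sketch below compounds each quoted rate over a five-year horizon. The five-year window is an assumption borrowed from the EPS target at the top of the article, the rates are treated as exact floors rather than forecasts, and `growth_multiple` is a hypothetical helper written for this illustration:

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Return the cumulative revenue multiple implied by compounding `cagr` for `years`."""
    return (1.0 + cagr) ** years

# Rates quoted in the article, expressed as fractions.
targets = {
    "Company-wide (>35%)": 0.35,
    "Data center (>60%)": 0.60,
    "AI (>80%)": 0.80,
    "Client, gaming, embedded (>10%)": 0.10,
}

HORIZON_YEARS = 5  # assumption: same horizon as the EPS target

for label, rate in targets.items():
    multiple = growth_multiple(rate, HORIZON_YEARS)
    print(f"{label}: at least {multiple:.1f}x over {HORIZON_YEARS} years")
```

On these assumptions, a 35% CAGR implies revenue about 4.5 times the starting level after five years, 60% implies roughly 10.5 times, and 80% implies close to 19 times.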

Dr. Lisa Su summarized: “With the broadest product portfolio and our deepening partnerships, AMD is uniquely positioned to lead the next generation of high-performance and AI computing. We see tremendous opportunity ahead to deliver sustainable, long-term growth.”

🌐 Analysis: AMD’s 2025 Financial Analyst Day solidified its intent to build a unified compute stack that integrates CPUs, GPUs, networking, and open software—challenging NVIDIA’s closed ecosystem and Intel’s server incumbency. The Helios rack-scale architecture and Vulcano NIC extend AMD’s reach deep into hyperscale networking, while the annual cadence strategy signals execution discipline. Its expansion into “physical AI” through semi-custom silicon could make AMD a key enabler of intelligent edge systems, complementing its strong data-center pipeline.
