AMD’s strategy to tightly couple high-memory MI300X GPUs with high-bandwidth Pensando Pollara networking took a major step forward as Zyphra completed large-scale pretraining of the ZAYA1-base foundation model entirely on an AMD platform. The run demonstrates that AMD’s combined compute-network architecture can support frontier-class AI training, delivering more than 750 PFLOPs of max achievable throughput across an IBM Cloud–hosted 128-node cluster co-engineered with Zyphra.

The AMD architecture—192GB HBM per MI300X GPU paired with eight 400Gbps Pollara NICs per node—allowed Zyphra to use a simplified data-parallel strategy while saturating 3.2 Tbps of per-node bandwidth in a rails-only topology. Zyphra published the first systematic collective-communication benchmarks at this scale on the AMD stack, analyzing Pollara’s performance for all_reduce, reduce_scatter, and all_gather operations. Using ROCm components including Primus, AITER, and RCCL, along with custom HIP kernels, the system sustained competitive MoE throughput and maintained robustness through distributed checkpointing and an automated fault-tolerance framework.
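Collective benchmarks of this kind are usually reported as "bus bandwidth," the convention documented for nccl-tests and also used by rccl-tests on ROCm. In that convention, measured algorithm bandwidth (bytes divided by time) is scaled by a per-collective factor so that all_reduce, reduce_scatter, and all_gather results can be compared with link speed. The sketch below assumes that convention; Zyphra's published numbers may be computed differently. The example measurement is hypothetical, and eight GPUs per node (one NIC per GPU) is an assumption.

```python
"""Convert a measured collective time into bus bandwidth (sketch).

Follows the bus-bandwidth convention documented for nccl-tests, which
rccl-tests on ROCm also uses. The example measurement below is hypothetical.
"""

# ASSUMPTION: one 400 Gbit/s NIC per GPU (8 NICs on an 8-GPU node) -> 50 GB/s per GPU.
PER_GPU_NIC_GB_PER_S = 400 / 8


def busbw_factor(op: str, n_ranks: int) -> float:
    """Correction factor from algorithm bandwidth to bus bandwidth."""
    if n_ranks < 1:
        raise ValueError("n_ranks must be >= 1")
    if op == "all_reduce":
        # In a ring all_reduce each rank sends 2(n-1)/n of the buffer.
        return 2 * (n_ranks - 1) / n_ranks
    if op in ("reduce_scatter", "all_gather"):
        # Each rank sends (n-1)/n of the buffer.
        return (n_ranks - 1) / n_ranks
    raise ValueError(f"unsupported collective: {op}")


def bus_bandwidth_gb_per_s(op: str, size_bytes: int, seconds: float, n_ranks: int) -> float:
    """Bus bandwidth in GB/s (1 GB = 1e9 bytes) for one timed collective."""
    algbw = size_bytes / seconds / 1e9  # algorithm bandwidth: bytes / time
    return algbw * busbw_factor(op, n_ranks)


if __name__ == "__main__":
    # Hypothetical: a 1 GiB all_reduce over 1,024 GPUs (128 nodes x 8) in 50 ms.
    bw = bus_bandwidth_gb_per_s("all_reduce", 2**30, 0.050, 1024)
    print(f"all_reduce busbw: {bw:.1f} GB/s (per-GPU NIC limit {PER_GPU_NIC_GB_PER_S:.0f} GB/s)")
```

Comparing bus bandwidth with the per-GPU NIC rate is one rough way to judge how close a collective comes to saturating a rails-only fabric. Intra-node hops travel over the GPUs' own links rather than the NICs, so the comparison is only approximate.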

This AMD-driven training platform supported the full pretraining of ZAYA1-base, an 8.3B-parameter MoE++ model with 760M active parameters. Architectural features include Compressed Convolutional Attention, which provides an 8× reduction in KV-cache size, and an MLP-based router designed for top-k=1 expert routing. Benchmarks show ZAYA1-base outperforming Llama-3-8B and OLMoE-1B-7B in reasoning, mathematics, and coding, exceeding Gemma3-12B on several math and coding tasks, and matching state-of-the-art 4B dense models such as Qwen3-4B. Zyphra attributes the results to model design choices combined with AMD’s maturing compute, networking, and software stack.
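ZAYA1-base sends each token to a single expert (top-k=1) chosen by an MLP-based router. The sketch below shows generic top-1 routing in which a small two-layer MLP scores the experts. The layer sizes, ReLU activation, softmax gating, and toy weights are hypothetical choices made for illustration. They are not Zyphra's router design.

```python
"""Generic top-1 routing with a small MLP router (illustrative sketch only)."""
import math
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]  # row-major: one row per output unit


def matvec(w: Matrix, x: Vector) -> Vector:
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def relu(v: Vector) -> Vector:
    return [max(0.0, a) for a in v]


def softmax(v: Vector) -> Vector:
    m = max(v)  # subtract the max for numerical stability
    exps = [math.exp(a - m) for a in v]
    total = sum(exps)
    return [e / total for e in exps]


def route_top1(x: Vector, w1: Matrix, w2: Matrix) -> Tuple[int, float]:
    """Return (expert index, gate weight) for one token.

    Hypothetical two-layer MLP router: hidden = relu(W1 x), logits = W2 hidden,
    with one logit per expert. With top-k = 1 the token goes to exactly one
    expert, whose output would be scaled by that expert's softmax probability.
    """
    logits = matvec(w2, relu(matvec(w1, x)))
    probs = softmax(logits)
    expert = max(range(len(probs)), key=probs.__getitem__)
    return expert, probs[expert]


if __name__ == "__main__":
    # Toy dimensions: 4-dim token, 3-unit hidden layer, 4 experts.
    w1 = [[1.0, 0.0, 0.0, 0.0],
          [0.0, 1.0, 0.0, 0.0],
          [0.0, 0.0, 1.0, 1.0]]
    w2 = [[1.0, 0.0, 0.0],
          [0.0, 1.0, 0.0],
          [0.0, 0.0, 1.0],
          [0.5, 0.5, 0.5]]
    token = [0.2, -1.0, 0.7, 0.9]
    print(route_top1(token, w1, w2))
```

With top-k=1, only one expert's feed-forward block runs for each token. This is part of how a model can hold 8.3B parameters while activating roughly 760M of them per token.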

• Platform: AMD Instinct MI300X GPUs (192GB HBM), ROCm stack

• Networking: Eight 400Gbps Pensando Pollara NICs per node; 3.2 Tbps/node

• Cluster: 128 nodes on IBM Cloud; >750 PFLOPs max achievable throughput

• Software: Primus, AITER, RCCL; custom HIP kernels for the Muon optimizer and fused norms

• Benchmarks: First large-scale Pollara collective-communication microbenchmarks

• Model: ZAYA1-base (8.3B parameters; 760M active) trained fully on AMD silicon

• Results: Outperforms Llama-3-8B and OLMoE-1B-7B; exceeds Gemma3-12B on several math and coding tasks; competitive with Qwen3-4B

• Infrastructure: Aegis fault-tolerance system; 10× faster distributed checkpointing
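The headline figures can be sanity-checked with simple arithmetic. The sketch below reproduces the 3.2 Tbps per-node figure and computes the share of parameters active per token. It also gives a rough per-GPU memory estimate for holding a full model replica, since plain data parallelism without sharding keeps complete weights, gradients, and optimizer state on every GPU. The figure of 16 bytes per parameter is an assumption, a common rule of thumb for mixed-precision training with an Adam-style optimizer, and does not come from the source. Zyphra used Muon, and activation memory is ignored.

```python
"""Back-of-envelope checks on the headline figures (rough sketch)."""

NICS_PER_NODE = 8
NIC_GBITS = 400            # Gbit/s per Pollara NIC
TOTAL_PARAMS = 8.3e9       # ZAYA1-base total parameters
ACTIVE_PARAMS = 760e6      # parameters active per token
HBM_GB = 192               # HBM per MI300X

# ASSUMPTION: ~16 bytes/parameter (bf16 weights + bf16 grads + fp32 master
# weights + two fp32 Adam moments). Muon typically keeps less optimizer state,
# and activations are not counted, so this is only a rough check.
BYTES_PER_PARAM_ASSUMED = 16


def node_bandwidth_tbps() -> float:
    return NICS_PER_NODE * NIC_GBITS / 1000


def node_bandwidth_gb_per_s() -> float:
    return NICS_PER_NODE * NIC_GBITS / 8  # Gbit/s -> GB/s


def active_fraction() -> float:
    return ACTIVE_PARAMS / TOTAL_PARAMS


def replica_state_gb(bytes_per_param: float = BYTES_PER_PARAM_ASSUMED) -> float:
    return TOTAL_PARAMS * bytes_per_param / 1e9


if __name__ == "__main__":
    print(f"per-node bandwidth: {node_bandwidth_tbps():.1f} Tbps = {node_bandwidth_gb_per_s():.0f} GB/s")
    print(f"active parameters: {active_fraction():.1%} of total")
    print(f"weights + grads + optimizer state (assumed): {replica_state_gb():.1f} GB of {HBM_GB} GB HBM")
```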

“We set out to demonstrate end-to-end large-scale pretraining on the combined AMD platform, and the results show that AMD GPUs and networking provide a robust, competitive alternative for frontier-scale AI,” Zyphra said.

This summary is based on AMD’s technical blog post, available at: https://www.amd.com/en/blogs/2025/zyphra-demonstrates-large-scale-training-on-amd-with-zaya1.html

🌐 Analysis

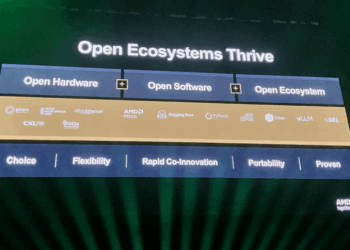

Zyphra’s results strengthen AMD’s case for a vertically aligned compute-and-networking architecture: MI300X GPUs with large HBM footprints paired with Pollara’s multi-hundred-gigabit fabric. This combination positions AMD to compete more directly with NVIDIA’s Hopper and Blackwell GPUs, combined with InfiniBand or Spectrum-X networking, on large MoE and dense LLM workloads. The systematic Pollara benchmarks fill a long-standing visibility gap around AMD’s networking capabilities, and IBM Cloud’s involvement suggests that cloud operators are beginning to treat MI300X clusters as viable training capacity at scale.

Zyphra is an AI research and infrastructure company headquartered in London, focused on building large-scale Mixture-of-Experts (MoE) foundation models and the distributed training systems required to support them. Founded in 2024 by former Google DeepMind and academic researchers, the company develops its own training stack optimized for efficiency on heterogeneous GPU clusters, with a mission to make frontier-class AI more economically scalable. Zyphra’s core technology includes the ZAYA family of MoE models and a custom software framework for expert routing, parallelism, and high-throughput training. The company’s leadership combines backgrounds in deep learning, large-model systems engineering, and cloud-scale orchestration. Zyphra has reportedly raised early-stage venture funding from European and U.S. investors, though details remain undisclosed. It also collaborates with hardware partners such as AMD to demonstrate training performance across different GPU and networking architectures.