At the 2025 OCP Global Summit, AMD CTO Mark Papermaster delivered a powerful keynote emphasizing the transformative impact of open collaboration in the AI infrastructure era. Speaking to a packed audience in San Jose, Papermaster underscored that “AI is taking off exponentially,” citing a market projected to surge from $45 billion in 2023 to $500 billion by 2028, a compound annual growth rate of roughly 60%.
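As a quick sanity check on the growth figure quoted above, the implied compound annual growth rate can be recomputed from the two endpoints. This is a minimal sketch: the helper name `cagr` is illustrative, and it assumes the 2023 and 2028 figures are year-end values spanning five annual periods, which the keynote summary does not state explicitly.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the keynote: $45B (2023) to $500B (2028).
# Assumption: five annual compounding periods between the two endpoints.
rate = cagr(45e9, 500e9, 2028 - 2023)
print(f"Implied CAGR: {rate:.1%}")
```

Under these assumptions the result comes out near 62%, in line with the roughly 60% rate cited in the keynote.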

Papermaster reaffirmed AMD’s deepening engagement with the Open Compute Project (OCP), including the appointment of Robert Hormuth, CVP of Data Center Architecture and Strategy, to the OCP Board of Directors. He credited OCP’s open standards for accelerating innovation across data centers, energy systems, and chip design—calling openness “fundamental to the AI era.”

He described how the AI boom is driving the buildout of gigawatt-scale data centers and sovereign AI facilities worldwide, forcing the industry to focus on total system optimization—power, cooling, and interoperability. “Every wave of computing acceleration,” he noted, “has been powered by open ecosystems. They create speed, scale, and innovation that closed systems cannot match.”

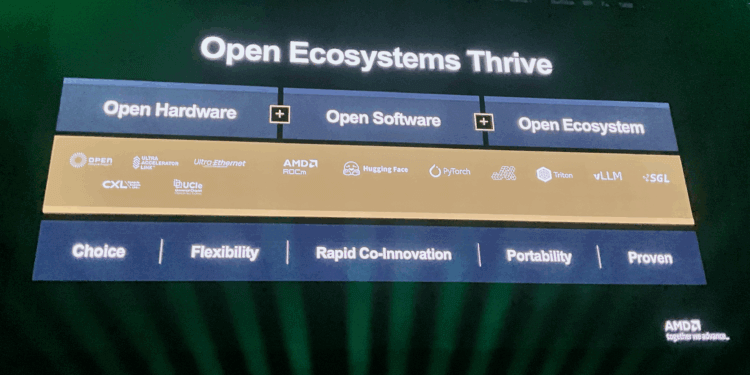

AMD’s open philosophy is embodied in its ROCm software stack, open interconnects, and Helios rack reference design, all built on OCP-aligned standards. Papermaster highlighted AMD’s leadership in multiple open consortia, including UALink (scale-up), Ultra Ethernet Consortium (scale-out), and Caliptra (confidential computing), along with AMD’s ongoing collaboration with Intel in the x86 Ecosystem Advisory Group.

Highlights from the AMD OCP Keynote

- AI Market Surge: From $45 B in 2023 to $500 B by 2028, with inference workloads growing at ~80% CAGR.

- OCP Leadership: AMD’s Robert Hormuth joins the OCP Board, strengthening AMD’s contributions to open data center architecture.

- AI Infrastructure Evolution:

  - Transition to gigawatt-scale and sovereign AI data centers.

  - Industry focus on total cost of ownership and energy-efficient design.

- Open Ecosystem Strategy:

  - AMD’s ROCm AI Stack celebrates 10 years in 2026 and now powers leading supercomputers and hyperscaler AI clusters.

  - Partnerships in the PyTorch Foundation, OpenAI Triton Compiler, and vLLM to improve AI application portability.

  - Developer accessibility expanded through ROCm support on Radeon graphics and Ryzen CPUs across Windows and Linux.

- Helios Open Rack:

  - AMD’s Helios rack, co-developed with ZT Systems, adopts the new Open Rack Wide specification donated by Meta to OCP.

  - Integrates open standards: DC-MHS, CXL, PCIe 6.0, UALink, and OCP attestation for security.

- Open Interconnect Leadership:

  - Founding member of UALink (GPU-to-GPU scale-up) using AMD’s Infinity Fabric IP.

  - Founding member of Ultra Ethernet Consortium (UEC) for scale-out networks; UEC 1.0 already shipping.

  - New collaboration on Ethernet for Scale-Up Networking (ESUN) to enable multiple transport protocols on Ethernet.

- Confidential Computing:

  - 5th Gen EPYC CPUs extend trusted I/O to accelerators and peripherals.

  - MI400 GPU (2026) to support OCP attestation for end-to-end data protection.

- x86 Ecosystem Advisory Group Success:

  - AMD and Intel jointly advancing ISA extensions for vector and matrix math, improving memory safety and interoperability.

“History shows that open ecosystems win. They drive faster progress, broader adoption, and greater trust.

The difference today is speed—AI is moving so quickly that collaboration isn’t just a success factor, it’s fundamental.”

— Mark Papermaster, CTO, AMD