Broadcom introduced Thor Ultra, an 800G Ethernet Network Interface Card (NIC) designed specifically for AI scale-out networking and fully compliant with the open Ultra Ethernet Consortium (UEC) specification. The new NIC targets large AI clusters with hundreds of thousands of XPUs running trillion-parameter workloads.

Thor Ultra represents a major advance in open AI networking by modernizing RDMA for distributed AI systems. The NIC adds multipathing, out-of-order packet delivery, selective retransmission, and programmable congestion control—features that go beyond traditional RDMA capabilities. These UEC-compliant innovations aim to improve load balancing, reduce congestion, and lower job completion times in large-scale training and inference environments.
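To make the contrast with traditional RDMA concrete, here is a minimal sketch of why selective retransmission and out-of-order delivery matter. It is a toy model, not Broadcom's or the UEC's implementation: the function and class names are hypothetical, the go-back-N behavior stands in for a classic in-order transport, and the model assumes the whole message fits in one send window and that retransmissions always succeed.

```python
from typing import Dict, List, Set


def go_back_n_resends(total: int, lost: Set[int]) -> List[int]:
    """Packets resent by an in-order (go-back-N style) sender.

    Everything from the first lost sequence number onward is resent,
    because the receiver discards packets that arrive after a gap.
    """
    if not lost:
        return []
    return list(range(min(lost), total))


def selective_resends(total: int, lost: Set[int]) -> List[int]:
    """Packets resent when only the missing sequence numbers are requested."""
    return sorted(s for s in lost if 0 <= s < total)


class OutOfOrderReceiver:
    """Toy receiver that accepts packets in any arrival order.

    Each packet is placed by sequence number, so packets sprayed across
    multiple paths do not need to arrive in order.
    """

    def __init__(self, total: int) -> None:
        self.total = total
        self.slots: Dict[int, bytes] = {}

    def deliver(self, seq: int, payload: bytes) -> None:
        self.slots[seq] = payload

    def missing(self) -> List[int]:
        # These are the only packets a selective-retransmit sender must resend.
        return [s for s in range(self.total) if s not in self.slots]

    def message(self) -> bytes:
        if self.missing():
            raise ValueError("message incomplete")
        return b"".join(self.slots[s] for s in range(self.total))


if __name__ == "__main__":
    lost = {2, 5}
    print("go-back-N resends:", go_back_n_resends(8, lost))
    print("selective resends:", selective_resends(8, lost))
```

In this model, losing two packets out of eight costs six retransmissions under go-back-N but only two with selective retransmission, and the gap widens as messages grow.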

The NIC reaches 800G using 200G/100G PAM4 SerDes and integrates security and programmability features such as line-rate encryption, signed firmware, and a programmable congestion control pipeline. It connects to the host over a PCIe Gen6 x16 interface and is offered in standard PCIe CEM and OCP 3.0 form factors. Thor Ultra complements Broadcom’s expanding Ethernet AI portfolio, which includes the Tomahawk 6, Tomahawk Ultra, Jericho 4, and Scale-Up Ethernet (SUE) solutions for high-performance AI fabrics.

Highlights:

• 800G AI Ethernet NIC fully compliant with the Ultra Ethernet Consortium specification

• Advanced RDMA features: packet-level multipathing, out-of-order delivery, selective retransmit

• Supports 200G/100G PAM4 SerDes with long-reach passive copper

• PCIe Gen6 x16 interface with line-rate encryption and device attestation

• Works with Broadcom Tomahawk 5/6 or any UEC-compliant switch

• Available in standard PCIe CEM and OCP 3.0 form factors

• Sampling now to customers

“Thor Ultra delivers on the vision of the Ultra Ethernet Consortium for modernizing RDMA for large AI clusters,” said Ram Velaga, senior vice president and general manager of Broadcom’s Core Switching Group. “It’s the first 800G Ethernet NIC designed from the ground up for the AI era.”

Hasan Siraj, Head of Software Products / Ecosystem at Broadcom, joins Jim Carroll from Converge Network Digest to explore how AI workloads fundamentally differ from traditional cloud traffic and why they demand revolutionary networking approaches.

As GPU clusters scale toward hundreds of thousands of nodes, the networking infrastructure faces unprecedented challenges. Siraj explains how AI’s “elephant flows” create bottlenecks that can consume 57% of infrastructure time, making network optimization critical for maximizing billion-dollar AI investments. The discussion covers RDMA modernization through the Ultra Ethernet Consortium, programmable congestion control mechanisms, and the technical innovations that enable clusters of 128,000 GPUs in simplified two-tier topologies.
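The two-tier figure can be sanity-checked with simple leaf-spine arithmetic. The sketch below assumes a non-blocking fabric built from identical switches, with half of each leaf's ports facing GPUs and half facing spines. The radix of 512 ports is an illustrative assumption, not a number given in the interview, and `two_tier_endpoints` is a hypothetical helper name.

```python
def two_tier_endpoints(radix: int) -> int:
    """Upper bound on endpoints in a non-blocking two-tier leaf-spine fabric.

    Assumes every switch has the same port count (radix). Each leaf splits
    its ports evenly between endpoints and spine uplinks, and each spine
    port connects to one leaf, so there can be at most `radix` leaves.
    """
    if radix <= 0 or radix % 2 != 0:
        raise ValueError("radix must be a positive even number")
    downlinks_per_leaf = radix // 2  # ports facing GPUs/NICs
    max_leaves = radix               # limited by the ports on each spine
    return downlinks_per_leaf * max_leaves


if __name__ == "__main__":
    # 512 is an assumed radix used only for illustration.
    print(two_tier_endpoints(512))
```

With the assumed radix of 512, the bound works out to 131,072 endpoints, which is in the same range as the 128,000-GPU figure. A smaller radix would require a third switching tier to reach that scale.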

**What You Will Learn:**

– Why AI workloads require fundamentally different networking approaches than traditional cloud applications

– How RDMA over Ethernet limitations are being addressed for massive GPU cluster deployments

– The role of programmable pipelines in implementing advanced congestion control schemes

– How line-rate encryption maintains security without performance penalties

– Broadcom’s comprehensive strategy for scale-up, scale-out, and cross-data center AI infrastructure

– The importance of multi-vendor ecosystem compatibility in preventing vendor lock-in

📚 CHAPTERS:

0:00:00 – Introduction to AI Networking Evolution

0:01:02 – AI Workload Challenges and Network Performance Requirements

0:03:13 – RDMA Modernization and Ultra Ethernet Consortium

0:05:32 – Performance and Scalability for Large GPU Clusters

0:06:42 – Ecosystem Openness and Multi-Vendor Compatibility

0:08:22 – Security Features and Line-Rate Encryption

0:09:58 – Broadcom’s Complete AI Infrastructure Portfolio

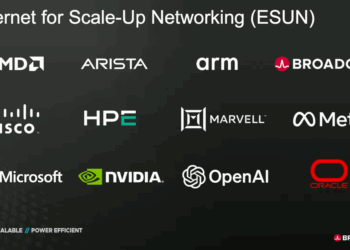

🌐 Analysis: Broadcom’s launch of Thor Ultra reinforces its commitment to open Ethernet for AI infrastructure, contrasting with proprietary interconnect ecosystems. With UEC compliance and integration across the Tomahawk and Jericho product lines, Broadcom positions itself as a central enabler for hyperscalers seeking flexible, standards-based AI networking. The timing also aligns with Broadcom’s expanding collaboration with OpenAI, announced this week, to deliver 10 GW of custom AI accelerator systems.
