Cerebras Systems disclosed progress in its mission to deliver a “brain-scale” AI solution capable of supporting neural network models with more than 120 trillion parameters.

Cerebras’ new technology portfolio contains four innovations: Cerebras Weight Streaming, a new software execution architecture; Cerebras MemoryX, a memory extension technology; Cerebras SwarmX, a high-performance interconnect fabric technology; and Selectable Sparsity, a dynamic sparsity harvesting technology.

- Cerebras Weight Streaming stores model parameters off-chip while delivering the same training and inference performance as if they were on-chip. This new execution model disaggregates compute and parameter storage, allowing researchers to scale model size and speed independently, and eliminates the latency and memory bandwidth issues that challenge large clusters of small processors. It is designed to scale from 1 to 192 CS-2s with no software changes.

- Cerebras MemoryX is a memory extension technology. MemoryX will provide the second-generation Cerebras Wafer Scale Engine (WSE-2) with up to 2.4 petabytes of high-performance memory, all of which behaves as if it were on-chip. With MemoryX, the CS-2 can support models with up to 120 trillion parameters.

- Cerebras SwarmX is a high-performance, AI-optimized communication fabric that extends the Cerebras Swarm on-chip fabric off-chip. SwarmX is designed to enable Cerebras to connect up to 163 million AI-optimized cores across up to 192 CS-2s, working in concert to train a single neural network.

- Selectable Sparsity enables users to select the level of weight sparsity in their model and provides a direct reduction in FLOPs and time-to-solution. Weight sparsity is an exciting area of ML research that has been challenging to study because it is extremely inefficient on graphics processing units. Selectable Sparsity enables the CS-2 to accelerate work and use every available type of sparsity, including unstructured and dynamic weight sparsity, to produce answers in less time.
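The headline figures in the list above can be sanity-checked with some simple arithmetic. The sketch below is illustrative only: it uses the 850,000 cores per WSE-2 quoted elsewhere in this article, assumes decimal petabytes, and models sparsity with an idealized assumption that FLOPs fall in direct proportion to the fraction of weights removed. The function names are hypothetical, and the bytes-per-parameter ratio is a derived number, not a storage format stated by Cerebras.

```python
# Back-of-envelope check of the scaling figures quoted in this article.
CORES_PER_CS2 = 850_000                      # AI-optimized cores per WSE-2
MAX_CS2_SYSTEMS = 192                        # largest cluster size quoted
MEMORYX_BYTES = 2_400_000_000_000_000        # 2.4 PB, assuming decimal petabytes
MAX_PARAMETERS = 120_000_000_000_000         # 120 trillion parameters


def total_cores(systems: int, cores_per_system: int = CORES_PER_CS2) -> int:
    """Total cores across a cluster of identical systems."""
    return systems * cores_per_system


def bytes_per_parameter(memory_bytes: int, parameters: int) -> float:
    """Memory available per model parameter (a derived ratio only)."""
    return memory_bytes / parameters


def sparse_flops(dense_flops: float, weight_sparsity: float) -> float:
    """Idealized FLOP count: assumes work scales with the nonzero-weight fraction."""
    if not 0.0 <= weight_sparsity <= 1.0:
        raise ValueError("weight_sparsity must be between 0 and 1")
    return dense_flops * (1.0 - weight_sparsity)


if __name__ == "__main__":
    print(f"{total_cores(MAX_CS2_SYSTEMS):,} cores")   # consistent with ~163 million
    print(bytes_per_parameter(MEMORYX_BYTES, MAX_PARAMETERS), "bytes per parameter")
    print(f"{sparse_flops(1e12, 0.9):.3g} FLOPs at 90% weight sparsity")
```

Under these assumptions, 192 systems work out to 163.2 million cores, in line with the "163 million" figure, and 2.4 PB spread over 120 trillion parameters leaves about 20 bytes per parameter. How that budget is actually divided between weights and any other per-parameter state is not described in the source.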

“Today, Cerebras moved the industry forward by increasing the size of the largest networks possible by 100 times,” said Andrew Feldman, CEO and co-founder of Cerebras. “Larger networks, such as GPT-3, have already transformed the natural language processing (NLP) landscape, making possible what was previously unimaginable. The industry is moving past 1 trillion parameter models, and we are extending that boundary by two orders of magnitude, enabling brain-scale neural networks with 120 trillion parameters.”

Cerebras unveils 2nd-gen, 7nm Wafer Scale Engine chip

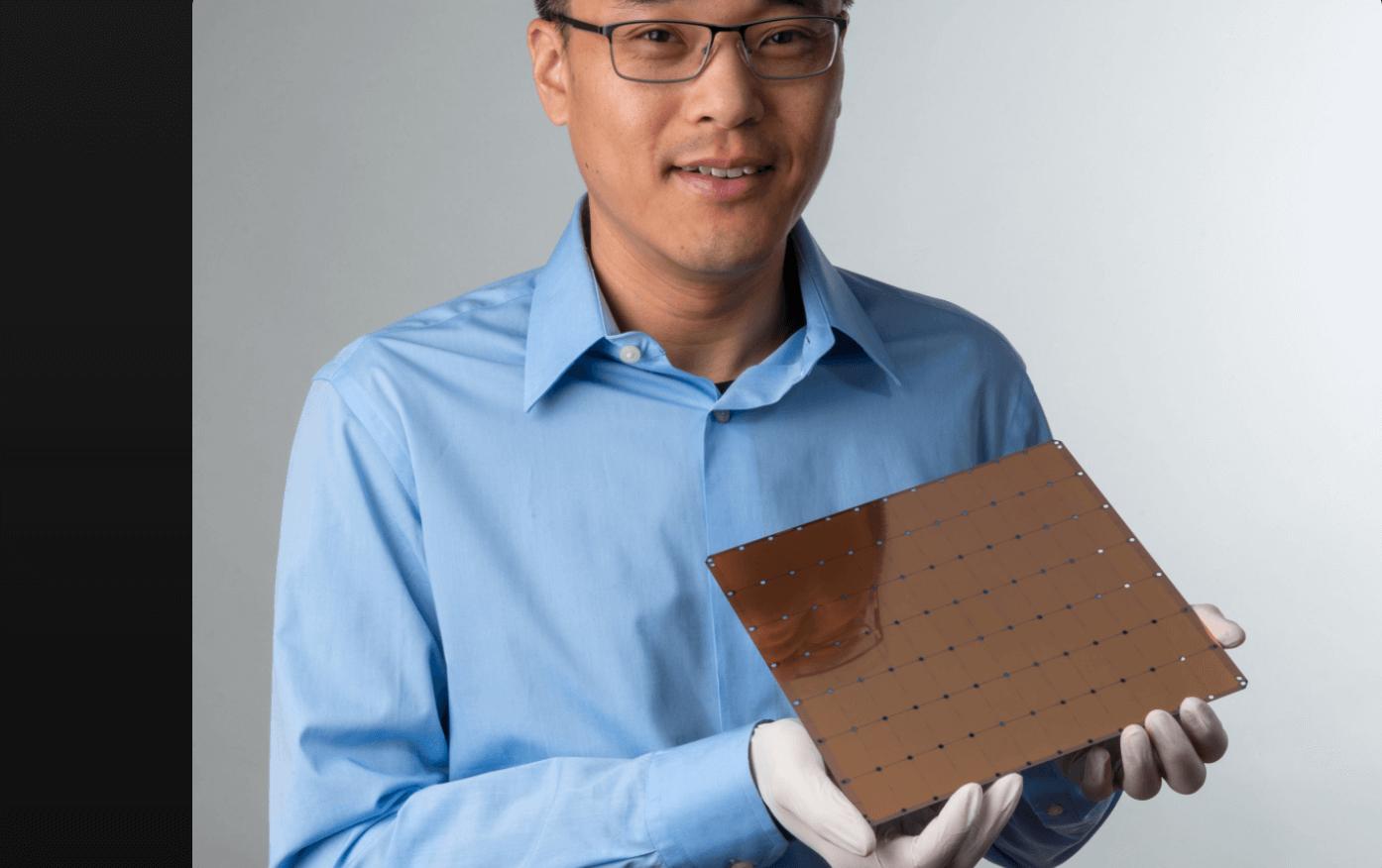

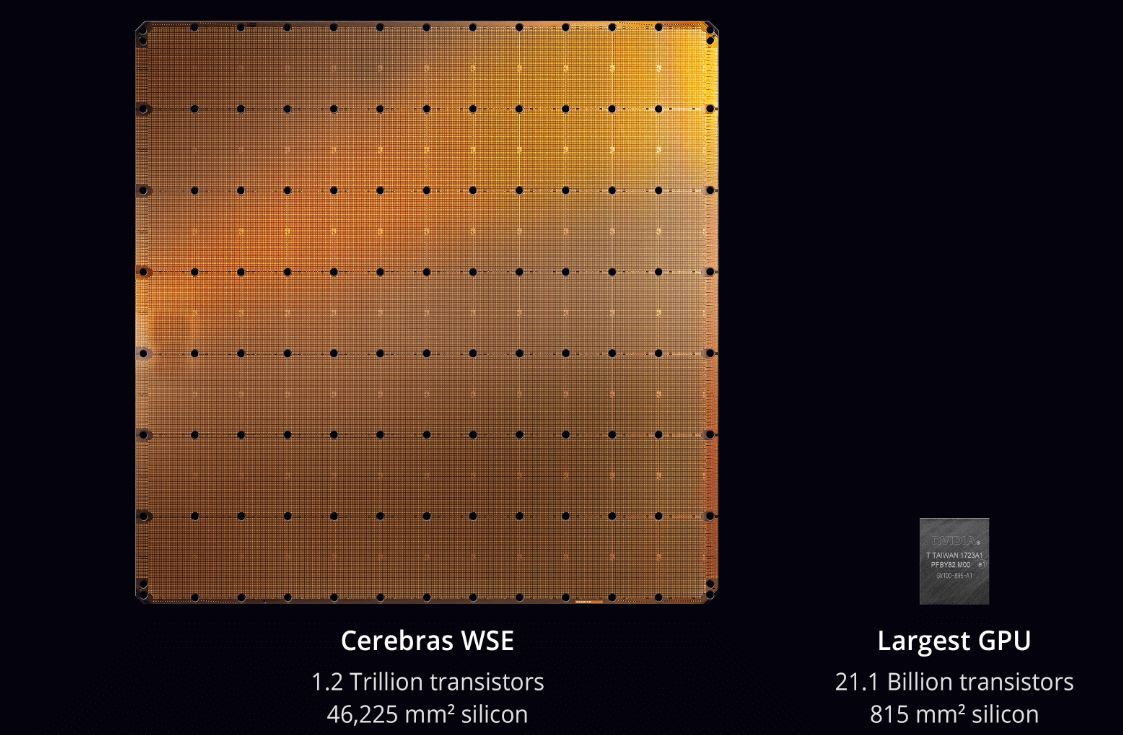

Cerebras Systems introduced its Wafer Scale Engine 2 (WSE-2) AI processor, boasting 2.6 trillion transistors and 850,000 AI-optimized cores.

The wafer-sized processor, which is manufactured by TSMC on its 7nm node, more than doubles every performance characteristic of the first-generation WSE: transistor count, core count, memory, memory bandwidth and fabric bandwidth.

“Less than two years ago, Cerebras revolutionized the industry with the introduction of WSE, the world’s first wafer scale processor,” said Dhiraj Mallik, Vice President Hardware Engineering, Cerebras Systems. “In AI compute, big chips are king, as they process information more quickly, producing answers in less time – and time is the enemy of progress in AI. The WSE-2 solves this major challenge as the industry’s fastest and largest AI processor ever made.”

The processor powers the Cerebras CS-2 system, which the company says delivers hundreds or thousands of times more performance than legacy alternatives, replacing clusters of hundreds or thousands of graphics processing units (GPUs) that consume dozens of racks, use hundreds of kilowatts of power, and take months to configure and program. The CS-2 fits in one-third of a standard data center rack.

Early deployment sites for the first-generation Cerebras WSE and CS-1 included Argonne National Laboratory; Lawrence Livermore National Laboratory; Pittsburgh Supercomputing Center (PSC), for its groundbreaking Neocortex AI supercomputer; EPCC, the supercomputing centre at the University of Edinburgh; pharmaceutical leader GlaxoSmithKline; and Tokyo Electron Devices, among others.

“At GSK we are applying machine learning to make better predictions in drug discovery, so we are amassing data – faster than ever before – to help better understand disease and increase success rates,” said Kim Branson, SVP, AI/ML, GlaxoSmithKline. “Last year we generated more data in three months than in our entire 300-year history. With the Cerebras CS-1, we have been able to increase the complexity of the encoder models that we can generate, while decreasing their training time by 80x. We eagerly await the delivery of the CS-2 with its improved capabilities so we can further accelerate our AI efforts and, ultimately, help more patients.”

“As an early customer of Cerebras solutions, we have experienced performance gains that have greatly accelerated our scientific and medical AI research,” said Rick Stevens, Argonne National Laboratory Associate Laboratory Director for Computing, Environment and Life Sciences. “The CS-1 allowed us to reduce the experiment turnaround time on our cancer prediction models by 300x over initial estimates, ultimately enabling us to explore questions that previously would have taken years, in mere months. We look forward to seeing what the CS-2 will be able to do with more than double that performance.”