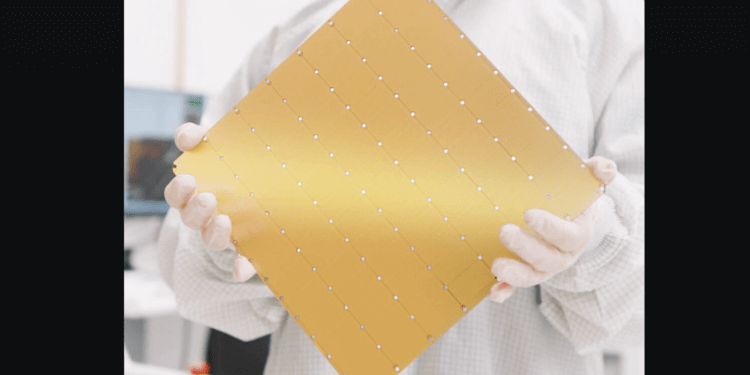

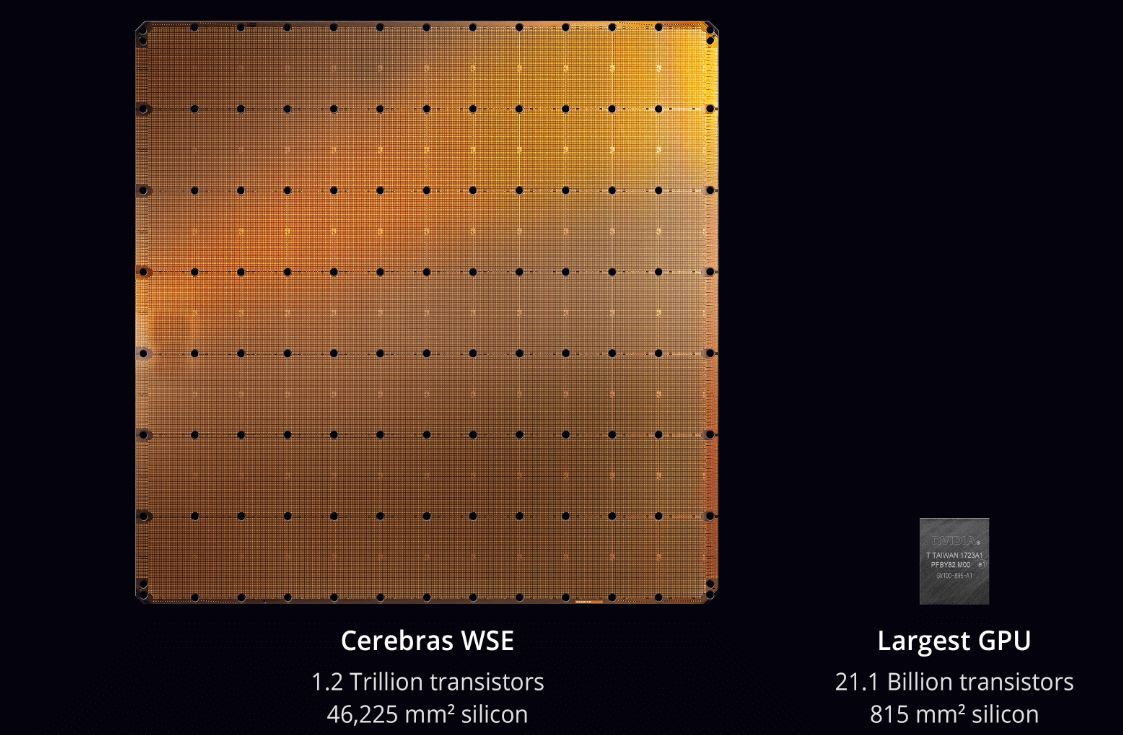

Cerebras Systems has announced six new AI inference datacenters across North America and Europe, significantly expanding its capacity for high-speed AI inference. Powered by Cerebras CS-3 systems, which are built around the Wafer-Scale Engine, these facilities will deliver more than 40 million tokens per second of combined capacity. Cerebras says this will make it the largest dedicated AI inference cloud provider. The Oklahoma City and Montreal datacenters will be owned and operated exclusively by Cerebras, while the remaining locations will be operated jointly with strategic partner G42. With 85% of the capacity located in the U.S., the expansion strengthens domestic AI infrastructure while serving enterprises, governments, and developers worldwide.

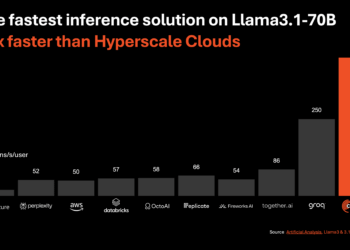

The expansion responds to growing demand for Cerebras’ AI inference solutions, with customers such as Mistral AI, Perplexity, Hugging Face, and AlphaSense adopting its platform for ultra-fast model inference. The Oklahoma City Scale Datacenter, launching in June 2025, will house more than 300 CS-3 systems in a tornado- and seismically shielded facility with custom water cooling for large-scale AI workloads. The Enovum Montreal datacenter, set to go live in July 2025, will bring Cerebras-powered AI inference to the Canadian tech ecosystem for the first time, at speeds Cerebras says are 10x faster than leading GPUs. Additional facilities in the Midwest, the Eastern U.S., and Europe are scheduled to come online by Q4 2025, further strengthening what Cerebras describes as its leading position in AI inference.

Key Points:

• Six new Cerebras AI inference datacenters launching across North America and Europe in 2025.

• More than 40 million tokens per second of capacity, which Cerebras says makes it the largest dedicated AI inference cloud.

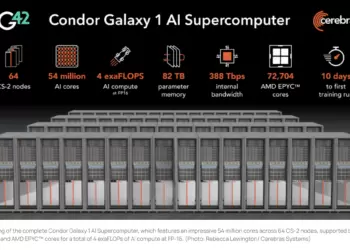

• Facilities in Oklahoma City and Montreal owned and operated exclusively by Cerebras, with the others co-operated with G42.

• Major AI customers, including Mistral AI, Perplexity, Hugging Face, and AlphaSense, use Cerebras for ultra-fast inference.

• Oklahoma City Scale Datacenter to house 300+ CS-3 systems in a Level 3+ secure, high-efficiency facility.

• The Enovum Montreal facility will accelerate AI adoption in Canada, offering inference that Cerebras says is 10x faster than leading GPUs.

“Cerebras is turbocharging the future of U.S. AI leadership with unmatched performance, scale, and efficiency. These new global datacenters will serve as the backbone for the next wave of AI innovation,” said Dhiraj Mallick, COO of Cerebras Systems.