At last week’s Optica Photonic-Enabled Cloud Computing (PECC) Summit, Peter Winzer, co-founder of Nubis Communications and now Senior Vice President at Ciena, outlined a practical roadmap for scaling AI infrastructure through co-packaged copper/optical (CPC) sockets. Winzer argued that massive AI systems—stretching to hundreds of thousands of accelerators—can no longer rely on traditional switch-based architectures alone. Instead, he proposed a hybrid electrical-optical topology optimized for low power, high density, and operational simplicity.

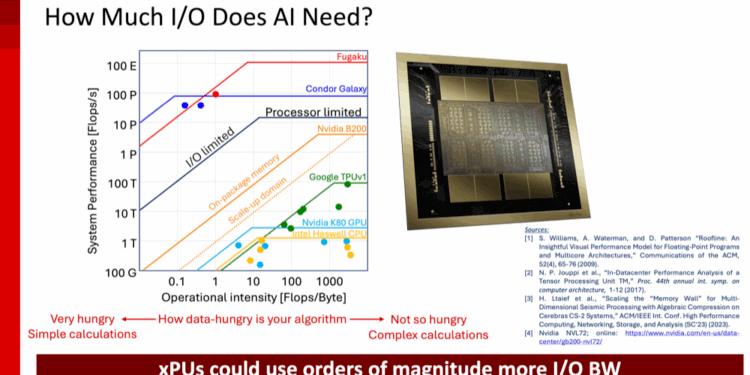

Winzer began with the fundamental question: “How much I/O does AI really need?” Using systems such as NVIDIA’s NVL72 and Cerebras’ Condor Galaxy as examples, he illustrated that today’s AI processors (xPUs) already demand terabits per second of I/O bandwidth and that this need will grow by orders of magnitude. To interconnect 500 or more xPUs within a rack or small cluster, the fabric must deliver tens of terabits per second to each device without exceeding the rack’s power and cooling limits.
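The scale of that requirement can be sketched with simple arithmetic. This is a back-of-envelope estimate under stated assumptions, not a model from the talk: `NUM_XPUS` and `TBPS_PER_XPU` are hypothetical inputs (500 devices at 20 Tbps each, one value within "tens of terabits"), the energy-per-bit points are illustrative, and the only physics used is that moving 1 Tbps at 1 pJ/bit costs 1 W.

```python
# Back-of-envelope xPU I/O budget. NUM_XPUS and TBPS_PER_XPU are hypothetical
# inputs: the talk says "500 or more" xPUs and "tens of terabits" per device.
NUM_XPUS = 500
TBPS_PER_XPU = 20.0


def io_power_watts(tbps: float, pj_per_bit: float) -> float:
    """I/O power in watts: 1 Tbps at 1 pJ/bit is 1e12 b/s * 1e-12 J/b = 1 W."""
    return tbps * pj_per_bit


aggregate_pbps = NUM_XPUS * TBPS_PER_XPU / 1000  # Tbps -> Pbps
print(f"Aggregate xPU I/O: {aggregate_pbps:.1f} Pbps")

# Illustrative energy-per-bit points (assumed values, not from the talk).
for pj in (2.0, 5.0, 15.0):
    per_xpu_w = io_power_watts(TBPS_PER_XPU, pj)
    domain_kw = per_xpu_w * NUM_XPUS / 1000
    print(f"{pj:4.1f} pJ/bit: {per_xpu_w:5.0f} W per xPU, {domain_kw:6.1f} kW in total")
```

Under these assumed inputs, every additional pJ/bit costs 20 W per xPU and 10 kW across the 500-device domain, which illustrates why energy per bit, rather than raw bandwidth, tends to bound the design.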

Key insights from the presentation:

• Mixed-media networks – AI clusters require a mix of passive and active copper and of linear and retimed optics, depending on distance and scale. “Use copper when you can, optics when you must,” Winzer said.

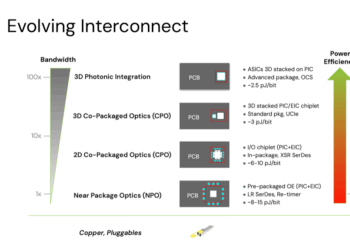

• Power efficiency – Avoiding retimer DSPs is critical. Ciena modeling shows that retimed 1.6T optics can consume 30 W per port, while linear 1.6T optics draw about 10 W and active copper about 2.5 W. At a scale of 600,000 GPUs, linear architectures could cut network power from 1.7 GW to 600 MW, saving an estimated $3.8 billion over five years.

• I/O densification – Achieving SerDes-level density (≈1.5 Tbps/mm) eliminates long electrical traces and, with them, the need for retimers. High-density linear I/O enables compact, low-latency system designs.

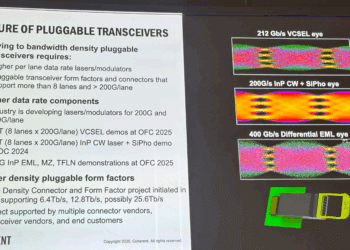

• Co-packaged copper/optics (CPC) – Ciena’s 6.4 Tbps removable CPC connector combines electrical and optical links in one socket. It is retimer-free, offers 5 pJ/bit efficiency, and works with both copper and optical media, creating a unified interface for AI systems.

• High-density front-panel pluggables – Using the same building blocks, Ciena’s 1 RU design supports up to roughly 400 Tbps (1024 × 400 Gbps = 409.6 Tbps), four times the density of today’s OSFP modules.
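The figures in the list above can be cross-checked with the same unit identity (1 W per Tbps equals 1 pJ/bit). This is a rough consistency sketch, not Ciena's model: the five-year estimate assumes, purely for illustration, that the quoted network power is drawn continuously, ignores cooling overhead, and treats the $3.8 billion as electricity savings only, so the implied price per kWh is an order-of-magnitude check rather than a reported number.

```python
# Per-port power figures for 1.6T links, as quoted from Ciena's modeling.
PORT_TBPS = 1.6
PORT_WATTS = {
    "retimed optics": 30.0,
    "linear optics": 10.0,
    "active copper": 2.5,
}


def pj_per_bit(watts: float, tbps: float) -> float:
    """Energy per bit in picojoules; 1 W spread over 1 Tbps is 1 pJ/bit."""
    return watts / tbps


for medium, watts in PORT_WATTS.items():
    print(f"{medium:<15} {watts:5.1f} W/port -> {pj_per_bit(watts, PORT_TBPS):6.2f} pJ/bit")

# CPC socket: 6.4 Tbps at the quoted 5 pJ/bit (Tbps * pJ/bit = W).
cpc_watts = 6.4 * 5.0
print(f"CPC socket at full load: {cpc_watts:.1f} W")

# 1 RU front panel: 1024 x 400 Gbps.
front_panel_tbps = 1024 * 400 / 1000
print(f"1 RU front panel: {front_panel_tbps:.1f} Tbps")

# Cluster network power, 1.7 GW -> 600 MW, kept in MW so the integers stay exact.
# Assumption (not from the talk): constant draw for five years, no cooling
# overhead, and the savings counted as electricity only.
HOURS_PER_YEAR = 8760
saved_mw = 1700 - 600
saved_kwh = saved_mw * 1000 * HOURS_PER_YEAR * 5
implied_usd_per_kwh = 3.8e9 / saved_kwh
print(f"Network power reduction: {saved_mw / 1700:.0%}")
print(f"Energy saved: {saved_kwh / 1e9:.1f} TWh; implied price: ${implied_usd_per_kwh:.3f}/kWh")
```

The conversion puts retimed optics at 18.75 pJ/bit, linear optics at 6.25 pJ/bit, and active copper at about 1.56 pJ/bit, and a fully loaded CPC socket at 32 W. The quoted savings correspond to about 48 TWh over five years, or roughly 7.9 cents per kWh; if the underlying model also counted cooling overhead, the implied price would be lower.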

Winzer emphasized that AI scale-up networks must evolve beyond rigid topologies and proprietary packaging. “Operationalizing AI systems means designing interconnects that are power-efficient, serviceable, and multi-vendor by default,” he said. “CPC sockets give us a common physical interface that unifies copper and optics under one roof.”

Now part of Ciena, the Nubis team continues to advance low-power optical interconnects for large-scale AI and data-center fabrics. The hybrid CPC architecture bridges near-term manufacturability and long-term scalability, paving the way for clusters that can grow from a few racks to exascale without rearchitecting the network core.

www.ciena.com