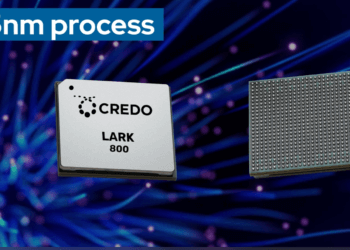

Credo Technology Group has launched Weaver, a memory fanout gearbox engineered to break through the performance barriers of AI inference workloads. Weaver is the first product in Credo’s new OmniConnect family, addressing the growing need for scalable, energy-efficient memory architectures in data centers. The solution targets xPUs and AI accelerators constrained by traditional LPDDR5X and GDDR memory systems, delivering up to 6.4TB of memory capacity and 16TB/s of bandwidth using advanced 112G very short reach (VSR) SerDes technology.

AI inference workloads are increasingly limited by memory density and throughput rather than compute capability. Weaver’s fanout gearbox architecture delivers 10x higher I/O density than conventional designs, offering high performance at lower cost and with better energy efficiency than HBM-based systems. It also supports flexible DRAM packaging and late binding, enabling AI system builders to tune configurations as model requirements evolve. Designed for long-term compatibility, Weaver supports migration to next-generation memory protocols and includes telemetry and diagnostics for enhanced reliability.
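To make the memory-bound claim concrete, here is a rough back-of-envelope sketch in Python. It uses only the "up to" capacity and bandwidth figures quoted above. The model size, the one-byte-per-parameter precision, and the simplification that every decoded token streams all weights once are illustrative assumptions, not figures from Credo.

```python
# Back-of-envelope estimate only; not a Credo-published performance model.
TB = 1e12  # decimal terabyte, in bytes

WEAVER_CAPACITY_BYTES = 6.4 * TB  # "up to" capacity quoted in the article
WEAVER_BANDWIDTH_BPS = 16 * TB    # "up to" bandwidth quoted in the article, bytes/s


def full_sweep_seconds(capacity_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Time to read every byte of memory once at peak bandwidth."""
    return capacity_bytes / bandwidth_bytes_per_s


def decode_tokens_per_second(weight_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Ceiling on batch-1 decode rate if each token streams all weights once."""
    return bandwidth_bytes_per_s / weight_bytes


# Hypothetical model: 1 trillion parameters at 1 byte each (assumption).
hypothetical_weight_bytes = 1e12 * 1

sweep = full_sweep_seconds(WEAVER_CAPACITY_BYTES, WEAVER_BANDWIDTH_BPS)
rate = decode_tokens_per_second(hypothetical_weight_bytes, WEAVER_BANDWIDTH_BPS)
print(f"full sweep: {sweep:.2f} s, decode ceiling: {rate:.0f} tokens/s")
```

Under these assumptions the ceiling depends only on bandwidth and model size, not on compute, which is why adding capacity and bandwidth at the memory interface matters for inference. Real systems batch requests and cache intermediate state, so actual throughput will differ.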

Weaver is available for design-in now, with full product availability expected in the second half of 2026. The OmniConnect 112G VSR interface enables seamless integration into high-bandwidth AI infrastructure. Credo will detail the new technology during a November 10 webinar titled “Breaking the Memory Wall: Scaling AI Inference with Innovative Memory Fanout Architecture.”

- Weaver enables up to 6.4TB of LPDDR5X memory and 16TB/s bandwidth

- Leverages 112G VSR SerDes for up to 10x higher I/O density

- Overcomes HBM cost, power, and supply constraints

- Supports flexible DRAM packaging and telemetry for reliability

- General availability expected in 2H 2026

“Weaver is designed to deliver the flexibility and scalability required for future AI inference systems,” said Don Barnetson, Senior Vice President of Product at Credo. “This innovation empowers our partners to optimize memory provisioning, reduce costs, and accelerate deployment of advanced AI workloads.”

🌐 Analysis: Credo’s Weaver marks a significant expansion from its Ethernet and SerDes roots into the AI memory subsystem—one of the biggest bottlenecks in inference scaling. As hyperscalers push toward ever-larger models, optimizing DRAM-based architectures could offer a lower-cost and more power-efficient alternative to HBM. Credo’s move puts it in competition with emerging players like Eliyan and Astera Labs that are also tackling AI memory bandwidth challenges. If OmniConnect delivers as promised, Credo could become a central player in the next generation of AI system design.
