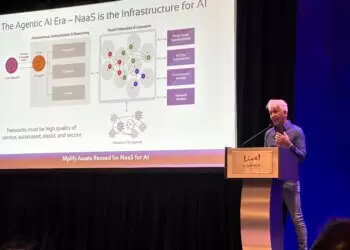

The next phase of the cloud will be defined not by where compute happens, but by how networks dynamically interconnect AI regions, GPUs, and data fabrics at terabit scale.

Speaking at Mplify’s Global Network Executive (GNE) Conference in Dallas, Ethan Blodgett, Senior Vice President at Lumen Technologies, outlined the company’s vision for what it calls Cloud 2.0 — a transformation of the cloud and enterprise core built around five new capabilities designed to support the exponential demands of AI workloads.

“Legacy networks were built for apps and employees, not for AI-heavy workloads,” said Blodgett. “Cloud 2.0 represents a fundamental reinvention of the network fabric — one that scales from 400G to 1.6T, connects distributed AI regions, and allows enterprises to design and control their networks without owning infrastructure.”

Five Foundational Capabilities of Cloud 2.0

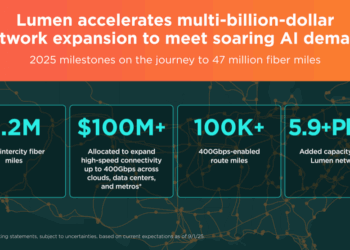

1. Extreme Bandwidth and Low Latency – Scaling links from 400G toward 1.6T to keep GPUs fully utilized and minimize idle time.

2. Data Center Interconnect (DCI) as a Foundational Element – Powering a multi-cloud fabric that links AI training, inference, and SaaS environments.

3. Expansion into AI Corridors – Extending fiber and power infrastructure into new “AI regions” beyond traditional metro hubs.

4. Distributed On-Ramps – Programmable, high-bandwidth access points for AI and cloud traffic, enabling on-demand data movement.

5. Programmable, API-First Networks – Delivering flexible, on-demand connectivity through marketplace APIs.

From Cloud 1.0 to Cloud 2.0: A Redrawn Network Map

Blodgett contrasted Cloud 1.0’s hub-and-spoke topology—centered on Tier-1 metros and gigabyte-scale workloads—with Cloud 2.0’s any-to-any programmable fabric supporting exabyte-scale transfers between “AI factories.”
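To give a sense of scale for the link speeds and exabyte-scale transfers mentioned above, here is a rough back-of-the-envelope sketch. It is not from Blodgett's talk. It assumes a single link running at full line rate with no protocol overhead, and a decimal exabyte (10^18 bytes); real transfers would be striped across many links and would see lower effective throughput.

```python
# Idealized transfer-time arithmetic; every figure here is an illustrative assumption.
EXABYTE_BITS = 8 * 10**18  # 1 EB = 10^18 bytes (decimal), 8 bits per byte

def transfer_days(payload_bits: int, link_bps: int) -> float:
    """Days needed to move payload_bits over one link at full line rate."""
    seconds = payload_bits / link_bps
    return seconds / 86_400  # seconds per day

# Nominal line rates in bits per second (400 Gb/s and 1.6 Tb/s).
LINK_RATES_BPS = {"400G": 400 * 10**9, "1.6T": 1_600 * 10**9}

for name, rate in LINK_RATES_BPS.items():
    print(f"{name}: {transfer_days(EXABYTE_BITS, rate):.1f} days per exabyte")
# 400G: 231.5 days per exabyte
# 1.6T: 57.9 days per exabyte
```

Under these assumptions, moving from 400G to 1.6T cuts the single-link time by a factor of four, but an exabyte still takes weeks on one link, which is why such transfers would depend on many parallel links.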

This new model replaces static point-to-point circuits with distributed DCI fabrics that include virtual meet-me rooms, multi-cloud app fabrics, and private AI connectivity linking hyperscalers, data-center operators (QTS, Equinix, Digital Realty), and enterprise AI nodes.

“We’re seeing densification in existing metros like Ashburn, Dallas, and Phoenix,” Blodgett noted. “But the next wave will bring diversification — AI corridors expanding into regions with available power, water, and land.”

Lumen projects a roughly tenfold expansion in global data-center footprint by 2030, from about 120 million square feet today to more than one billion square feet, driven by AI compute, data mobility, and interconnect demand.

A New Role for Enterprises: Design Without Owning

Blodgett predicted the end of the “DIY era” for enterprise networks. In Cloud 2.0, companies will orchestrate multi-cloud and AI connectivity through a single programmable platform, without managing any physical assets.
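As a purely illustrative sketch of what "design without owning" could look like from the enterprise side, the snippet below models an on-demand connectivity order as structured data. The class, field names, and endpoint labels are all hypothetical; none of them come from Lumen or from any real marketplace API.

```python
import json
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class ConnectionRequest:
    """Hypothetical order for an on-demand circuit; not a real provider schema."""
    a_end: str            # made-up label for the enterprise site
    z_end: str            # made-up label for the AI region or cloud on-ramp
    bandwidth_gbps: int   # requested capacity in Gb/s
    duration_hours: int   # how long the circuit should stay up

    def validate(self) -> None:
        """Reject requests that are obviously malformed."""
        if self.a_end == self.z_end:
            raise ValueError("endpoints must differ")
        if self.bandwidth_gbps <= 0 or self.duration_hours <= 0:
            raise ValueError("bandwidth and duration must be positive")

def to_payload(req: ConnectionRequest) -> str:
    """Serialize a validated request as the JSON body an API call might carry."""
    req.validate()
    return json.dumps(asdict(req), sort_keys=True)

request = ConnectionRequest("enterprise-dc-dallas", "ai-region-phoenix", 400, 72)
print(to_payload(request))
```

In this model the enterprise describes endpoints, capacity, and duration, while the provider's platform would be responsible for mapping that intent onto physical fiber and optics.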

“The programmable fabric we’re building is the new Internet,” said Blodgett. “Data-center interconnects and AI on-ramps will become the digital backbone of the AI economy.”

Analysis

Lumen’s Cloud 2.0 initiative positions the network as a strategic enabler of AI infrastructure, not a passive transport layer. The company estimates over $500 billion in network investment will be required this decade to match the pace of AI compute and power buildouts.

By combining high-capacity optical networks, distributed on-ramps, and API-driven programmability, Lumen aims to transform connectivity into an intelligent, revenue-generating service fabric — one capable of connecting the emerging “AI factories” of the 2030s.

About the Speaker

Ethan Blodgett serves as Senior Vice President at Lumen Technologies, where he leads global initiatives around next-generation cloud, fiber, and AI infrastructure. His team is driving the evolution of Lumen’s programmable network fabric and data-center interconnect strategy — core components of what the company defines as Cloud 2.0.