Celestial AI’s CTO Phil Winterbottom took the stage at IEEE Hot Chips 2025 at Stanford University to detail the company’s Photonic Fabric roadmap, expanding on concepts introduced earlier this month at the Hot Interconnects 2025 (HoTI25) conference. While the HoTI25 talk emphasized the launch of the Photonic Fabric Link (PFLink) and its first module and appliance, Winterbottom’s Hot Chips session dug deeper into scaling laws, packaging, and the thermal realities of 500W–900W GPUs.

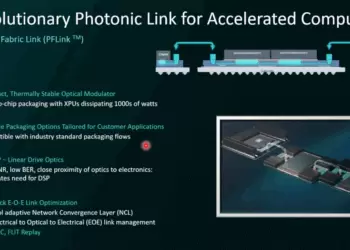

Celestial’s platform integrates silicon photonics directly into 2.5D interposers and bridges using TSMC CoWoS, enabling optical I/O within dies, between chiplets, and across packages. The company highlighted its choice of electro-absorption modulators (EAMs) over ring modulators, citing tolerance to >85 °C swings and resilience to rapid temperature excursions of up to 200 °C/s. Custom analog-only SerDes deliver ~2× better efficiency than DSP-based approaches, consuming ~2.4 pJ/bit for electronics and 0.7 pJ/bit for lasers. Winterbottom stressed how this approach avoids the “beachfront I/O” bottleneck, allowing optical I/O anywhere on the die.
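To put the energy figures in context, the following back-of-the-envelope sketch converts pJ/bit into watts. It assumes the electronics and laser figures simply add to give a total link energy, and it borrows the 7.2 Tbps per-module bandwidth cited later in this article for the HoTI25 module. Neither assumption comes from Celestial's presentation, and the function and constant names are illustrative.

```python
# Back-of-the-envelope link power from the energy-per-bit figures quoted above.
# Assumption (not from the talk): electronics and laser energy simply add.

ELECTRONICS_PJ_PER_BIT = 2.4   # approximate figure quoted for the SerDes electronics
LASER_PJ_PER_BIT = 0.7         # figure quoted for the lasers
MODULE_BANDWIDTH_TBPS = 7.2    # per-module bandwidth cited for the HoTI25 module


def link_power_watts(pj_per_bit: float, bandwidth_tbps: float) -> float:
    """Return power in watts.

    (pJ/bit * 1e-12 J/pJ) * (Tbit/s * 1e12 bit/Tbit) -> the exponents cancel,
    so watts = pJ/bit * Tbit/s.
    """
    return pj_per_bit * bandwidth_tbps


total_pj_per_bit = ELECTRONICS_PJ_PER_BIT + LASER_PJ_PER_BIT
module_power_w = link_power_watts(total_pj_per_bit, MODULE_BANDWIDTH_TBPS)

print(f"Total energy: {total_pj_per_bit:.1f} pJ/bit")
print(f"Estimated I/O power at {MODULE_BANDWIDTH_TBPS} Tbps: {module_power_w:.1f} W")
```

Under these assumptions the estimate works out to roughly 3.1 pJ/bit and about 22 W for 7.2 Tbps of optical I/O, which is small next to the 500W–900W GPU power envelopes discussed in the talk.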

Key new elements at Hot Chips included the Optical Multi-Chip Interconnect Bridge (OMIB), which embeds photonics into package bridges to support in-die optical connections, potentially dropping memory transactions directly into cache and reducing on-die travel latency. Winterbottom also described a photonic switch architecture that relocates I/O to the chip center, freeing the die perimeter for HBM and DDR PHYs. Celestial cited measured latencies of ~100 ns for NIC-to-NIC paths and <200 ns for chip-to-memory transactions. The company has completed four TSMC 5/4nm tapeouts of its electronic integrated circuits (EICs), demonstrating pre-FEC BER <1e-8 at -10 dBm OMA input with WDM.
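For readers less familiar with the units in the BER result, this short sketch converts the -10 dBm input level to linear power and shows what a BER bound of 1e-8 means in raw errors. The helper names and the one-terabit sample size are illustrative choices, not figures from the presentation.

```python
def dbm_to_milliwatts(dbm: float) -> float:
    """dBm is decibels relative to 1 mW, so P(mW) = 10 ** (dBm / 10)."""
    return 10 ** (dbm / 10)


def max_expected_bit_errors(ber_bound: float, bits_sent: float) -> float:
    """Upper bound on the expected number of pre-FEC bit errors."""
    return ber_bound * bits_sent


# Figures quoted in the article: -10 dBm OMA input, pre-FEC BER < 1e-8.
oma_mw = dbm_to_milliwatts(-10.0)

# Hypothetical sample size of one terabit, chosen only for illustration.
errors_per_terabit = max_expected_bit_errors(1e-8, 1e12)

print(f"-10 dBm = {oma_mw:.1f} mW ({oma_mw * 1000:.0f} microwatts)")
print(f"At BER < 1e-8: fewer than {errors_per_terabit:,.0f} raw errors per terabit")
```

In other words, the receiver is being driven with about 100 microwatts of optical modulation amplitude, and the link sees fewer than roughly 10,000 raw bit errors per terabit before forward error correction is applied.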

- Photonic Fabric Link integrates optics into interposers and bridges for in-die, chiplet, and package-to-package I/O

- Electro-absorption modulators chosen over rings for thermal stability and slew-rate tolerance in 500W+ GPUs

- Analog-only SerDes deliver ~2× power efficiency versus DSP-based designs

- OMIB packaging structure supports optical cache access and multi-die GPU scaling

- Latencies measured: ~100 ns NIC-to-NIC, <200 ns chip-to-memory

- Builds on HoTI25 debut of Photonic Fabric modules and appliance (7.2 Tbps per module, 115 Tbps per 2U system)
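The bandwidth and latency figures in the list above can be combined into two rough sanity checks. The sketch below is an inference from rounded public numbers, not something Celestial stated: it assumes the 2U system bandwidth is a simple multiple of the per-module bandwidth, and it applies the <200 ns chip-to-memory bound to a single module's full bandwidth. All names are illustrative.

```python
MODULE_TBPS = 7.2                   # per-module bandwidth from the HoTI25 debut
SYSTEM_TBPS = 115.0                 # per-2U-system bandwidth from the HoTI25 debut
CHIP_TO_MEMORY_LATENCY_NS = 200.0   # upper bound quoted at Hot Chips


def bytes_in_flight(bandwidth_tbps: float, latency_ns: float) -> float:
    """Bandwidth-delay product in bytes.

    Tbit/s * ns = 1e12 * 1e-9 bits = 1e3 bits, then divide by 8 for bytes.
    """
    bits = bandwidth_tbps * latency_ns * 1e3
    return bits / 8


# Assumption: system bandwidth is just N modules' worth of bandwidth.
implied_modules = SYSTEM_TBPS / MODULE_TBPS

# Assumption: the latency bound applies at one module's full bandwidth.
in_flight = bytes_in_flight(MODULE_TBPS, CHIP_TO_MEMORY_LATENCY_NS)

print(f"Implied modules' worth of bandwidth per 2U: {implied_modules:.1f}")
print(f"Data in flight at {MODULE_TBPS} Tbps over 200 ns: {in_flight / 1000:.0f} kB")
```

The ratio comes out at about 16, and the bandwidth-delay product is at most about 180 kB per module, which gives a sense of how much buffering or outstanding-request capacity a processor would need to keep such a link busy.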

“Our roadmap enables co-packaged optics today and evolves to full in-die optical I/O for tomorrow’s largest processors,” said Phil Winterbottom, CTO of Celestial AI.

Co-packaged optics matter in data centers because bandwidth demand keeps rising, and packaging optics alongside electronics can significantly reduce both energy consumption and space requirements.

In-die optical I/O goes a step further: it aims to bring optical connections into the processor silicon itself, reducing reliance on electrical I/O and the energy it costs to drive signals to the die edge.

“In-die optical I/O has the potential to truly revolutionize the microprocessor industry, enabling processors that are faster, more efficient, and less energy-intensive than ever before,” said Winterbottom. “We’re excited to be at the forefront of this technology and look forward to seeing its impact across various industries over the coming years.”

As part of its adoption roadmap, the company intends to work closely with industry partners and customers to ensure the technology meets their requirements, and to draw on their expertise in the transition to optically connected processors.

The roadmap treats efficiency and performance as inseparable goals, pointing toward more sustainable data center operations.

🌐 Analysis: By placing optical I/O inside the die and into package bridges, Celestial is tackling scaling problems that conventional co-packaged optics cannot. The Hot Chips presentation highlights how thermal dynamics, cache-level integration, and multi-die GPUs demand a different architectural approach. Together with the earlier HoTI25 debut of its Photonic Fabric modules, Celestial is positioning itself against peers like Ayar Labs and Lightmatter with a packaging-driven, analog-centric model that could reshape AI memory and interconnect design.

Readers can view our earlier coverage of Celestial AI’s Hot Interconnects 2025 presentation for additional context: https://convergedigest.com/hoti25-celestial-ais-photonic-fabric/
