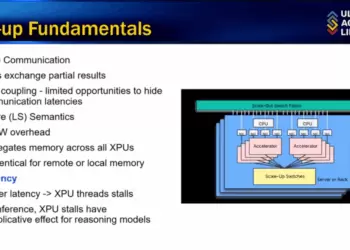

AI supercomputers are hitting a wall, not in compute but in moving data fast enough. At today’s Hot Interconnects online event, Lightmatter CEO Nick Harris argued that scaling AI clusters into “million-XPU brains” requires a radically new interconnect architecture. In his view, copper and pluggable optics are running out of headroom, leaving deeper photonic integration as the only path forward.

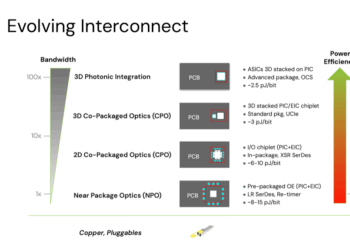

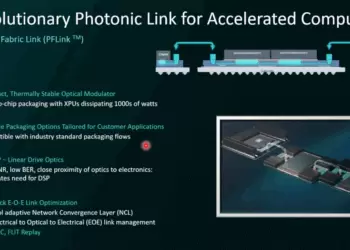

Harris outlined the limits of today’s approaches: copper ties together only about 100 chips, Gen 1 pluggables consume 17–20 pJ/bit, and Gen 2 near-package optics lower that to 8–15 pJ/bit. Gen 3 2D co-packaged optics (CPO) pushes further to 6–10 pJ/bit but is still constrained by shoreline, the limited chip-perimeter length available for I/O. Lightmatter’s Gen 4 architecture replaces the shoreline with a 3D photonic interposer, stacking ASICs, switches, and GPUs directly on photonics. Demonstrations have already reached as low as 3 pJ/bit, below the long-standing 5 pJ/bit industry target.
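To put the pJ/bit figures in perspective, energy per bit converts to link power by multiplying by the bit rate. The sketch below is a back-of-the-envelope illustration, not Lightmatter's own accounting: the 800 Gbps rate is an assumed example value, the function name is hypothetical, and the result covers only whatever each quoted pJ/bit figure includes.

```python
# Back-of-the-envelope conversion from energy per bit to link power.
# The pJ/bit ranges are the figures quoted in the article; the link rate
# is an assumed example value, not tied to any specific product.

ENERGY_PJ_PER_BIT = {
    "Gen 1 pluggables": (17.0, 20.0),
    "Gen 2 near-package optics": (8.0, 15.0),
    "Gen 3 2D CPO": (6.0, 10.0),
    "Gen 4 3D interposer (lowest demonstrated)": (3.0, 3.0),
}

ASSUMED_LINK_GBPS = 800.0  # assumption for illustration only


def link_power_watts(pj_per_bit: float, gbps: float) -> float:
    """Power (W) = energy per bit (J/bit) * bit rate (bit/s)."""
    return (pj_per_bit * 1e-12) * (gbps * 1e9)


for name, (low, high) in ENERGY_PJ_PER_BIT.items():
    p_low = link_power_watts(low, ASSUMED_LINK_GBPS)
    p_high = link_power_watts(high, ASSUMED_LINK_GBPS)
    print(f"{name}: {p_low:.1f} to {p_high:.1f} W at {ASSUMED_LINK_GBPS:.0f} Gbps")
```

At the assumed rate, 20 pJ/bit works out to 16 W per link and 3 pJ/bit to 2.4 W, a gap that multiplies across every link in a large cluster.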

Lightmatter presented new results showing what it described as the first 16-wavelength bi-directional optical link, delivering 800 Gbps per fiber with bit error rates below 10^-10 without forward error correction. The link remained stable over a kilometer of fiber, six connectors, and polarization scrambling. At its core are microring modulators only 15 µm in diameter, which are far smaller and need less drive power than Mach-Zehnder and electro-absorption designs. Lightmatter claims these rings operate stably from 0°C to 105°C while supporting both O- and C-band transmission.
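Two quick calculations help read these link numbers. The sketch below assumes, purely for illustration, that the 800 Gbps is split evenly across all 16 wavelengths (the article does not say how the rate is divided between directions), and it treats the 10^-10 bound as the actual error rate to get a worst-case count. The function names are hypothetical.

```python
# Rough sanity checks on the reported link figures.
# Assumption: the per-fiber rate is shared evenly by all wavelengths.


def per_wavelength_gbps(total_gbps: float, wavelengths: int) -> float:
    """Data rate carried by each wavelength under an even split."""
    return total_gbps / wavelengths


def worst_case_errors_per_second(gbps: float, ber_bound: float) -> float:
    """Upper bound on raw bit errors per second at a given BER bound."""
    return gbps * 1e9 * ber_bound


rate = per_wavelength_gbps(800.0, 16)
errors = worst_case_errors_per_second(800.0, 1e-10)
print(f"{rate:.0f} Gbps per wavelength")
print(f"at most about {errors:.0f} raw bit errors per second")
```

Under those assumptions each wavelength carries 50 Gbps, and the link would see at most roughly 80 raw bit errors per second with no forward error correction applied.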

- Copper-based scale-up hits ~100 chips; photonics needed for million-XPU clusters

- Gen 4 3D photonic interposer achieves <5 pJ/bit; roadmap to 3 pJ/bit

- Demonstrated: 800 Gbps per fiber, 16λ bi-directional, BER <10^-10, 1 km reach

- Microring modulators: 10–1000x smaller than rivals, ultra-low drive power

- Standards support up to 224 Gbps per lane; 800 Gbps and 1.6 Tbps per fiber shipping today

“We’re already showing stable operation at less than 5 pJ/bit across kilometers of fiber, which is a dream target for the industry,” said Nick Harris, CEO of Lightmatter.

🌐 Analysis: Lightmatter’s 3D stacking approach bypasses the shoreline bottleneck that constrains 2D CPO. By embedding optics beneath compute, the company addresses both bandwidth and radix scaling simultaneously—critical for trillion-parameter AI workloads. Competitors such as Ayar Labs and OpenLight are pursuing different paths in optical I/O, but Lightmatter’s microring-based density and 3D photonic interposer integration give it a distinctive position. With hyperscalers rushing to deploy AI factories at gigawatt scale, adoption of ultra-efficient photonic fabrics could determine which architectures dominate in the late 2020s.