AI workloads are pushing the limits of copper and conventional optics, and Celestial AI believes its Photonic Fabric is the key to breaking through. At this week’s IEEE Hot Interconnects online conference, senior director Ravi Mahatme detailed how the company’s technology embeds optical I/O in the middle of the silicon die rather than along its edge, bypassing die-edge ("shoreline") constraints to deliver nanosecond-class latency, 2.4 pJ/bit efficiency, and terabits per second of bandwidth per link. The approach, he argued, enables the scale-up architectures and unified memory access that are critical for accelerated computing.

Celestial AI’s Photonic Fabric is built as a platform spanning chiplets, IP blocks, NICs, and system-level modules. The Photonic Fabric Link (PFLink) provides compact, thermally stable optical modulators integrated into advanced 5 nm/4 nm CMOS packaging. Unlike traditional optical approaches, the link operates without a DSP, relying on linear drive optics to maintain high SNR and low BER. The Photonic Fabric chiplet supports 4.4 Tbps of bandwidth, more than a single HBM3E stack, and is protocol adaptive, supporting AXI, HBM/DDR, UAL, and CXL.
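To put the efficiency figure in perspective, the short sketch below converts energy per bit into link power using power = energy per bit × bit rate. It assumes, purely for illustration, that the quoted 2.4 pJ/bit applies to a chiplet running at its full 4.4 Tbps; the presentation did not state that pairing, and the function name `link_power_watts` is hypothetical.

```python
def link_power_watts(energy_pj_per_bit: float, bandwidth_tbps: float) -> float:
    """Estimate link power from energy per bit and bit rate.

    power [W] = energy per bit [J/bit] * bit rate [bit/s]
    """
    joules_per_bit = energy_pj_per_bit * 1e-12   # pJ -> J
    bits_per_second = bandwidth_tbps * 1e12      # Tbps -> bit/s
    return joules_per_bit * bits_per_second


# Assumption: 2.4 pJ/bit holds at the chiplet's full 4.4 Tbps rate.
watts = link_power_watts(2.4, 4.4)
print(f"{watts:.2f} W")  # prints "10.56 W"
```

Under that assumption, moving 4.4 Tbps would cost roughly 10.6 W of interconnect power. The result is only as good as the assumed pairing of the two figures.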

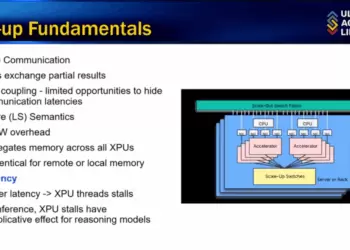

At the system level, the Photonic Fabric Module (PFM) integrates two 36 GB HBM3E stacks, eight DDR5 channels (2 TB capacity), and an 8 Tbps scale-up switch. Sixteen such modules can be assembled into an appliance that offers 115 Tbps of backend switching and 32 TB of unified DDR5 memory, with HBM acting as a write-through cache. Latency from server to memory is ~300 ns, compared with tens of microseconds for conventional networks. Celestial says this architecture reduces sharding, improves throughput, and enables collective communications across XPUs with deterministic tail latencies at p99 and p99.9.
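The appliance figures can be cross-checked with simple arithmetic. The sketch below multiplies the per-module numbers by sixteen. It uses the 7.2 Tbps per-module bandwidth listed in the summary further down, since 16 × 7.2 Tbps is about 115 Tbps, whereas 16 × 8 Tbps would be 128 Tbps. That mapping is an inference from the published numbers, not something Celestial stated, and the names `ModuleSpec` and `appliance_totals` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModuleSpec:
    """Per-module figures quoted for the Photonic Fabric Module."""
    hbm_gb: int = 2 * 36          # two 36 GB HBM3E stacks
    ddr5_tb: int = 2              # eight DDR5 channels, 2 TB total
    bandwidth_tbps: float = 7.2   # per-module bandwidth from the summary list


def appliance_totals(module: ModuleSpec, modules: int = 16) -> dict:
    """Scale per-module figures linearly to an appliance of `modules` units."""
    return {
        "hbm_gb": module.hbm_gb * modules,
        "ddr5_tb": module.ddr5_tb * modules,
        "bandwidth_tbps": module.bandwidth_tbps * modules,
    }


totals = appliance_totals(ModuleSpec())
print(totals["ddr5_tb"], "TB DDR5")                      # 32 TB DDR5
print(round(totals["bandwidth_tbps"], 1), "Tbps total")  # 115.2 Tbps total
```

The DDR5 total matches the quoted 32 TB of unified memory, and the bandwidth total rounds to the quoted 115 Tbps. The same scaling gives 1,152 GB of HBM3E across the appliance, a derived figure that was not quoted in the talk.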

Applications include XPU-to-XPU scale-up, KV cache offload, vector database and embeddings serving, recommendation systems, and long-context inference for large AI models. For developers, Celestial offers PF-NIC add-in cards supporting CXL 3.0/2.0 over PCIe Gen6, delivering 2 Tbps per card and enabling low-latency server access to PF appliances. Software complements the hardware with link management, telemetry, and predictive insights for fleets of interconnected modules.

• Photonic Fabric efficiency: ~2.4 pJ/bit, nanosecond-class latency

• PFLink: compact, thermally stable optical modulator integrated in-die

• No DSP: linear drive optics maintain SNR/BER without power-hungry DSP blocks

• Photonic Fabric Chiplet: 4.4 Tbps bandwidth, UCIe/proprietary PHY support

• Photonic Fabric Module: 72 GB HBM3E, 2 TB DDR5, 8 Tbps switching, 7.2 Tbps bandwidth per module

• PF Appliance: 16 modules = 115 Tbps switching, 32 TB DDR5 shared memory

• PF-NIC: CXL 3.0/2.0 support, 2 Tbps over PCIe Gen6 per card

• Use cases: XPU scale-up, MoE inference, KV cache offload, embeddings serving, long-context AI, checkpointing/weight streaming

• Benefits: reduced sharding, deterministic latency, throughput gains, new application enablement

“Photonic Fabric delivers transformative benefits by reducing tail latency and enabling new AI applications, especially those with long context lengths that are difficult or impossible today,” Mahatme said.

🌐 Analysis: Founded in 2020 and headquartered in Santa Clara, California, Celestial AI specializes in optical interconnect technology for AI infrastructure. Its leadership includes co-founder and CEO David Lazovsky, previously the founder and CEO of Intermolecular Inc. and a venture partner at Khosla Ventures; co-founder and COO Preet Virk, who has extensive semiconductor industry experience in operational and business development roles; and CTO Philip Winterbottom, whose background includes leadership roles in developing advanced computing systems. In October 2024, Celestial AI strengthened its technology portfolio by acquiring a significant silicon photonics patent collection from Rockley Photonics.

In March 2025, Celestial AI announced it secured $250 million in Series C1 funding led by Fidelity Management & Research Company, increasing its total funding to over $515 million. The funding round included new investors such as BlackRock, Maverick Silicon, Tiger Global Management, and Lip-Bu Tan, alongside existing investors AMD Ventures, Koch Disruptive Technologies, Temasek, Xora Innovation, Porsche Automobil Holding SE, and The Engine Ventures.
