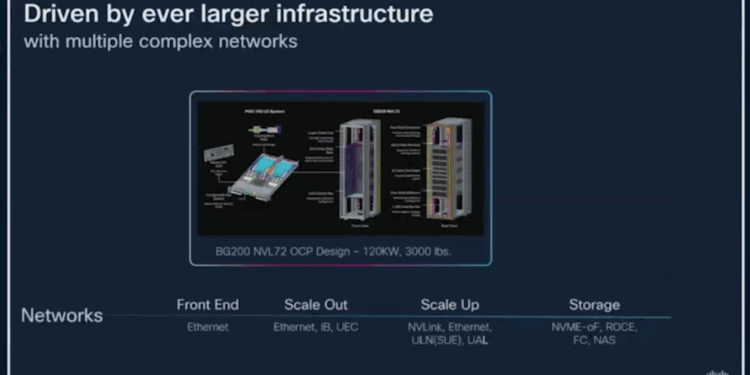

At HOTI25, Will Eatherton, VP at Cisco, highlighted the mounting complexity of networking in large-scale AI clusters and Cisco’s vision for simplifying operations. Much of the discussion in the industry, he noted, has focused on the extraordinary size and power requirements of today’s AI systems, with racks like NVIDIA’s NVL72 design drawing up to 120 kW and weighing over 3,000 pounds. But Eatherton argued that the more pressing challenge lies in coordinating the many overlapping network fabrics that must come together to make these clusters function reliably.

Eatherton described the proliferation of network domains that serve different roles in AI infrastructure. Front-end networks, traditionally Ethernet-based, are now carrying growing amounts of east-west traffic as AI agents and scale-out applications expand. Scale-out fabrics link GPUs across nodes using Ethernet, InfiniBand, and UEC. Scale-up networks, employing NVLink, Ethernet, ULN (SUE), and UAL, provide ultra-high-bandwidth connections within a rack. Storage networking, once a separate domain, is converging with compute fabrics as NVMe-oF, RoCE, Fibre Channel, and NAS are pressed into higher-performance AI-driven roles.

- Front End: Ethernet

- Scale Out: Ethernet, InfiniBand, UEC

- Scale Up: NVLink, Ethernet, ULN (SUE), UAL

- Storage: NVMe-oF, RoCE, Fibre Channel, NAS

The convergence of these domains increases operational risk. Eatherton warned that a single interface failure or miswired connection can ripple across an entire cluster, leading to costly downtime. Compounding this, AI clusters operate on much shorter lifecycles than traditional data center infrastructure. Whereas enterprise networks have typically refreshed every seven to ten years, the expectation now is that clusters will turn over in three years or less, raising the stakes for multi-generational compatibility across fabrics, power, and cooling.

Cisco’s strategy for addressing these challenges is to unify network operations through a common operating system and controller framework. The company is extending NX-OS and IOS-XR across domains, while also investing heavily in SONiC for hyperscale, new cloud, and select enterprise customers. A unified controller is envisioned to span front-end, scale-out, and scale-up networks, while enhanced functions in the Nexus Dashboard will deliver cluster-level troubleshooting and analytics. Cisco is also developing Nexus HyperFabric AI, a full-lifecycle management system for AI clusters, encompassing design, deployment, cabling, and ongoing operations.

Eatherton closed by stressing that networking is what transforms a GPU or server into a usable cluster, and that manageability must be engineered into these systems from the start.

🌐 Analysis

Cisco’s presentation underscores a broader theme at HOTI25: the move from focusing solely on raw performance to addressing operational complexity. AI clusters are no longer just about compute density or power draw—they are multi-layered ecosystems of interconnected fabrics. Cisco is betting that customers will value integrated management that reduces risk and accelerates deployment. With its deep enterprise footprint and investment in SONiC for hyperscalers, Cisco is positioning itself as the vendor that can bridge both worlds: traditional enterprise networks and the emerging fabric complexity of AI.