At this week’s IEEE Hot Interconnects conference, Meta outlined the extraordinary scale and technical challenges of building one of the world’s largest AI infrastructures. Wes Bland, a research scientist at Meta AI, described how the company is deploying GPU clusters of hundreds of thousands of GPUs, custom silicon for inference, and advanced networking fabrics to power workloads ranging from recommendation engines to generative AI models such as Llama.

Meta’s AI infrastructure already spans dozens of data centers worldwide, with facilities now reaching into the multi-gigawatt range. A flagship example is the new Prometheus supercluster in Ohio, which is expected to rival Manhattan in physical footprint. Bland noted that Meta’s GPU fleet now exceeds 600,000 H100-equivalents and is supplemented by the company’s internally designed MTIA accelerator, which delivers more than three times the compute density of earlier inference chips.

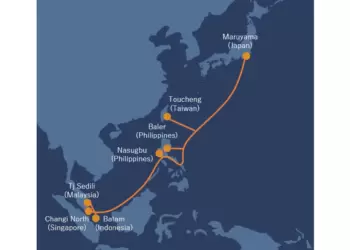

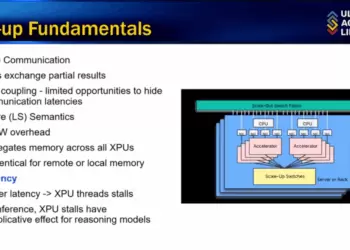

Networking remains a critical bottleneck at this scale. Meta has developed its own Disaggregated Scheduled Fabric (DSF) to provide near-optimal load balancing and credit-based flow control, addressing the elephant flows and oscillations that strain conventional approaches. Physical scale brings its own challenges: because of power limits, GPUs must be spread across large distances within a data center, which introduces latency and packet-ordering issues that call for transports more flexible than InfiniBand.
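To make "credit-based flow control" concrete, here is a minimal toy simulation, assuming a simplified model that is not Meta's DSF implementation: each sender holds cells in a virtual output queue (VOQ) and may transmit only after the egress port grants it a credit, and the egress grants credits only for buffer space it can guarantee. The class names, buffer size, and drain rate are all hypothetical. The point it illustrates is that a many-to-one incast cannot overflow the egress buffer, because excess data waits at the senders instead of being dropped.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Ingress:
    """Hypothetical ingress node with a virtual output queue (VOQ) for one egress port."""
    name: str
    voq: deque = field(default_factory=deque)
    credits: int = 0

    def send(self) -> int:
        """Transmit one cell per credit held; uncredited cells stay queued at the ingress."""
        sent = 0
        while self.credits > 0 and self.voq:
            self.voq.popleft()
            self.credits -= 1
            sent += 1
        return sent


class Egress:
    """Hypothetical egress port that only grants credits for buffer space it can guarantee."""

    def __init__(self, buffer_cells: int, drain_per_tick: int):
        self.buffer_cells = buffer_cells
        self.drain_per_tick = drain_per_tick
        self.occupancy = 0    # cells currently buffered at the egress
        self.outstanding = 0  # credits granted but not yet used
        self.peak = 0         # highest occupancy ever observed

    def grant(self, senders: list) -> None:
        """Hand out credits round-robin, one per sender per pass, until free space runs out."""
        free = self.buffer_cells - self.occupancy - self.outstanding
        while free > 0:
            progressed = False
            for s in senders:
                if free > 0 and len(s.voq) > s.credits:
                    s.credits += 1
                    self.outstanding += 1
                    free -= 1
                    progressed = True
            if not progressed:
                break

    def receive(self, cells: int) -> None:
        """Accept credited cells; each one consumes a previously granted credit."""
        self.occupancy += cells
        self.outstanding -= cells
        self.peak = max(self.peak, self.occupancy)

    def drain(self) -> None:
        """Forward up to drain_per_tick cells out of the egress buffer."""
        self.occupancy -= min(self.occupancy, self.drain_per_tick)


def simulate(ticks: int = 20) -> tuple:
    """4-to-1 incast: four senders with 10 cells each target one egress with an 8-cell buffer."""
    senders = [Ingress(f"in{i}", deque(range(10))) for i in range(4)]
    egress = Egress(buffer_cells=8, drain_per_tick=4)
    delivered = 0
    for _ in range(ticks):
        egress.grant(senders)
        for s in senders:
            n = s.send()
            egress.receive(n)
            delivered += n
        egress.drain()
    return delivered, egress.peak


delivered, peak = simulate()
print(delivered, peak)  # 40 8
```

All 40 cells arrive, and the egress occupancy peaks at exactly its 8-cell capacity without ever exceeding it. A real scheduled fabric adds request messages, credit latency across the fabric, and many egress ports, none of which this sketch models.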

The scale of training continues to surge. Llama 4, for example, was trained on 30 trillion tokens, double the volume used for Llama 3. Meta’s Tectonic distributed file system handles data at exabyte scale, while topology-aware scheduling places jobs so that compute clusters are used efficiently.
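The talk summary does not describe how Meta's topology-aware scheduler works, so the following is only a hypothetical heuristic that shows the general idea: keep a job's GPUs as close together as possible, because traffic that stays inside a rack or pod crosses fewer network tiers. It assumes a made-up two-level topology (pods containing racks, each with a count of free GPUs) and tries a single rack first (best fit), then a single pod (fewest racks), and spans pods only as a last resort. The `place` function and topology layout are illustrative inventions.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical topology: pod name -> {rack name: free GPUs}
Topology = Dict[str, Dict[str, int]]
Placement = List[Tuple[str, str, int]]  # (pod, rack, GPUs taken)


def _greedy(racks: List[Tuple[str, str, int]], need: int) -> Placement:
    """Take racks with the most free GPUs first until the request is covered."""
    placement = []
    for pod, rack, free in sorted(racks, key=lambda r: -r[2]):
        if need == 0:
            break
        take = min(free, need)
        if take > 0:
            placement.append((pod, rack, take))
            need -= take
    return placement


def place(topo: Topology, need: int) -> Optional[Placement]:
    all_racks = [(p, r, f) for p, racks in topo.items() for r, f in racks.items()]
    # 1. Single rack: best fit (smallest rack that still fits) to limit fragmentation.
    fits = [x for x in all_racks if x[2] >= need]
    if fits:
        pod, rack, _ = min(fits, key=lambda x: x[2])
        return [(pod, rack, need)]
    # 2. Single pod: pick the pod whose greedy placement touches the fewest racks.
    best = None
    for pod, racks in topo.items():
        if sum(racks.values()) >= need:
            cand = _greedy([(pod, r, f) for r, f in racks.items()], need)
            if best is None or len(cand) < len(best):
                best = cand
    if best is not None:
        return best
    # 3. Span pods only when no single pod has enough free GPUs.
    if sum(f for _, _, f in all_racks) >= need:
        return _greedy(all_racks, need)
    return None  # not enough free GPUs anywhere


topo = {"podA": {"a1": 8, "a2": 4, "a3": 6}, "podB": {"b1": 16, "b2": 2}}
print(place(topo, 6))   # [('podA', 'a3', 6)]
print(place(topo, 17))  # [('podB', 'b1', 16), ('podB', 'b2', 1)]
```

A 6-GPU job lands in the tightest-fitting rack, and a 17-GPU job stays within one pod using two racks rather than three. Production schedulers also weigh factors this sketch ignores, such as failure domains, job priority, and power budgets.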

- Meta’s GPU fleet now exceeds 600,000 H100-equivalents

- MTIA inference chip delivers 3.5× denser compute, 7× sparse compute efficiency

- New Prometheus supercluster in Ohio will be among the largest data centers ever built

- Disaggregated Scheduled Fabric addresses congestion, low-entropy traffic, and elephant flows

- Power efficiency pursued through GPU optimization, optical interconnects, and silicon co-design
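Why low-entropy traffic and elephant flows are hard on conventional networks can be shown with a small comparison. Per-flow ECMP hashes each flow's 5-tuple to a single link, so a handful of large flows with nearly identical headers can collide on one link while others sit idle. With 4 flows hashed uniformly onto 4 links, the chance that no two collide is only 4!/4^4 ≈ 9%. Scheduled fabrics commonly split traffic into cells and spray them across all links instead; treating that as DSF's exact mechanism is an assumption here. The flows below are hypothetical, and `zlib.crc32` stands in for a switch's hash function.

```python
import zlib


def ecmp_link(flow: tuple, n_links: int) -> int:
    """Per-flow ECMP: hash the flow's 5-tuple once, so all of its packets share one link."""
    return zlib.crc32(repr(flow).encode()) % n_links


def loads_ecmp(flows: list, n_links: int) -> list:
    """Sum each flow's size onto whichever single link its hash selects."""
    loads = [0] * n_links
    for five_tuple, size in flows:
        loads[ecmp_link(five_tuple, n_links)] += size
    return loads


def loads_spray(flows: list, n_links: int) -> list:
    """Cell spraying: split every flow into unit cells and deal them round-robin across all links."""
    loads = [0] * n_links
    nxt = 0
    for _, size in flows:
        for _ in range(size):
            loads[nxt] += 1
            nxt = (nxt + 1) % n_links
    return loads


# Four low-entropy elephant flows: same destination, protocol (UDP = 17), and ports
# (4791, the RoCEv2 port), differing only in source address.
flows = [((f"10.0.0.{i}", "10.0.1.1", 17, 4791, 4791), 100) for i in range(1, 5)]
ecmp = loads_ecmp(flows, 4)
spray = loads_spray(flows, 4)
print("ECMP :", ecmp)   # depends on the hash; collisions are likely
print("Spray:", spray)  # Spray: [100, 100, 100, 100]
```

Spraying always reaches the ideal per-link load (total traffic divided by link count), so the busiest ECMP link can only match it, never beat it. The price of spraying is that cells of one flow arrive out of order, which the receiving side must tolerate or reorder.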

Bland stressed that power is the scarcest resource in this environment, requiring co-optimization across hardware, software, and infrastructure. The company is actively hiring engineers and researchers to push these limits further.