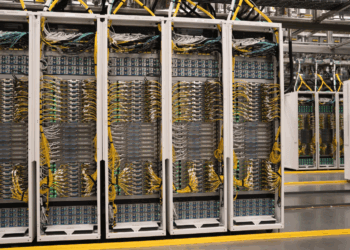

Microsoft is building out AI-scale networking with more than $100 billion in near-term investment, said Deepak Bansal, Corporate VP, during a keynote at the IEEE Hot Interconnects conference. He described AI clusters now exceeding 100,000 GPUs, creating “hundreds of thousands of potential points of failure” that demand defect-free builds, automation, and real-time telemetry across Ethernet and InfiniBand interconnects.
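To see why "hundreds of thousands of potential points of failure" forces the emphasis on defect-free builds, a back-of-the-envelope calculation helps. The sketch below assumes components fail independently with the same probability. The component count and per-day failure rate are hypothetical illustrations, not figures from the keynote.

```python
def p_any_failure(components: int, p_fail: float) -> float:
    """Probability that at least one of `components` parts fails,
    assuming independent failures with identical probability `p_fail`."""
    return 1.0 - (1.0 - p_fail) ** components


def expected_failures(components: int, p_fail: float) -> float:
    """Expected number of failed parts over the same interval."""
    return components * p_fail


# Hypothetical numbers: 100,000 parts, each with a 1-in-100,000 chance
# of failing on a given day.
if __name__ == "__main__":
    n, p = 100_000, 1e-5
    print(f"P(at least one failure per day) = {p_any_failure(n, p):.3f}")  # about 0.632
    print(f"Expected failures per day       = {expected_failures(n, p):.1f}")
```

Even with very reliable individual parts, a fabric of this size would see some failure on most days under these assumptions, which is the case for automation and real-time telemetry.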

Bansal outlined Microsoft’s evolving network architecture, including DPUs for GPU-to-storage networking with throughput above 12.8 Tbps, “smart switches” for bare-metal virtualization, and automated fabric health monitoring across distributed data centers. Azure Managed CNI, built on eBPF, supports AI workloads with flexible IP routing, fine-grained security policies, and open-source observability via Retina. Microsoft’s AI appliance model integrates distributed GPUs into Azure, connecting securely to storage through Private Link and policy-enforced SDN.

Security remains central. Microsoft is enforcing network security perimeters with unified firewalls, egress controls, and mandatory Private Link for AI storage. Application networking for AI follows four imperatives: scale/performance, security, observability, and operational simplicity. Global reach is supported by 60+ Azure regions, 275,000 miles (442,500 km) of fiber, >2 Pbps of WAN capacity, and 200 Tbps of peering. Finally, Bansal detailed AI-aware load balancing: an application gateway combining advanced load balancing, semantic caching, prompt transformation, and ORCA metrics ingestion to optimize inference workloads across models such as GPT, LLaMA, and Claude.

- AI clusters now scale to 100k+ GPUs; automation and telemetry are required for defect-free operation

- Smart switches based on DPUs support bare-metal virtualization across compute, storage, and AI clusters

- Fabric health: automated streaming telemetry, defect analysis, and AI-based mitigation

- Azure Managed CNI (eBPF) plus Retina observability: multi-cloud scale, with >1M IPs supported

- AI appliances integrate distributed GPUs into Azure via Private Link and SDN enforcement

- Security perimeter: unified firewalls, granular access control, and egress protections

- WAN scale: 2 Pbps+ backbone, 200 Tbps peering, 40k+ interconnects across 60+ regions

- AI load balancer: semantic cache, prompt transformer, ORCA telemetry, advanced L7 policies
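The AI-aware load balancing idea can be illustrated with a minimal sketch: check a semantic cache first, then route to the least-loaded model backend. This is not Microsoft's implementation. The bag-of-words `embed` function is a toy stand-in for a real embedding model, the `load_reports` dictionary is a simplified stand-in for ORCA-style load metrics reported by backends, and all names here are hypothetical.

```python
import math
from collections import Counter
from dataclasses import dataclass, field


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


@dataclass
class SemanticCache:
    threshold: float = 0.8                       # minimum similarity for a hit
    entries: list = field(default_factory=list)  # (embedding, response) pairs

    def get(self, prompt: str):
        """Return the cached response for the most similar prompt, or None."""
        query = embed(prompt)
        best = max(self.entries, key=lambda e: cosine(query, e[0]), default=None)
        if best is not None and cosine(query, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, prompt: str, response: str) -> None:
        self.entries.append((embed(prompt), response))


def pick_backend(load_reports: dict) -> str:
    """Pick the backend with the lowest reported utilization (0.0 to 1.0)."""
    return min(load_reports, key=load_reports.get)


def route(prompt, cache, load_reports, call_backend):
    """Serve from cache when possible; otherwise call the least-loaded backend."""
    cached = cache.get(prompt)
    if cached is not None:
        return "cache", cached
    backend = pick_backend(load_reports)
    response = call_backend(backend, prompt)
    cache.put(prompt, response)
    return backend, response
```

A production gateway would use learned embeddings, cache expiry, and richer per-request cost signals. The sketch only shows how caching and load-aware selection fit together in one request path.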

“We are on our mission to build the AI infrastructure for the world,” Bansal said. “Very soon, there will not be any app that is not AI-enabled. If an app is not AI-enabled, it will be called a legacy app.”

🌐 Analysis: Microsoft’s keynote shows how AI infrastructure is driving full-stack networking innovation, from optics and WAN capacity to DPUs, SDN, and security models. Its eBPF-based Azure CNI and smart load balancer stack extend Kubernetes observability to AI. The move mirrors Nvidia’s BlueField DPU-based networking, Google’s TPU pod fabrics, and AWS’s Elastic Fabric Adapter, underscoring the hyperscaler arms race to interconnect hundreds of thousands of accelerators at petabit scale.