At today’s Hot Interconnects online event, Nubis Communications CTO Peter Winzer warned that AI and HPC clusters are increasingly I/O bound, with interconnect performance unable to keep pace with compute. Winzer, a former Bell Labs researcher with over 100 patents and 500 papers, introduced Co-Packaged Optics Extensions (CPX) as a pragmatic path to bring co-packaged optics into mainstream deployment.

Winzer outlined the widening gap: switch capacity is growing 40% per year, while SerDes I/O per lane is growing only 20% per year. The result is GPUs starved for bandwidth, even as AI cluster sizes surge to hundreds of thousands of accelerators. “Every AI system could use several orders of magnitude more I/O,” Winzer said, framing the issue with a roofline model that showed systems increasingly limited by data movement rather than compute.
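
To get a feel for how quickly the two growth rates diverge, here is a minimal Python sketch that compounds the quoted figures. It assumes both rates stay constant and compound annually from a common starting point, which is a simplification and not something stated in the talk; the function name `capacity_gap` is purely illustrative.

```python
def capacity_gap(years, switch_growth=0.40, serdes_growth=0.20):
    """Factor by which switch capacity outgrows per-lane SerDes I/O.

    Assumes constant annual growth rates compounding from an equal
    baseline (an illustrative assumption, not a claim from the talk).
    """
    return ((1 + switch_growth) / (1 + serdes_growth)) ** years


if __name__ == "__main__":
    for y in (1, 3, 5, 10):
        print(f"after {y:2d} years: switch capacity leads by {capacity_gap(y):.2f}x")
```

Under these assumptions the gap roughly doubles in five years, and that shortfall has to be absorbed by more lanes, more modules, or a different I/O technology.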

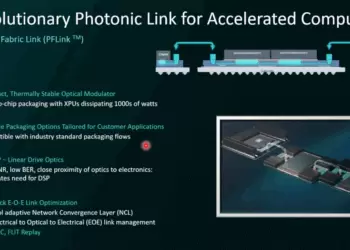

Nubis proposes CPX as a connectorized co-packaged copper-and-optics solution that extends the proven pluggable ecosystem inside the package. Each CPX module delivers 6.4 Tbps at 5 pJ/bit, is compatible with existing CPC sockets, and supports multi-vendor interoperability. By relying on retimer-free linear optics, CPX avoids the 30 W per-module power hit of DSP-based retimed optics, enabling hyperscale operators to cut interconnect power by more than 1 GW in 600,000-GPU clusters. Winzer also stressed that single-wavelength I/O with fiber shuffles outperforms WDM for AI: lower loss, lower cost, and simpler full fan-out connectivity.

- Switch capacity grows 40%/year vs SerDes I/O at 20%/year → bandwidth starvation

- Retimer-free linear optics reduce interconnect power from 1.7 GW to 600 MW in large clusters

- CPX delivers 6.4 Tbps per connector at 5 pJ/bit, aligning with SerDes I/O roadmaps

- Connectorized co-packaging supports multi-vendor interoperability

- Single-wavelength I/O with fiber shuffles preferred over WDM for AI workloads
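
The power figures above can be sanity-checked with simple arithmetic. The sketch below multiplies the quoted throughput by the quoted energy per bit to get per-module power, then takes the difference between the two cluster-level figures. It assumes the 1.7 GW and 600 MW numbers refer to the same 600,000-GPU cluster mentioned earlier; the per-GPU saving is a derived illustration, not a number from the talk.

```python
TBPS = 1e12   # bits per second in one Tbps
PJ = 1e-12    # joules in one picojoule


def module_power_watts(throughput_bps, energy_j_per_bit):
    """Power drawn by a link: bits/s multiplied by joules/bit gives watts."""
    return throughput_bps * energy_j_per_bit


# Per-module power from the quoted CPX figures: 6.4 Tbps at 5 pJ/bit.
cpx_module_w = module_power_watts(6.4 * TBPS, 5 * PJ)

# Cluster-level interconnect power quoted for retimed vs. linear optics.
retimed_gw = 1.7
linear_gw = 0.6
savings_gw = retimed_gw - linear_gw

# Assumption: both figures describe the 600,000-GPU cluster from the article.
gpus = 600_000
savings_per_gpu_w = savings_gw * 1e9 / gpus

if __name__ == "__main__":
    print(f"CPX module power:  {cpx_module_w:.0f} W")
    print(f"Cluster savings:   {savings_gw:.1f} GW")
    print(f"Savings per GPU:   {savings_per_gpu_w:.0f} W (derived, illustrative)")
```

The 1.1 GW difference is consistent with the "more than 1 GW" claim, and the roughly 32 W per-module figure shows why an extra 30 W for DSP-based retiming would about double a module's power draw.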

“Our CPX paradigm brings the proven pluggable optics ecosystem inside the package, while maintaining interoperability and low power,” said Peter Winzer, CTO of Nubis Communications.

🌐 Analysis: Nubis is carving out a pragmatic middle path between copper and full photonic interposers. By emphasizing linear optics, CPX aligns with existing SerDes roadmaps while sidestepping the power and cost penalties of retimers. With hyperscalers racing to deploy gigawatt-scale AI clusters, operators may favor CPX’s incremental approach over riskier, all-optical bets. Competitors like Lightmatter and Ayar Labs push more radical 3D photonic designs, but Nubis’ strategy may resonate with operators demanding compatibility and proven deployment models.