During a wide-ranging Quantum Computing Panel at HPE Discover 2025, executives from HPE Labs and the HPC & AI Infrastructure team emphasized that the company’s strategy is focused on building hybrid quantum-classical systems that can deliver real-world outcomes over the next decade. The panel featured Kirk Bresniker, HPE Labs Chief Architect and Fellow; Gerald Kleyn, VP of Advanced Business Development, HPC & AI Infrastructure; and Scott Shaffer, VP & Chief Technologist for Compute.

Bresniker explained that HPE’s quantum research agenda is rooted in decades of experience in scalable computing and shaped by lessons learned from the evolution of AI, HPC, and simulation-driven design. “We want to move beyond demonstrations and academic benchmarks toward systems that enterprises can use to solve real problems,” said Bresniker. He emphasized that quantum computing cannot stand in isolation: it must be integrated with classical compute, sensing, and networking. HPE’s vision centers on four pillars:

First, developing unified programming frameworks that abstract hardware differences across CPUs, GPUs, FPGAs, and emerging QPU architectures to make quantum-classical workflows more accessible.

Second, advancing distributed quantum simulation — using today’s HPC resources to co-design hybrid architectures and accelerate algorithm development.

Third, applying physics-based AI techniques to optimize how problems are mapped across quantum and classical resources, especially for complex multi-physics and materials problems.

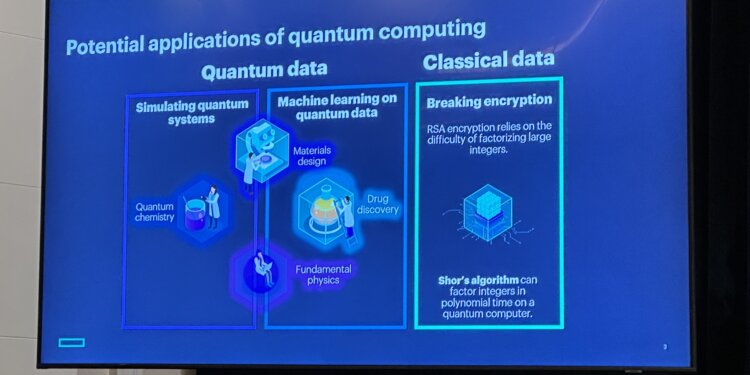

Finally, advancing post-quantum cybersecurity — embedding quantum-safe cryptography into HPE systems to protect long-term data confidentiality.

Rather than betting on a single quantum modality, HPE is building an inclusive ecosystem that spans superconducting qubits, trapped ions, photonics, and other approaches. “It’s not about picking a winner. Just as we didn’t rely on one type of accelerator for AI, we don’t expect a single modality to deliver all the value in quantum. The winning architectures will combine the best of each domain — integrated with our classical compute, storage, and networking platforms,” said Bresniker.

A key discussion point was the growing urgency around post-quantum cryptography. Shaffer noted that nation-states are already preparing for “harvest now, decrypt later” scenarios, which is accelerating government mandates. HPE is embedding post-quantum cryptography in its next-generation compute platforms, starting with HPE ProLiant Gen12 servers.

In response to an audience question from Converge Digest’s Jim Carroll on quantum networking, Bresniker acknowledged that scalable quantum networks remain a future goal. Current work focuses on minimizing classical data overhead in distributed quantum computing and adapting supercomputing fabrics for emerging QPU clusters.

- HPE’s quantum strategy emphasizes hybrid quantum-classical systems with an open, inclusive partner ecosystem

- Current focus areas: unified programming frameworks, distributed quantum simulation, AI-enhanced optimization, and post-quantum cybersecurity

- Post-quantum cryptography is now shipping in HPE ProLiant Gen12 servers, with support for LMS-based signatures (LMS is a hash-based signature scheme, not an encryption scheme) at the hardware root of trust

- Distributed quantum computing is likely to emerge first, with supercomputing fabrics supporting low-latency, high-bandwidth classical links between QPU clusters

- Quantum networking, photonic interconnects, and multi-modality systems are active areas of HPE Labs research, with future applications anticipated

- AI tools will increasingly assist with breaking complex problems into optimal hybrid classical-quantum workloads

“As we look forward, we don’t expect one quantum modality to dominate. Instead, hybrid systems that intelligently combine classical and quantum resources will deliver the earliest enterprise and scientific benefits,” Bresniker concluded.

Q&A Highlights

Q: Given the architecture challenges of scaling quantum systems, what kind of networking will be needed to interconnect quantum clusters? Will we need fully quantum networks with entanglement distribution and quantum repeaters, or can we repurpose classical high-performance optical networking to enable scalable distributed quantum computing?

A (Kirk Bresniker): “That’s a critical question. We expect that in the near term, practical distributed quantum computing will rely on classical networking — extremely high bandwidth, ultra-low latency fabrics — to connect QPU domains. A single quantum domain may be limited to 100,000 qubits. To scale to millions of logical qubits, you’ll need to network many domains. Today we’re developing what we call ‘adaptive circuits,’ which use AI to intelligently partition workloads across domains and minimize classical data exchange. Our supercomputing fabrics are already being optimized for this.

“Looking further ahead, photonic-based quantum networking, distributing entanglement between quantum systems, is very promising, and we’re actively researching it. That could enable fully quantum communication between heterogeneous QPU modalities: superconducting here, trapped ion there, photonic links in between. But that’s still several years out. Right now, the biggest wins will come from smart hybrid designs that lean on classical optical networking to connect quantum clusters, with AI helping us make those architectures as efficient as possible.”
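To make the partitioning idea in this answer concrete, here is a minimal Python sketch. It is not HPE's "adaptive circuits" method, which the panel did not describe in detail, and it uses no AI. It assumes a toy model in which every two-qubit gate that spans two QPU domains costs one classical exchange, and it applies a simple swap-based local search to reduce that count. The names `cut_size` and `greedy_partition`, and the example circuit, are hypothetical.

```python
from itertools import combinations


def cut_size(gates, assignment):
    """Count two-qubit gates whose operands sit in different domains."""
    return sum(1 for a, b in gates if assignment[a] != assignment[b])


def greedy_partition(num_qubits, gates, num_domains, capacity):
    """Assign qubits to domains, then swap pairs while the cut shrinks.

    Toy heuristic (assumption): cross-domain gates stand in for classical
    data exchange. Swaps keep every domain's size fixed, so the capacity
    limit checked up front is never violated.
    """
    if num_domains * capacity < num_qubits:
        raise ValueError("not enough total capacity for all qubits")

    # Round-robin start: domain sizes differ by at most one qubit.
    assignment = [q % num_domains for q in range(num_qubits)]
    best = cut_size(gates, assignment)

    improved = True
    while improved:
        improved = False
        for a, b in combinations(range(num_qubits), 2):
            if assignment[a] == assignment[b]:
                continue  # swapping within one domain changes nothing
            assignment[a], assignment[b] = assignment[b], assignment[a]
            candidate = cut_size(gates, assignment)
            if candidate < best:
                best = candidate  # keep the swap
                improved = True
            else:
                # Undo the swap: it did not reduce cross-domain gates.
                assignment[a], assignment[b] = assignment[b], assignment[a]
    return assignment


# Hypothetical circuit: two tightly coupled triangles joined by one gate.
example_gates = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
example_assignment = greedy_partition(
    num_qubits=6, gates=example_gates, num_domains=2, capacity=3
)
print(example_assignment, cut_size(example_gates, example_assignment))
```

A local search like this can stall in a local minimum on larger circuits. It only shows the objective being minimized: fewer cross-domain gates means less traffic on the classical fabric.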

Q: When do you expect quantum cybersecurity threats to become a pressing enterprise risk?

A (Scott Shaffer): “Timelines are accelerating. Nation-states are already pursuing ‘harvest now, decrypt later’ strategies. Governments are mandating post-quantum readiness by 2027–2029. We’re embedding post-quantum cryptography now because systems sold today will still be operating a decade from now — the threat is real and preparation can’t wait.”
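Hash-based signature schemes such as LMS, named in the takeaways above, rely only on the security of a hash function, which is why they are considered quantum-resistant. The sketch below is not LMS. It implements the much simpler Lamport one-time signature to show the underlying idea, assuming SHA-256 as the hash. LMS itself, as specified in RFC 8554, combines Winternitz-style one-time signatures with a Merkle tree so that one public key can verify many signatures. All function names here are illustrative.

```python
import hashlib
import secrets


def H(data: bytes) -> bytes:
    """SHA-256 digest (assumed hash function for this sketch)."""
    return hashlib.sha256(data).digest()


def keygen():
    """Generate a Lamport key pair: 256 pairs of secrets and their hashes."""
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    pk = [(H(s0), H(s1)) for s0, s1 in sk]
    return sk, pk


def message_bits(message: bytes):
    """Return the 256 bits of the message digest, most significant first."""
    digest = H(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def sign(sk, message: bytes):
    """Reveal one secret per digest bit. A key pair must sign only once."""
    return [sk[i][bit] for i, bit in enumerate(message_bits(message))]


def verify(pk, message: bytes, signature) -> bool:
    """Check that each revealed secret hashes to the matching public value."""
    if len(signature) != 256:
        return False
    bits = message_bits(message)
    return all(H(sig) == pk[i][bit] for i, (sig, bit) in enumerate(zip(signature, bits)))


# Usage: sign a (hypothetical) firmware blob and check it.
sk, pk = keygen()
firmware = b"example firmware image"
signature = sign(sk, firmware)
print(verify(pk, firmware, signature))
print(verify(pk, b"tampered firmware image", signature))
```

Because each signature reveals half of the private key, a Lamport key pair must never sign two messages. Managing that one-time state across many keys is the problem that stateful schemes such as LMS address.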

Q: Are HPE’s HPC customers already experimenting with quantum systems?

A (Gerald Kleyn): “Yes — some HPC customers are already deploying small-scale quantum systems for workloads like random number generation and quantum chemistry. We partner with these customers and help match the right quantum vendors to their needs. The goal is to ensure seamless integration with today’s HPC and AI environments so quantum can start delivering scientific results.”