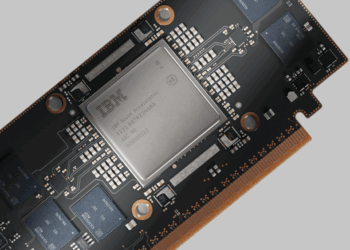

IBM has introduced the Power11 server family, a next-generation lineup engineered for AI-driven enterprises and hybrid cloud deployments. Built around a rearchitected Power11 processor and featuring autonomous operation capabilities, Power11 delivers up to 55% better core performance than Power9 and up to 45% more capacity across entry and mid-range systems than its predecessor, Power10.

The Power11 family spans high-end, mid-range, and entry-level systems, and includes IBM Power Virtual Server for cloud deployments, certified for RISE with SAP. Notably, Power11 debuts with built-in support for IBM’s new Spyre Accelerator, a purpose-built AI system-on-a-chip set to become generally available in Q4 2025. Power11 systems are designed for 99.9999% availability, zero planned downtime through autonomous patching and workload movement, and ransomware detection in under one minute via IBM Power Cyber Vault. They also offer quantum-safe cryptography, spare-core resilience, and energy efficiency gains of up to 28% with a new smart power mode.
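To put the 99.9999% availability figure in perspective, the short sketch below converts an availability percentage into the downtime it allows per year. The function name and the 365-day year are illustrative assumptions, not part of IBM's announcement.

```python
# Convert an availability percentage into the downtime budget it implies.
# Assumption: a 365-day (non-leap) year is used as the measurement period.
SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def allowed_downtime_seconds(availability_percent: float,
                             period_seconds: float = SECONDS_PER_YEAR) -> float:
    """Return the maximum downtime (in seconds) permitted over the period."""
    unavailable_fraction = 1.0 - availability_percent / 100.0
    return unavailable_fraction * period_seconds


if __name__ == "__main__":
    # Compare common availability tiers, ending with "six nines".
    for pct in (99.9, 99.99, 99.999, 99.9999):
        print(f"{pct}% -> {allowed_downtime_seconds(pct):.1f} s/year")
```

Under these assumptions, six nines of availability leaves a budget of roughly half a minute of downtime per year.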

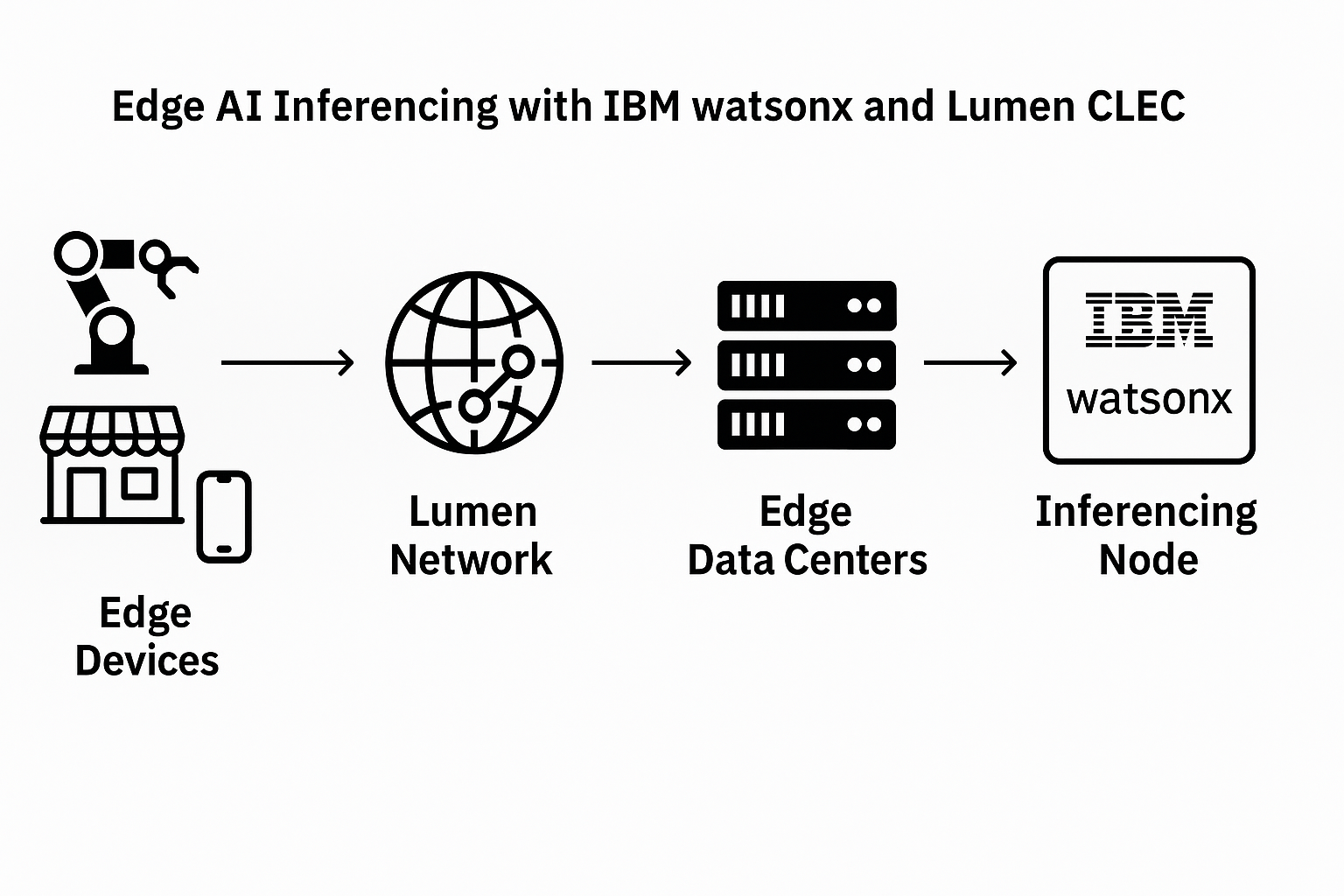

The flagship Power E1180 supports up to 256 cores and 64TB of DDR5 memory and is designed for large-scale enterprises with demanding AI and security requirements. Midrange options such as the Power E1150 and Power S1124 scale to 120 and 60 cores respectively, while the 2U Power S1122 is tailored for space-constrained sites. Each system runs AIX, IBM i, or Linux, and integrates with Red Hat OpenShift AI and watsonx tooling to streamline application modernization and AI adoption.

- IBM Power11 launches July 25, 2025, with general availability across all form factors and IBM Cloud

- Supports the IBM Spyre Accelerator for AI inference (Q4 2025 availability)

- Offers 99.9999% uptime and sub-minute ransomware detection via IBM Power Cyber Vault

- Delivers up to 2x performance-per-watt vs. comparable x86 systems

- Lineup spans entry through high-end models; the flagship Power E1180 supports up to 256 cores and 64TB of DDR5 memory

- Fully compatible with AIX, IBM i, Linux, and Red Hat OpenShift AI

“With Power11, clients can accelerate into the AI era with innovations tailored to their most pressing business needs,” said Tom McPherson, GM, Power Systems at IBM. “We are taking advantage of the full IBM stack to deliver hybrid cloud, AI, and automation capabilities while building on our decades-long reputation as a trustworthy hybrid infrastructure for essential workloads.”

In August 2024, IBM disclosed key architectural advancements in its forthcoming IBM Telum II Processor and IBM Spyre Accelerator at the Hot Chips 2024 conference. These innovations are set to enhance the processing capabilities of the next-generation IBM Z mainframe systems, particularly in supporting AI models, including large language models (LLMs) and generative AI. The new processor and accelerator aim to address the growing need for scalable, energy-efficient solutions as enterprises increasingly integrate AI into production environments. The Telum II Processor features significant upgrades, including increased cache, memory capacity, and an integrated AI accelerator core. Complementing this, the IBM Spyre Accelerator, designed to work alongside the Telum II, offers scalable AI compute power, optimizing performance for complex AI models. Both chips are built on Samsung Foundry’s 5nm process, ensuring high performance and power efficiency. IBM’s continued focus on AI and advanced processing aims to provide enterprises with robust tools to manage and leverage AI-driven workloads at scale.

- Telum II Processor:

  - Cores and Frequency: Eight high-performance cores running at 5.5GHz.

  - Cache: 36MB of L2 cache per core, with 360MB of on-chip cache in total, a 40% increase in on-chip cache capacity.

  - Virtual L4 Cache: 2.88GB per processor drawer, a 40% increase over the previous generation.

  - Integrated AI Accelerator Core: Allows for low-latency, high-throughput in-transaction AI inferencing, quadrupling compute capacity per chip.

  - Data Processing Unit (DPU): Accelerates IO protocols for networking and storage, with a 50% increase in IO density.

- IBM Spyre Accelerator:

  - Memory: Supports up to 1TB of memory, scalable across eight cards in an IO drawer.

  - Compute Cores: Each chip features 32 compute cores supporting int4, int8, fp8, and fp16 datatypes.

  - Power Efficiency: Designed to consume no more than 75W per card.

  - AI Model Support: Optimized for low-latency, high-throughput AI applications, designed for complex AI models and generative AI use cases.

- Manufacturing and Availability:

  - Fabrication: Both chips are manufactured by Samsung Foundry on a 5nm process.

  - Launch Timeline: Expected availability in 2025 with the next-generation IBM Z and IBM LinuxONE platforms.
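The Spyre figures above can be combined into rough per-drawer totals. The sketch below is back-of-the-envelope arithmetic only: the class name is hypothetical, and it assumes 1TB means 1024GB, that memory is split evenly across the eight cards, and that each card carries one chip. None of these assumptions is confirmed by the announcement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpyreDrawer:
    """Hypothetical model of one IO drawer populated with Spyre cards."""
    cards: int = 8                # eight cards per IO drawer (from the spec list)
    total_memory_gb: int = 1024   # "up to 1TB", taken here as 1024 GB (assumption)
    max_watts_per_card: int = 75  # stated per-card power ceiling
    cores_per_chip: int = 32      # stated compute cores per chip
    chips_per_card: int = 1       # assumption: one Spyre chip per card

    def memory_per_card_gb(self) -> float:
        # Assumes memory is divided evenly across the cards.
        return self.total_memory_gb / self.cards

    def max_power_watts(self) -> int:
        # Upper bound on accelerator power for a fully populated drawer.
        return self.cards * self.max_watts_per_card

    def total_cores(self) -> int:
        return self.cards * self.chips_per_card * self.cores_per_chip


if __name__ == "__main__":
    drawer = SpyreDrawer()
    print(f"Memory per card: {drawer.memory_per_card_gb():.0f} GB")
    print(f"Max drawer power: {drawer.max_power_watts()} W")
    print(f"Total compute cores: {drawer.total_cores()}")
```

Under these assumptions, a full drawer works out to 128GB per card, a 600W accelerator power ceiling, and 256 compute cores.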