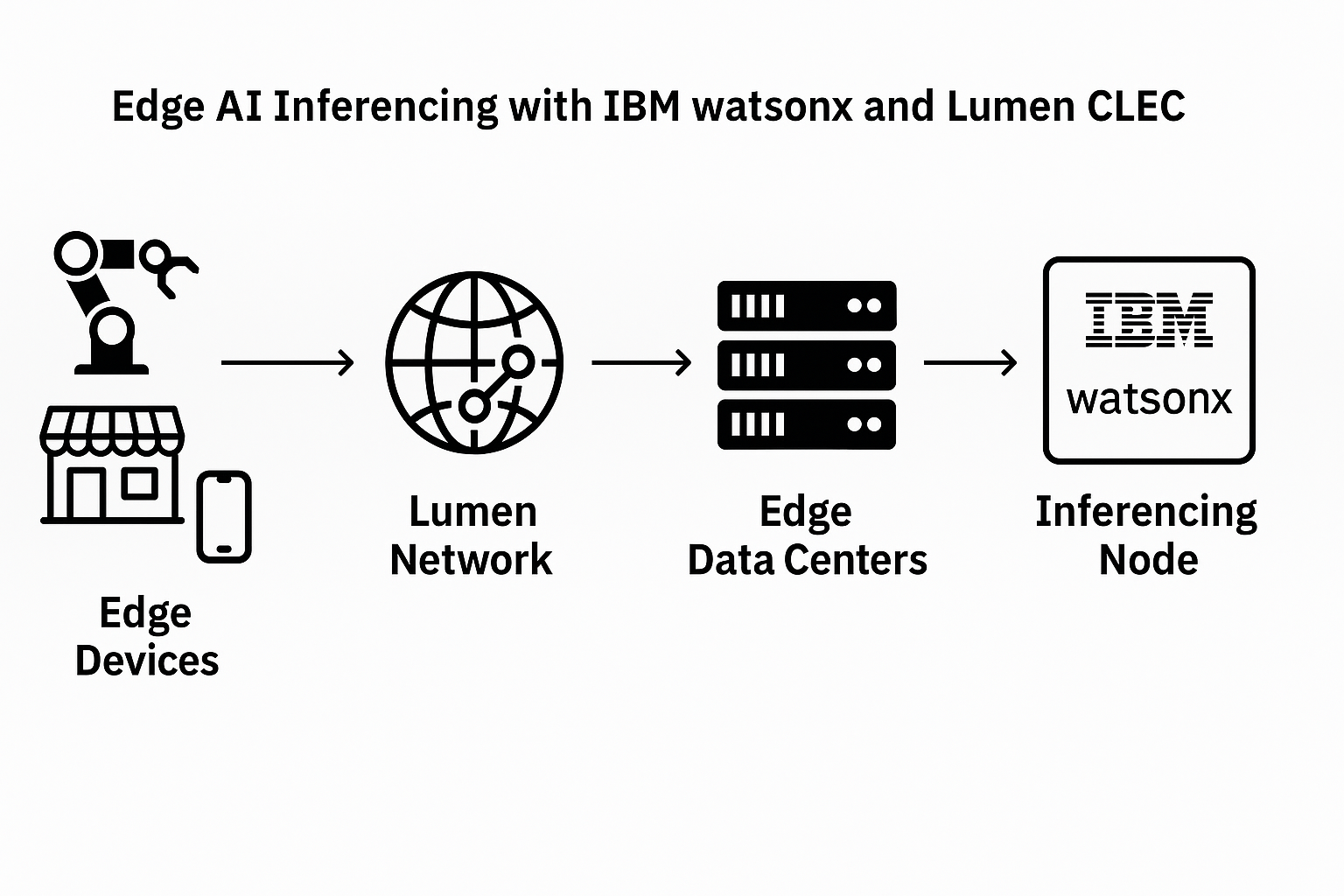

Kioxia has developed a prototype flash memory module delivering 5TB of capacity and 64GB/s of bandwidth, targeting edge AI and post-5G/6G mobile edge computing (MEC) applications. The work stems from Japan’s national Post-5G Infrastructure Enhancement R&D Project, led by NEDO. The prototype addresses the long-standing trade-off between bandwidth and capacity in DRAM-based systems by combining a daisy-chained flash architecture with a new memory controller design.

The module incorporates a 128Gbps PAM4 high-speed transceiver and flash performance-boosting technologies, including low-latency prefetching and advanced signaling techniques. Together, these allow each module to maintain high throughput even as capacity scales, while keeping power consumption below 40 watts. The host interface is based on PCIe 6.0, using eight lanes at 64Gbps each. These specifications position the module as a strong candidate for the high-performance edge servers needed to support generative AI, IoT, and big data analytics at the network edge.
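As a rough check on how these figures relate, the sketch below works through the raw link arithmetic. It assumes the 64Gbps figure is the per-lane signaling rate (PCIe rates are quoted per lane, per direction) and ignores protocol overhead, so it illustrates why an eight-lane PCIe 6.0 link lines up with the 64GB/s headline number rather than predicting delivered throughput. The variable and function names are illustrative only.

```python
# Back-of-envelope link arithmetic. Raw signaling rates only: protocol
# overhead (framing, FEC, CRC) is ignored, so real throughput is lower.

PCIE6_GBPS_PER_LANE = 64   # per-lane rate from the article; PCIe quotes it per direction
PCIE_LANES = 8             # x8 host interface (from the article)
PAM4_LINK_GBPS = 128       # transceiver bit rate (from the article)
PAM4_BITS_PER_SYMBOL = 2   # PAM4 uses four amplitude levels, i.e. 2 bits per symbol


def gbps_to_gbytes_per_s(gbps: float) -> float:
    """Convert gigabits per second to gigabytes per second."""
    return gbps / 8


host_raw_gbytes_per_s = gbps_to_gbytes_per_s(PCIE6_GBPS_PER_LANE * PCIE_LANES)
pam4_gbaud = PAM4_LINK_GBPS / PAM4_BITS_PER_SYMBOL

print(f"PCIe 6.0 x8 raw bandwidth: {host_raw_gbytes_per_s:.0f} GB/s per direction")
print(f"128Gbps PAM4 symbol rate:  {pam4_gbaud:.0f} GBaud")
```

Under these assumptions, the host link's raw rate matches the module's quoted bandwidth, which suggests the 64GB/s figure is bounded by the PCIe 6.0 x8 interface.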

Kioxia’s architectural innovations include serial daisy-chain connections in place of traditional bus topologies, so that capacity can be scaled up without degrading bandwidth. The company also implemented low-power signaling with distortion correction between the controller and the memory chips, achieving 4.0Gbps flash interface performance, and used prefetch enhancements to mitigate flash read latency.

- 5TB capacity and 64GB/s bandwidth flash memory module

- 128Gbps PAM4 transceivers with daisy-chain topology

- Flash interface performance increased to 4.0Gbps

- PCIe 6.0 (8 lanes) used as host interface

- Power consumption under 40W per module

- Target use cases: edge AI, MEC, IoT, generative AI
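Taken together, the headline figures imply a few derived numbers. The sketch below is a simple calculation under stated assumptions: decimal units (1TB = 1,000GB), peak bandwidth sustained for the whole transfer, and 40W treated as an upper bound on power, which makes the per-watt values lower bounds. None of these derived values are reported by Kioxia.

```python
# Derived figures from the headline specs. Assumptions (not from Kioxia):
# decimal units, peak bandwidth sustained, and 40 W as a power ceiling.

CAPACITY_TB = 5           # module capacity (from the article)
BANDWIDTH_GB_PER_S = 64   # module bandwidth (from the article)
MAX_POWER_W = 40          # "under 40W", so this is an upper bound

capacity_gb = CAPACITY_TB * 1000  # decimal terabytes to gigabytes

# Time to stream the entire module once at peak bandwidth.
full_read_seconds = capacity_gb / BANDWIDTH_GB_PER_S

# Power is below 40 W, so these are lower bounds on efficiency.
min_gbytes_per_s_per_watt = BANDWIDTH_GB_PER_S / MAX_POWER_W
min_gb_per_watt = capacity_gb / MAX_POWER_W

print(f"Full-capacity read at peak rate: {full_read_seconds:.1f} s")
print(f"Bandwidth per watt: at least {min_gbytes_per_s_per_watt:.1f} GB/s per W")
print(f"Capacity per watt:  at least {min_gb_per_watt:.0f} GB per W")
```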

“This prototype represents a major advancement in large-capacity, high-bandwidth memory modules designed for edge computing in the post-5G era,” said a spokesperson from Kioxia.

🌐 Analysis: This marks a strategic push by Kioxia into edge AI infrastructure, where memory bandwidth and capacity are increasingly critical. Using flash memory as an alternative to DRAM opens new pathways for low-power, high-density AI systems outside of hyperscale data centers. Kioxia’s work aligns with broader industry efforts to reduce latency and power overhead at the network edge. Competitors such as Samsung, Micron, and SK hynix are pursuing similar strategies, but Kioxia’s early PCIe 6.0-based implementation could give it an advantage in emerging MEC deployments.
