Lambda announced a multibillion-dollar agreement with Microsoft to deploy large-scale AI infrastructure powered by tens of thousands of NVIDIA GPUs, including NVIDIA’s new GB300 NVL72 systems. The deal underscores Microsoft’s ongoing expansion of cloud-based accelerated computing resources to meet surging demand from enterprise and AI assistant workloads.

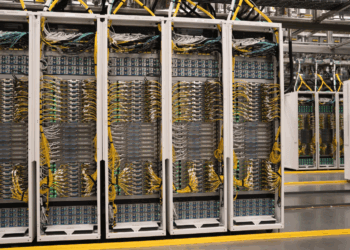

The collaboration extends a long-term partnership between the two companies, now entering its most ambitious phase with deployments spanning multiple hyperscale data centers. Lambda, known for building “gigawatt-scale AI factories,” will provide compute infrastructure designed for both model training and inference at unprecedented scale. The initiative reflects escalating demand for GPU-based supercomputing driven by generative and agentic AI applications.

“It’s great to watch the Microsoft and Lambda teams working together to deploy these massive AI supercomputers,” said Stephen Balaban, CEO of Lambda. “We’ve been working with Microsoft for more than eight years, and this is a phenomenal next step in our relationship.”

• Multibillion-dollar AI infrastructure agreement between Microsoft and Lambda

• Deployment includes NVIDIA GB300 NVL72 systems at hyperscale

• Expands access to accelerated compute for enterprise AI and assistant workloads

• Builds on Lambda’s strategy to deploy gigawatt-scale AI factories across North America

• Lambda founded in 2012; mission to make compute as ubiquitous as electricity

🌐 Analysis:

Founded in 2012 by AI researchers Stephen Balaban and Michael Balaban, Lambda has evolved from an AI workstation provider into a key player in the global race to supply compute infrastructure for large-scale AI model training. The company operates what it calls “AI factories,” massive data centers purpose-built for training and inference workloads, and is among the few independent U.S. players competing with hyperscalers in this domain. Lambda’s business model combines cloud services, on-premises systems, and colocation to give enterprises flexible access to high-performance GPU clusters.

In recent years, Lambda has expanded aggressively with the support of major investors, including Andreessen Horowitz, Crescent Cove, and the U.S. Innovation Fund. The company’s focus on sovereign and domestically operated compute infrastructure has attracted interest from both commercial and government sectors seeking alternatives to global hyperscalers. Lambda’s partnerships with NVIDIA and Dell Technologies further strengthen its hardware supply chain and system integration capabilities.

The Microsoft deal signals Lambda’s entry into a new operational tier, supporting deployments that rival those of hyperscalers like AWS and Google Cloud. It follows Microsoft’s growing reliance on external AI compute partners such as CoreWeave, IREN, and Cerebras to meet unprecedented AI demand. For NVIDIA, the agreement marks another major Blackwell platform rollout, expanding the GB300 NVL72 footprint across North America and reinforcing the industry’s shift toward vertically integrated, high-density AI compute campuses.

🌐 We’re tracking the latest developments in AI infrastructure. Follow our ongoing coverage at: https://convergedigest.com/category/ai-infrastructure/