FuriosaAI’s RNGD accelerator has demonstrated a 2.25x improvement in performance per watt for large language model (LLM) inference compared to GPU-based systems, following rigorous validation with LG AI Research’s EXAONE models. With testing and integration complete, FuriosaAI is now positioned to supply RNGD-powered servers to enterprises using EXAONE in sectors including electronics, finance, telecom, and biotech. Although RNGD is not yet deployed in production, LG AI Research confirmed the strong results and plans broader deployment.

In the tests, a four-card RNGD server delivered 60 tokens/second on the EXAONE 3.5 32B model with a 4K context window and 50 tokens/second with a 32K context window. In power-constrained environments, a rack of RNGD servers can generate 3.75x more tokens than an equivalent GPU rack. LG AI Research conducted the evaluation over two years, benchmarking RNGD on both 7.8B and 32B parameter models, and noted the accelerator’s ability to reduce total cost of ownership while meeting low-latency service needs.

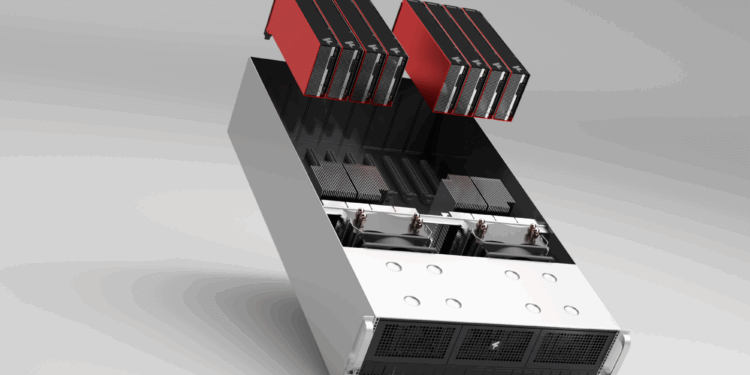

RNGD is built on FuriosaAI’s proprietary Tensor Contraction Processor architecture, delivering up to 512 TFLOPS FP8 performance at 180W TDP. LG AI Research installed RNGD servers at its Koreit Tower data center and scaled from single to eight-card configurations, using optimized tensor parallelism, compiler techniques, and a vLLM-compatible serving framework. The collaboration will now expand with support for EXAONE 4.0, new software features, and plans to extend the EXAONE-powered ChatEXAONE AI agent to external clients.
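To make the performance-per-watt framing concrete, the sketch below divides the reported throughput of the four-card server by the combined TDP of its cards. This is only a back-of-the-envelope illustration: it assumes the cards run at their full 180W TDP and ignores host CPU, memory, cooling, and networking power, so it is not the methodology behind the 2.25x figure. The function name `tokens_per_second_per_watt` is hypothetical.

```python
def tokens_per_second_per_watt(tokens_per_second: float,
                               num_cards: int,
                               card_tdp_watts: float) -> float:
    """Throughput divided by total accelerator TDP.

    Assumption: power is approximated by card TDP only; host and
    facility power are excluded.
    """
    if num_cards <= 0 or card_tdp_watts <= 0:
        raise ValueError("num_cards and card_tdp_watts must be positive")
    return tokens_per_second / (num_cards * card_tdp_watts)


# Figures reported in the article for EXAONE 3.5 32B on a four-card server.
NUM_CARDS = 4
CARD_TDP_WATTS = 180.0

for label, tps in (("4K context", 60.0), ("32K context", 50.0)):
    efficiency = tokens_per_second_per_watt(tps, NUM_CARDS, CARD_TDP_WATTS)
    print(f"{label}: {efficiency:.4f} tokens/s per watt of card TDP")
```

A comparison against a GPU system would need the same measurement for that system under the same model, context length, and batch settings, which the article does not provide.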

- RNGD outperforms GPUs by 2.25x in LLM inference performance per watt

- RNGD rack delivers 3.75x more tokens vs. GPU rack within same power budget

- Server runs EXAONE 3.5 32B at 60 tokens/sec (4K) and 50 tokens/sec (32K)

- Integrated stack includes OpenAI-compatible API, Prometheus metrics, Kubernetes support

- Future roadmap includes support for EXAONE 4.0 and agentic AI applications
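Because the stack exposes an OpenAI-compatible API, a client request could in principle look like any other OpenAI-style chat completion call. The sketch below builds such a request with the Python standard library. The base URL, the model identifier, and the assumption that the server exposes the usual `/v1/chat/completions` path are all illustrative and not confirmed by the article.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       max_tokens: int = 128) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        # Assumption: the server follows the standard OpenAI path layout.
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Hypothetical endpoint and model name, for illustration only.
request = build_chat_request(
    base_url="http://localhost:8000",
    model="exaone-3.5-32b",
    prompt="Summarize the benefits of inference accelerators.",
)
print(request.get_method(), request.full_url)

# To actually send it against a running server:
# with urllib.request.urlopen(request) as response:
#     print(json.load(response)["choices"][0]["message"]["content"])
```

Building the request separately from sending it keeps the example runnable without a live server.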

“After extensively testing a wide range of options, we found RNGD to be a highly effective solution for deploying EXAONE models,” said Kijeong Jeon, Product Unit leader at LG AI Research. “RNGD provides a compelling combination of benefits: excellent real-world performance, a dramatic reduction in our total cost of ownership, and a surprisingly straightforward integration.”

🌐 Why it Matters: As sovereign AI becomes a strategic priority, enterprises are seeking alternatives to GPU-based inference systems. FuriosaAI’s success with LG AI Research shows the viability of custom accelerators in high-performance GenAI applications—offering reduced energy consumption, better economics, and greater control over infrastructure.

- Founded in 2017, FuriosaAI is a South Korea-based semiconductor startup developing AI inference accelerators optimized for performance, efficiency, and scalability. The company is headquartered in Seoul, with R&D and operations in both South Korea and Silicon Valley. FuriosaAI’s mission is to make AI computing sustainable by delivering high-performance, energy-efficient solutions that enable enterprises to build and deploy advanced models at scale. The leadership team includes co-founder and CEO June Paik, formerly an engineer at Samsung Electronics and AMD with a master’s degree from the Georgia Institute of Technology. FuriosaAI’s core technology centers on its proprietary Tensor Contraction Processor (TCP) architecture, which powers its RNGD accelerator. Major milestones include a $72 million Series B funding round in 2021 led by South Korea’s top tech investors, early collaborations with leading hyperscalers and research institutions, and validation with LG AI Research.