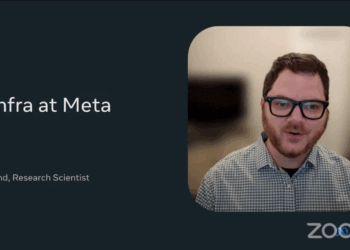

At its NETWORKING @SCALE 2024 event in Santa Clara, California, Meta engineers Shivkumar Chandrashekhar and Lee Hetherington outlined how the company’s global edge infrastructure is evolving to meet the growing demands of AI, the metaverse, and real-time applications. Meta’s edge infrastructure, which operates in over 200 countries and connects with hundreds of ISPs, has been crucial in delivering content and services with an average global latency of less than 40 milliseconds. However, with the shift towards AI and the metaverse, new challenges have emerged, especially regarding the management of dynamic, non-cacheable content and the need for low-latency compute capabilities at the edge.

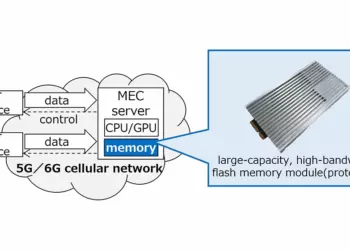

Meta’s edge hierarchy includes a vast range of infrastructure, from data centers to points of presence (PoPs), designed to serve the diverse demands of its 3 billion users. The introduction of AI workloads and real-time, compute-intensive applications like virtual reality (VR), gaming, and conversational AI has required Meta to rethink its infrastructure. As it deploys GPU-powered compute clusters closer to users, Meta is also developing smaller edge deployments, called “Elastic Edge Small,” aimed at underserved regions, offering compute capabilities and low-latency services without requiring a large infrastructure footprint.

Managing this globally distributed edge environment involves solving complex challenges related to workload placement, security, and fleet management. Meta has implemented advanced scheduling systems to dynamically match user requests with appropriate compute clusters, while also addressing security risks from untrusted environments near the public internet. As Meta continues to expand its edge, it is introducing new machine types to support AI inferencing and graphics processing, focusing on real-time applications and future growth in AI use cases.
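To make the idea of matching requests to clusters concrete, here is a minimal Python sketch of constraint-based placement. It is not Meta's scheduler: the `Cluster` and `Request` types, their fields, the `place` function, and the sample cluster names are all hypothetical, chosen only to illustrate filtering by resource availability and workload constraints and then picking the lowest-latency candidate.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Cluster:
    # All fields are illustrative assumptions, not Meta's data model.
    name: str
    latency_ms: float  # estimated latency from the user to this cluster
    free_gpus: int     # GPUs currently available for new work
    trusted: bool      # False for sites in less-trusted environments


@dataclass
class Request:
    gpus_needed: int
    max_latency_ms: float
    requires_trusted: bool


def place(request: Request, clusters: list[Cluster]) -> Optional[Cluster]:
    """Return the lowest-latency cluster meeting every constraint, or None."""
    eligible = [
        c for c in clusters
        if c.free_gpus >= request.gpus_needed
        and c.latency_ms <= request.max_latency_ms
        and (c.trusted or not request.requires_trusted)
    ]
    return min(eligible, key=lambda c: c.latency_ms, default=None)


clusters = [
    Cluster("edge-small-a", latency_ms=12.0, free_gpus=0, trusted=False),
    Cluster("pop-b", latency_ms=25.0, free_gpus=4, trusted=True),
    Cluster("datacenter-c", latency_ms=70.0, free_gpus=64, trusted=True),
]

request = Request(gpus_needed=2, max_latency_ms=40.0, requires_trusted=True)
choice = place(request, clusters)
print(choice.name if choice else "no eligible cluster")  # prints "pop-b"
```

In this toy example the nearest site has no free GPUs and the data center exceeds the latency budget, so the request lands on the mid-tier PoP. A production system would presumably also weigh cost, load balancing, and forecasted demand, none of which is modeled here.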

Key Points:

• Meta’s edge infrastructure operates in 200+ countries, connects with hundreds of ISPs, and maintains an average global latency below 40 milliseconds.

• The shift to AI and the metaverse introduces new challenges, particularly with dynamic, non-cacheable content.

• Edge infrastructure includes data centers, PoPs, and 7,000+ clusters for content delivery, security services, and compute workloads.

• Meta is deploying smaller edge clusters, “Elastic Edge Small,” in underserved regions to bring low-latency compute closer to users.

• AI-generated content and real-time applications like VR and gaming demand new GPU-powered edge compute clusters.

• Meta is evolving its edge to support more diverse workloads, including AI, real-time inferencing, and metaverse applications.

• Scheduling systems optimize user requests by routing them to appropriate clusters based on latency, resource availability, and workload constraints.

• Security challenges arise from hosting first-party and third-party applications at the edge, necessitating strict isolation and authentication measures.

• Fleet management for more than 100,000 GPUs involves dynamic scaling, demand forecasting, and specialized configurations for different workloads.

• Meta’s hardware at the edge has evolved from CDN-focused machines to GPU-heavy clusters designed for AI and real-time processing.

A video replay will be posted on the Networking @Scale site.