At the ITU-T CxO Roundtable on December 9, 2024, NTT proposed a framework for distributed processing between suburban data centers and AI constellations to address the energy and scalability challenges of large language models (LLMs). Senior executives at the meeting, hosted in Dubai by the International Telecommunication Union’s Telecommunication Standardization Sector (ITU-T), endorsed NTT’s proposal and emphasized the importance of developing de jure standards to implement the initiative globally.

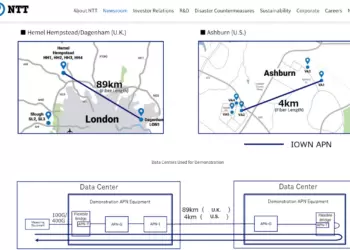

NTT’s approach aims to create a distributed ICT platform that delivers high-speed, low-latency, and energy-efficient operation to meet the growing demands of LLMs and massive AI. The proposal builds on ITU-T standards and ongoing work at the IOWN Global Forum, focusing on technologies such as the All-Photonics Network (APN) and Data-Centric Infrastructure (DCI). Given the broad recognition that standardized frameworks are needed, the initiative is positioned to help mitigate AI’s environmental impact while providing scalable infrastructure for future advances.

“Global collaboration on infrastructure for massive AI is critical,” said Akira Shimada, President of NTT. Following the roundtable’s agreements, NTT will focus on accelerating research and development of ICT infrastructure and contribute to international standards for distributed processing, paving the way for widespread adoption of sustainable AI technologies.

• Meeting: ITU-T CxO Roundtable held in Dubai on December 9, 2024.

• Proposal: Distributed processing between suburban data centers and AI constellations to support large-scale AI and LLMs.

• Standards: Focus on de jure standards under ITU-T and integration with IOWN Global Forum specifications for APN and DCI technologies.

• Objective: Create energy-efficient, high-performance ICT platforms that reduce AI training costs and environmental impact.

• Next Steps: Accelerate R&D on ICT infrastructure and collaborate on international standardization.

“We are committed to developing solutions that address AI’s environmental and scalability challenges through standardized, distributed processing frameworks,” Shimada added.