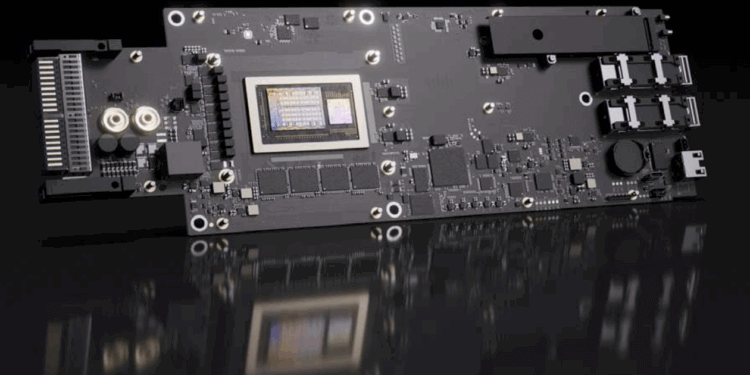

At GTC Washington, D.C., NVIDIA introduced the BlueField-4 Data Processing Unit (DPU), a purpose-built accelerator for gigascale AI factories. The new chip delivers up to 800 Gbps of network throughput and integrates 64 Arm Neoverse V2 cores, a PCIe Gen 6 ×16 host interface, and a 128 GB LPDDR5 memory subsystem. It fuses compute, storage, and security acceleration within a single platform designed to handle trillion-token workloads.

BlueField-4 combines the NVIDIA Grace CPU with ConnectX-9 SuperNIC networking to deliver six times the compute power of BlueField-3, supporting AI factories up to four times larger. It supports both Ethernet and InfiniBand at up to 200 G per SerDes lane, with port configurations of up to eight splits per device. The DPU introduces native service function chaining, programmable data-path acceleration, and real-time AI threat detection, all powered by NVIDIA’s DOCA microservices framework for software-defined infrastructure.

Security is anchored by the Advanced Secure Trusted Resource Architecture (ASTRA), which provides zero-trust tenant isolation, secure boot with a hardware root of trust, encrypted firmware updates, and device attestation via SPDM 1.1. The platform includes built-in cryptographic engines for AES-GCM 128/256 and AES-XTS 256/512, plus IPsec and TLS acceleration for data in motion. BlueField-4 also integrates a 512 GB on-board SSD and a 114 MB L3 cache, and supports GPUDirect RDMA and GPUDirect Storage for low-latency data access.

Key Specifications

- Throughput: Up to 800 Gbps (200 G SerDes per lane; supports Ethernet and InfiniBand)

- Compute: 64 × Arm Neoverse V2 cores; 114 MB shared L3 cache

- Memory: 128 GB LPDDR5 DRAM; 512 GB on-board SSD

- Interface: PCIe Gen 6 ×16 with SocketDirect support

- Acceleration Engines: 16 programmable data-path cores (256 threads)

- Storage: BlueField SNAP elastic block storage with NVMe-oF and NVMe/TCP acceleration

- Security: AES-GCM 128/256 and AES-XTS 256/512 crypto; secure boot; device attestation

- Networking: RDMA / RoCE v2, Spectrum-X Ethernet, in-network computing, MPI accelerations

- Management: Integrated BMC with 1 GbE OOB port; Redfish and MCTP management protocols

- Form Factors: PCIe and Vera Rubin (VR) NVL144
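
As a rough sanity check on how the figures above fit together, the following Python sketch derives a few implied numbers: the SerDes lane count behind the 800 Gbps figure, the threads per data-path core, and how the network rate compares with the host interface. The PCIe per-lane rate is an assumption brought in from the PCIe 6.0 standard (64 GT/s, treated as 64 Gb/s raw per direction) rather than from the announcement, and the result ignores encoding and protocol overhead, so it is an upper bound and not a measured throughput. The function and key names are illustrative only.

```python
# Back-of-the-envelope checks on the published BlueField-4 figures.
# Values taken from the specification list above:
NETWORK_GBPS = 800            # "Up to 800 Gbps"
SERDES_GBPS_PER_LANE = 200    # "200 G SerDes per lane"
DATAPATH_CORES = 16           # programmable data-path cores
DATAPATH_THREADS = 256        # total data-path threads
PCIE_LANES = 16               # "PCIe Gen 6 x16"

# Assumption (not from the article): PCIe 6.0 signals at 64 GT/s per lane,
# treated here as 64 Gb/s raw per direction, before FLIT/FEC overhead.
PCIE_GEN6_GBPS_PER_LANE = 64


def summarize():
    """Return derived figures implied by the published specifications."""
    pcie_raw_gbps = PCIE_GEN6_GBPS_PER_LANE * PCIE_LANES
    return {
        # Number of 200 G lanes needed to reach the 800 Gbps aggregate.
        "serdes_lanes": NETWORK_GBPS // SERDES_GBPS_PER_LANE,
        # Hardware threads per programmable data-path core.
        "threads_per_core": DATAPATH_THREADS // DATAPATH_CORES,
        # Network rate expressed in gigabytes per second.
        "network_gbytes_per_s": NETWORK_GBPS / 8,
        # Raw one-direction PCIe rate in Gb/s and GB/s.
        "pcie_raw_gbps": pcie_raw_gbps,
        "pcie_raw_gbytes_per_s": pcie_raw_gbps / 8,
        # Ratio of raw host-interface rate to network rate.
        "pcie_to_network_ratio": pcie_raw_gbps / NETWORK_GBPS,
    }


if __name__ == "__main__":
    for name, value in summarize().items():
        print(f"{name}: {value}")
```

Under these assumptions, the ×16 host link offers about 1.28 times the raw rate of the 800 Gbps network side in each direction, which suggests the host interface is sized to keep pace with line-rate traffic; real headroom would be lower once protocol overhead is counted.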

Adoption Across the Ecosystem

- Server and Storage Builders: Cisco, Dell, HPE, IBM, Lenovo, Supermicro, VAST Data, WEKA, and DDN plan BlueField-4 integration in next-generation AI storage and compute systems.

- Cybersecurity Partners: Palo Alto Networks, Check Point, F5, Cisco, and Trend Micro are developing real-time AI runtime security and zero-trust protection.

- Cloud and AI Providers: CoreWeave, Crusoe, Lambda, Oracle Cloud Infrastructure, Akamai, Together.ai, and xAI are adopting DOCA to accelerate networking and enhance multi-tenant security.

- Infrastructure Software Vendors: Red Hat, Canonical, SUSE, Nutanix, Mirantis, Rafay, and Spectro Cloud are integrating BlueField-4 into AI-ready clouds.

- Systems Integrators: Accenture, Deloitte, and World Wide Technology are preparing enterprise and government deployments.

- Availability: Early access expected in 2026 as part of NVIDIA Vera Rubin AI systems.

“It’s purpose-built as the end-to-end engine for a new class of AI storage platforms, bringing acceleration to the foundation of AI data pipelines for efficient processing and breakthrough performance at scale,” said Itay Ozery, Senior Director of Product Marketing for Data Center at NVIDIA.

🌐 Analysis: BlueField-4 strengthens NVIDIA’s full-stack approach to AI infrastructure, extending its control from GPUs to DPUs and networking. With 800 Gbps links and Grace CPU integration, NVIDIA is positioning itself at the center of the AI data-center stack, challenging AMD’s Pensando DPUs and Intel’s IPU programs as hyperscalers scale out multi-tenant AI fabrics.