NVIDIA introduced Spectrum-XGS Ethernet, unveiling a “scale-across” architecture designed to interconnect distributed data centers into unified, giga-scale AI super-factories. The new capability expands the Spectrum-X Ethernet platform, adding a third dimension of scaling — beyond scale-up and scale-out — to overcome the physical and power limits of individual facilities. By eliminating bottlenecks associated with standard Ethernet, Spectrum-XGS delivers predictable performance for multi-GPU and multi-node AI clusters that extend across cities, nations, or continents.

Spectrum-XGS features distance-aware congestion control, precision latency management, and end-to-end telemetry, enabling geographically distributed data centers to function as a single AI factory. NVIDIA reports that the system nearly doubles the performance of the NVIDIA Collective Communications Library (NCCL), accelerating GPU-to-GPU communication across long distances. CoreWeave will be among the first adopters, integrating Spectrum-XGS to unify its AI data centers into one supercomputing fabric.
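To see why congestion control has to account for distance, it helps to look at how much data is in flight on a long link. The sketch below is a back-of-the-envelope illustration, not a description of NVIDIA's algorithm: it computes the bandwidth-delay product for a fiber path, assuming light travels through fiber at roughly 200,000 km/s. The distances and the 800 Gbps link speed are assumptions chosen for illustration, and the function names are hypothetical.

```python
# Illustrative only: the distances and link speed below are assumptions,
# not figures published for Spectrum-XGS.
FIBER_KM_PER_SECOND = 200_000  # light in optical fiber, about two-thirds of c


def round_trip_seconds(distance_km: float) -> float:
    """Propagation-only round-trip time over a fiber path of this length."""
    return 2 * distance_km / FIBER_KM_PER_SECOND


def bytes_in_flight(distance_km: float, link_gbps: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to keep the link full."""
    bits = link_gbps * 1e9 * round_trip_seconds(distance_km)
    return bits / 8


if __name__ == "__main__":
    scenarios = [("same campus", 1), ("cross-city", 100), ("cross-continent", 4000)]
    for label, km in scenarios:
        rtt_ms = round_trip_seconds(km) * 1e3
        megabytes = bytes_in_flight(km, link_gbps=800) / 1e6
        print(f"{label:>16}: RTT {rtt_ms:7.3f} ms, {megabytes:8.1f} MB in flight")
```

Under these assumptions the data in flight grows linearly with distance, so a sender that reacts to congestion signals one round trip late has far more traffic already committed on a cross-continent path than inside a single building.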

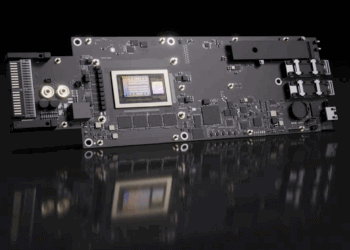

The Spectrum-X platform delivers 1.6x higher bandwidth density than standard Ethernet, powered by Spectrum-X switches and ConnectX-8 SuperNICs. This announcement builds on NVIDIA’s string of networking innovations, including Spectrum-X and Quantum-X silicon photonics switches, all aimed at enabling AI factories with millions of GPUs while reducing energy and operating costs.

Hot Chips 2025, set for August 24–26 at Stanford University, will feature a wide range of innovations from across the semiconductor and systems industry. NVIDIA will use the forum to highlight how its networking, GPU, and software platforms converge to enable AI inference at every scale. Company executives and engineers plan to present advances in rack-scale connectivity, distributed networking, and GPU-accelerated inference across five sessions and tutorials.

- NVIDIA ConnectX-8 SuperNICs for high-speed, low-latency, multi-GPU communication at rack and data-center scale (Idan Burstein)

- Neural rendering and inference performance leaps with the Blackwell architecture, including the GeForce RTX 5090 GPU and DLSS 4 (Marc Blackstein)

- Co-packaged optics (CPO) switches and Spectrum-XGS Ethernet for unifying distributed data centers into giga-scale factories (Gilad Shainer)

- The NVIDIA GB10 Superchip powering the compact DGX Spark desktop AI supercomputer (Andi Skende)

NVIDIA’s networking stack is presented as the “nervous system” of AI factories. NVLink and NVLink Switch deliver scale-up GPU connectivity within and across servers. Spectrum-X Ethernet provides the scale-out fabric that connects entire clusters within a data center, while Spectrum-XGS enables scale-across connections between multiple facilities. CPO switches bring integrated silicon photonics to the networking layer, improving efficiency and bandwidth for gigawatt-scale AI infrastructure.

The company will also highlight the GB200 NVL72 rack-scale system, which integrates 36 GB200 Superchips, each combining two B200 GPUs and a Grace CPU, for a total of 72 GPUs interconnected by NVLink Switch with 130 TB/s of GPU communication bandwidth. At the desktop tier, DGX Spark, powered by the GB10 Superchip, delivers Blackwell-class inference performance in a compact form factor and supports NVFP4 precision to optimize LLM inference.
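The rack-level numbers can be sanity-checked with simple arithmetic. The sketch below assumes the aggregate figure is 130 terabytes per second, which is the value NVIDIA publishes for GB200 NVL72, and divides it evenly across the GPUs; the constant and function names are hypothetical and the even split is a simplification.

```python
# Figures taken from the article, with the aggregate bandwidth read as
# terabytes per second (NVIDIA's published unit for GB200 NVL72).
SUPERCHIPS_PER_RACK = 36
GPUS_PER_SUPERCHIP = 2
AGGREGATE_NVLINK_TB_PER_S = 130


def gpus_per_rack() -> int:
    """Total GPUs in one rack: superchips times GPUs per superchip."""
    return SUPERCHIPS_PER_RACK * GPUS_PER_SUPERCHIP


def per_gpu_tb_per_s() -> float:
    """Aggregate NVLink bandwidth divided evenly across every GPU in the rack."""
    return AGGREGATE_NVLINK_TB_PER_S / gpus_per_rack()


if __name__ == "__main__":
    print(f"GPUs per rack: {gpus_per_rack()}")
    print(f"NVLink bandwidth per GPU: {per_gpu_tb_per_s():.2f} TB/s")
```

The result, about 1.8 TB/s per GPU, explains the "72" in the NVL72 name and shows how the headline rack figure is built up from per-GPU links.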

CUDA remains the unifying layer across NVIDIA’s ecosystem. With hundreds of millions of GPUs deployed globally, CUDA supports model deployment from cloud AI factories to workstations. NVIDIA also accelerates open-source frameworks including PyTorch, vLLM, and SGLang, alongside its own libraries such as TensorRT-LLM, CUTLASS, and the NVIDIA Collective Communications Library (NCCL). NVIDIA NIM microservices extend this reach further by offering flexible deployment of popular models like gpt-oss and Llama 4.

- Spectrum-XGS Ethernet adds “scale-across” to NVIDIA’s AI infrastructure strategy

- Delivers predictable performance across distributed data centers with advanced congestion and latency controls

- CoreWeave to be among the first adopters, unifying its AI facilities into a single supercomputer

- At Hot Chips 2025, NVIDIA to detail Blackwell GPUs, ConnectX-8 SuperNICs, CPO switches, and DGX Spark systems

- CUDA ecosystem integrates with open frameworks and accelerates inference across hundreds of millions of GPUs

“The AI industrial revolution is here, and giant-scale AI factories are the essential infrastructure,” said Jensen Huang, founder and CEO of NVIDIA. “With NVIDIA Spectrum-XGS Ethernet, we add scale-across to scale-up and scale-out capabilities to link data centers across cities, nations and continents into vast, giga-scale AI super-factories.”

🌐 Analysis: NVIDIA is using Spectrum-XGS to position Ethernet as the backbone of distributed AI infrastructure, complementing its NVLink and Quantum-X interconnects. The strategy reflects a shift toward multi-site architectures as single-campus data centers hit power and land constraints. Competitors including Broadcom, Cisco, and Marvell are also investing heavily in advanced optics and AI fabrics, but NVIDIA’s integration of networking with GPUs and CUDA may give it an advantage in offering a tightly coupled platform. CoreWeave’s deployment signals how GPU cloud providers are eager to extend scale beyond single sites, while NVIDIA’s Hot Chips presentations highlight its intent to dominate AI infrastructure from the rack to the continent.

NVIDIA’s networking heritage is rooted in InfiniBand, which it gained through the 2020 Mellanox acquisition. The company continues to invest heavily in its Quantum InfiniBand line, now in its fourth generation (Quantum-2) with roadmaps pointing toward Quantum-X and beyond. These switches deliver up to 400 Gbps per port today, with plans for 800 Gbps-class throughput, combined with advanced congestion control, adaptive routing, and in-network computing acceleration for AI training workloads. InfiniBand remains the interconnect of choice for the most latency-sensitive supercomputers and exascale projects, while Spectrum-XGS Ethernet is designed for hyperscale cloud AI deployments where cost, ecosystem compatibility, and geographic distribution drive adoption. By supporting both InfiniBand and Ethernet fabrics, NVIDIA maintains flexibility across HPC, AI research, and commercial cloud environments.

Broadcom, meanwhile, is pushing its own vision with SUE (Scale-Up Ethernet), announced earlier this year. SUE targets similar pain points in AI networking by optimizing Ethernet for ultra-low latency and deterministic performance at scale. Broadcom’s approach leverages its dominance in switching silicon and optics, aiming to preserve Ethernet’s economics while narrowing the performance gap with InfiniBand. The competitive dynamic between NVIDIA’s Spectrum-XGS and Broadcom’s SUE will shape how hyperscalers architect distributed AI clusters in the coming years.


NVIDIA Debuts Spectrum-X and Quantum-X Photonics Switches

NVIDIA unveiled its next-generation silicon photonics switches, Spectrum-X Photonics Ethernet and Quantum-X Photonics InfiniBand, designed to scale AI factories to millions of GPUs while cutting energy consumption and improving performance. The new co-packaged optics (CPO) switches mark a major leap forward for AI data center networks, offering 3.5x better power efficiency, 10x higher resiliency, and faster deployment compared to traditional network architectures. These photonic switches co-package optical components with the switch silicon, reducing the number of lasers required and delivering significant space and power savings.

The Spectrum-X Photonics platform provides Ethernet-based switching with configurations of up to 400 Tbps of total bandwidth across 2,048 ports, while the Quantum-X Photonics platform brings liquid-cooled InfiniBand switching with 144 ports of 800 Gbps to support high-performance AI compute fabrics. The switches leverage microring resonator modulator (MRM) technology and are manufactured using TSMC’s advanced 3D COUPE process. NVIDIA’s solution is optimized for next-generation AI factories — ultra-large-scale data centers supporting generative AI, large language models, and other compute-intensive workloads — as organizations push to build multi-tenant AI supercomputers with enhanced energy efficiency.
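The port counts and totals above can be cross-checked with a few lines of arithmetic. The sketch below uses only the figures quoted in this article, plus one labeled assumption: that the 2,048-port Spectrum-X configuration runs at 200 Gbps per port, which is inferred from the quoted total rather than stated here. The helper names are hypothetical.

```python
def aggregate_tbps(ports: int, gbps_per_port: float) -> float:
    """Total switching bandwidth in Tbps for a given port count and speed."""
    return ports * gbps_per_port / 1000


def implied_gbps_per_port(total_tbps: float, ports: int) -> float:
    """Per-port speed in Gbps implied by a quoted total and port count."""
    return total_tbps * 1000 / ports


if __name__ == "__main__":
    # Quantum-X Photonics: 144 ports of 800 Gbps (figures from the article).
    print(f"Quantum-X total: {aggregate_tbps(144, 800):.1f} Tbps")

    # Spectrum-X Photonics: 400 Tbps across 2,048 ports (figures from the article).
    print(f"Spectrum-X implied port speed: {implied_gbps_per_port(400, 2048):.1f} Gbps")

    # Assumption: 200 Gbps per port, which would round to the quoted 400 Tbps.
    print(f"Spectrum-X at 200 Gbps per port: {aggregate_tbps(2048, 200):.1f} Tbps")
```

The implied per-port speed comes out just under 200 Gbps, which suggests the 400 Tbps headline figure is a rounded total for a 200 Gbps-per-port configuration rather than an exact product.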

Backed by ecosystem partners including TSMC, Coherent, Corning, Foxconn, Lumentum, and SENKO, the new switches integrate fiber directly into the switch package, eliminating traditional transceivers to reduce complexity and footprint. The Spectrum-X Photonics Ethernet switch operates at 1.6 Tbps per port and scales to 512 ports, while the Quantum-X Photonics InfiniBand switch offers liquid-cooled photonics with similar speeds. According to Jensen Huang, NVIDIA’s CEO, these innovations could save up to 60 megawatts of power per AI data center, equivalent to the power drawn by 100 Rubin Ultra racks, providing a significant operational and sustainability advantage.

The photonic switches will integrate with NVIDIA’s broader AI networking stack, including ConnectX-8 SuperNICs, which link Blackwell Ultra GPUs at 800 Gbps, and BlueField-3 DPUs for secure, accelerated multi-tenant environments. Building on Spectrum-X’s success with AI supercomputers such as Colossus, NVIDIA plans to use this technology to scale future AI factories to millions of GPUs.