SAN JOSE — October 13, 2025 — At the OCP Global Summit, Ian Buck, NVIDIA’s Vice President of Hyperscale and HPC, presented a roadmap for the giga-scale era of AI, emphasizing that the company’s focus now spans far beyond GPUs to encompass full data-center systems — including racks, interconnects, cooling, and power. He described next-generation data centers as “AI factories” — dynamic, self-optimizing assets that become smarter and more efficient over time.

Buck highlighted NVIDIA’s deep collaboration with the Open Compute Project (OCP) as key to this transformation. He cited major performance gains from recent deployments, including a 5× boost in gpt-oss inference that cut token costs from 11 ¢ to 2 ¢, to show how end-to-end optimization across chips, racks, and networks translates directly into efficiency and profitability.
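The quoted cost figures can be sanity-checked with simple arithmetic. The sketch below uses only the two prices and the 5× speedup reported above; the variable and function names are illustrative, the token quantity the prices refer to is not stated in this summary, and the "implied cost" line assumes hardware cost per unit time stays constant.

```python
# Figures quoted in the summary (cents per an unspecified quantity of tokens).
cost_before_cents = 11.0
cost_after_cents = 2.0
quoted_speedup = 5.0  # the reported "5x boost" in inference performance


def reduction_factor(before: float, after: float) -> float:
    """Return how many times cheaper `after` is compared with `before`."""
    if after <= 0:
        raise ValueError("after must be positive")
    return before / after


factor = reduction_factor(cost_before_cents, cost_after_cents)

# Assumption: with constant hardware cost per hour, cost per token
# scales inversely with throughput.
implied_cost_cents = cost_before_cents / quoted_speedup

print(f"cost reduction factor: {factor:.1f}x")
print(f"cost implied by a {quoted_speedup:.0f}x speedup: {implied_cost_cents:.1f} cents")
```

The quoted prices work out to a 5.5× reduction, and a 5× speedup alone would imply about 2.2 ¢, so the rounded figures are consistent with one another.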

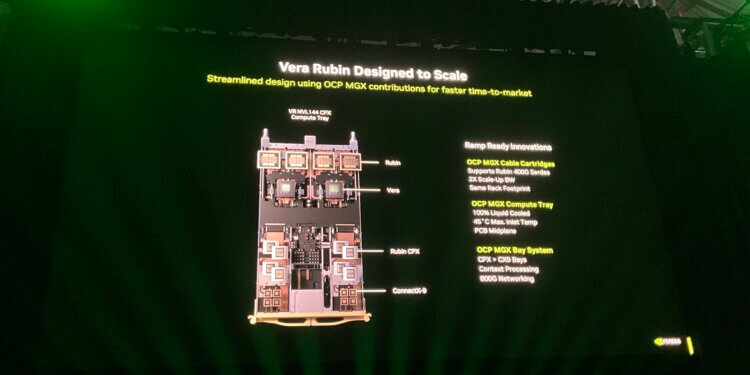

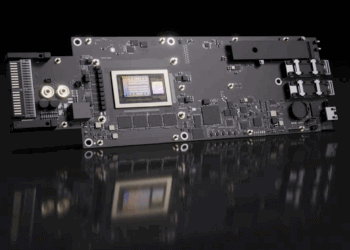

Looking ahead, NVIDIA will introduce its Vera Rubin architecture in 2026, pairing a new CPX context processor with next-generation GPUs to deliver up to 8× inference throughput and 400 G-scale networking. Rubin will integrate into OCP’s MGX rack platform, featuring liquid cooling, 500 A bus bars, and improved modular power delivery. Buck also previewed Kyber (2027) — scaling to 576 GPUs per rack and adopting 800 V DC power distribution for even higher energy efficiency.
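One reason higher-voltage distribution tends to improve efficiency is that, for a fixed power draw, current falls in proportion to voltage, and resistive loss falls with the square of the current. The sketch below illustrates that relationship. Only the 800 V figure comes from the talk; the 54 V baseline, the 1 MW rack load, and the 0.1 mΩ path resistance are hypothetical values chosen purely for illustration.

```python
def current_amps(power_watts: float, voltage_volts: float) -> float:
    """Current drawn by a DC load: I = P / V."""
    return power_watts / voltage_volts


def resistive_loss_watts(power_watts: float, voltage_volts: float,
                         resistance_ohms: float) -> float:
    """Conduction loss in the distribution path: P_loss = I^2 * R."""
    current = current_amps(power_watts, voltage_volts)
    return current * current * resistance_ohms


RACK_POWER_W = 1_000_000.0   # hypothetical rack load, not from the talk
BASELINE_V = 54.0            # hypothetical low-voltage DC baseline
HVDC_V = 800.0               # voltage cited for Kyber in the summary
PATH_RESISTANCE_OHM = 1e-4   # hypothetical conductor resistance

loss_baseline = resistive_loss_watts(RACK_POWER_W, BASELINE_V, PATH_RESISTANCE_OHM)
loss_hvdc = resistive_loss_watts(RACK_POWER_W, HVDC_V, PATH_RESISTANCE_OHM)

# For equal power and resistance, the loss ratio depends only on the voltages.
loss_ratio = loss_baseline / loss_hvdc

print(f"current at {BASELINE_V:.0f} V: {current_amps(RACK_POWER_W, BASELINE_V):.0f} A")
print(f"current at {HVDC_V:.0f} V: {current_amps(RACK_POWER_W, HVDC_V):.0f} A")
print(f"resistive loss ratio (baseline / 800 V): {loss_ratio:.1f}")
```

Because the ratio reduces to (800 / 54)², it does not depend on the assumed load or resistance; only the choice of baseline voltage matters.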

Buck announced new networking and ecosystem initiatives under Spectrum-X, including a new OCP-contributed switch (Minipack 3N) and Spectrum-XGS, an inter-data-center fabric for training across geographically distributed AI clusters. Through NVLink Fusion, NVIDIA is opening its NVLink fabric to third-party CPUs and accelerators, with Intel, Fujitsu, Astera Labs, Samsung, Marvell, MediaTek, and Alchip among the early partners, broadening OCP’s open ecosystem for heterogeneous AI infrastructure.

Key Announcements and Highlights

- Giga-Scale Era: NVIDIA defines the new phase of AI infrastructure as multi-gigawatt, multi-campus systems co-designed across compute, network, power, and cooling.

- Performance Gains: B200 data centers deliver 5× faster gpt-oss inference at roughly one-fifth the cost; GB200 NVL72 achieves 15× gains on DeepSeek models.

- Vera Rubin (2026): Dual-processor architecture (CPX + GPU) for 8× inference throughput; 400 G networking; 260 TB/s GPU bandwidth; OCP MGX compatible.

- CPX Context Processor: Optimized for million-token context windows in content creation and code generation applications.

- MGX Rack Enhancements: 45 °C air inlet support; liquid cooling; 500 A bus bars; modular power domains; automatic transfer switching.

- NVLink Fusion: Chiplet IP interconnect enabling non-NVIDIA CPUs and accelerators to join NVLink fabrics; partners include Intel and Fujitsu (Monaka).

- Custom Silicon Ecosystem: Collaborations with Astera Labs, Samsung, Marvell, MediaTek, and Alchip for accelerator integration.

- Spectrum-X Networking: New OCP switch (Minipack 3N) built on FBOSS; AI-optimized Ethernet with ~95 % effective bandwidth.

- Spectrum-XGS Fabric: Connects multiple AI data centers for training at million-GPU scale.

- Real-World Deployments: Microsoft’s Fairwater and Oracle’s Stargate supercomputers built on Spectrum-X and OCP open designs.

- Kyber (2027): Scales to 576 GPUs per rack; introduces 800 V DC power infrastructure.

- Energy Efficiency Focus: Rack designs reduce cooling demand without new chillers; higher-voltage power improves PUE for AI campuses.

- OCP Ecosystem Wall: Showcases MGX modules, NVLink Fusion links, and Spectrum-X switches for hands-on demonstration at the Summit.

“We’re now building AI infrastructure that doesn’t just compute — it learns, adapts, and appreciates in value,” said Ian Buck. “The OCP community is essential to turning these engineering marvels into open, scalable AI factories for the world.”