The Open Compute Project (OCP) Foundation has released an open letter urging hyperscalers, data center operators, and technology providers to collaborate on a unified framework for AI data center infrastructure. Spearheaded by Google, Meta, and Microsoft, the letter highlights that the ongoing AI boom—contributing between $355 billion and $727 billion annually to U.S. GDP—has outpaced existing data center design and deployment models. It calls for a coordinated effort to enable faster, more flexible buildouts of GPU-heavy AI facilities through common interfaces and shared specifications.

The OCP initiative outlines four foundational areas for immediate industry focus: power, cooling, mechanical design, and telemetry. With AI racks expected to exceed 500 kW, the group advocates standardized interfaces for high-voltage DC power systems and, over the longer term, the adoption of solid-state transformers (SSTs). In cooling, it calls for standardized screening and commissioning processes for coolant distribution units (CDUs), with the aim of arriving at a common design baseline. Mechanically, the group seeks shared standards for rack dimensions, aisle widths, and floor loading to accommodate dense AI systems. Finally, OCP is pushing for a unified telemetry protocol to securely monitor and control complex, disaggregated infrastructure components.

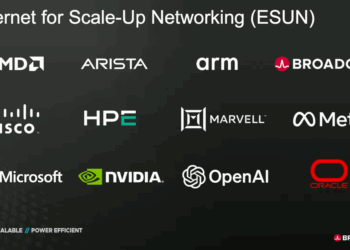

More than a dozen signatories—including AMD, NVIDIA, Intel, Arista, CoreWeave, Oracle, and Vantage Data Centers—have endorsed the initiative. OCP plans to use its collaborative framework to organize cross-industry workstreams that can reduce deployment friction and enable interoperability between facilities and equipment vendors. “We have a choice: continue with fragmentation or come together to build a more open and innovative future,” the letter states.

🌐 Analysis: The OCP’s call reflects a growing recognition that physical data center infrastructure now represents a critical bottleneck in global AI compute expansion. With GPU systems exceeding traditional power and cooling limits, aligning on facility standards could determine how quickly hyperscalers can scale future AI clusters. The move also positions OCP as a coordination hub alongside groups like the Ultra Ethernet Consortium and TIA’s new Data Center Quality initiative, signaling convergence across compute, power, and cooling domains ahead of mass-scale AI data center construction.