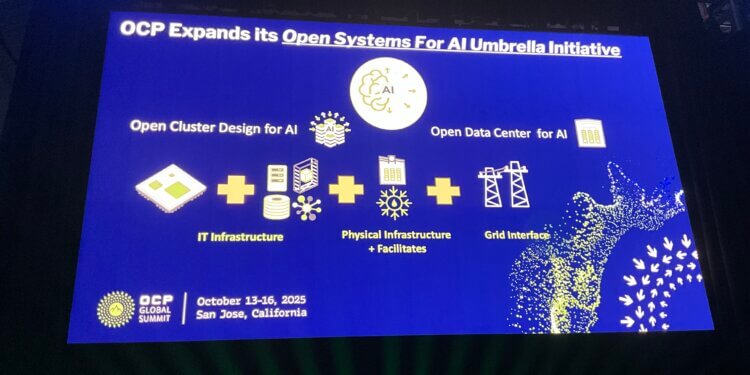

The Open Compute Project Foundation (OCP) unveiled its new Open Data Center for AI Strategic Initiative, a major expansion of its Open Systems for AI program, to tackle the physical infrastructure challenges of large-scale AI deployments. Announced ahead of the OCP Global Summit 2025, the initiative focuses on power, cooling, mechanical systems, and management telemetry to support the surge of high-density AI clusters. It is backed by Google, Meta, and Microsoft, which co-authored a call-to-action letter urging the industry to collaborate on open data center standards.

The program’s goal is to create standardized facility-level frameworks that enable flexible deployment of advanced AI systems, so that data centers can accommodate diverse AI workloads without site-specific customization. OCP leaders say current siloed approaches to power and cooling design slow down AI rollouts and increase costs. The initiative builds on several ongoing OCP projects, including Diablo 400 (a 400 VDC power-rack “sidecar” specification by Google, Meta, and Microsoft), Deschutes (a 2 MW liquid-cooling coolant distribution unit, or CDU, specification from Google), and Clemente (a Meta-developed 1RU compute tray for NVIDIA GB300 host processor modules, or HPMs).

“The OCP Community’s vision of the open data center ecosystem continues to enable solving the challenges of building at-scale AI clusters,” said George Tchaparian, CEO of the OCP Foundation. “Our role in fostering open, standardized, sustainable, and scalable infrastructure is increasingly vital to managing AI’s explosive growth while improving time-to-market and sustainability.”

• New Open Data Center for AI initiative extends OCP’s Open Systems for AI umbrella

• Backed by Google, Meta, and Microsoft to align power, cooling, and telemetry standards

• Builds on key OCP projects: Diablo 400 power rack, Deschutes CDU, and Clemente compute tray

• Aims to enable fungible, high-density AI infrastructure for hyperscalers, neoclouds, and colocation providers

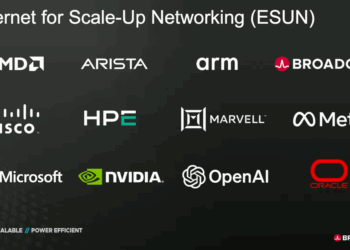

• Includes contributions from AMD, Arm, and NVIDIA through OCP technical workstreams

“Creating agile, fungible data centers that can adapt to rapid innovation requires a modular, standards-based approach,” said Partha Ranganathan, Google Fellow and Vice President. “OCP’s new initiative provides the essential collaborative venue to define these specifications.”

🌐 Analysis: OCP’s Open Data Center for AI initiative marks a pivotal shift toward standardizing the facility layer of AI infrastructure, an area historically dominated by proprietary designs. By bringing together hyperscalers, chipmakers, and colocation providers, OCP aims to accelerate the buildout of sustainable, interoperable AI campuses. This effort complements recent OCP contributions such as ESUN (Ethernet for Scale-Up Networking) and the Open Chiplet Economy, which together form a comprehensive open framework spanning silicon-to-site architecture for the AI era.