At the 2025 Open Compute Project (OCP) Global Summit, Broadcom’s Ram Velaga, Senior Vice President and General Manager of the Core Switching Group, made a compelling case for Ethernet as the foundation of hyperscale AI infrastructure. He highlighted the scale of the current data center boom—26 GW of capacity announced in just four weeks—translating to roughly 15 million XPUs, a mix of GPUs, TPUs, and custom accelerators. Velaga emphasized that the exponential growth of AI workloads makes networking the central element of distributed computing: “The network is the computer.”
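As a rough sanity check on those two figures, the sketch below divides the announced capacity by the XPU count to get an implied power budget per accelerator. The function name is hypothetical, and reading the result as an all-in facility budget (cooling, networking, and host systems included, rather than chip power alone) is an assumption, not something stated in the keynote.

```python
# Figures quoted in the keynote summary.
ANNOUNCED_CAPACITY_W = 26e9   # 26 GW of announced data center capacity
XPU_COUNT = 15e6              # roughly 15 million XPUs


def watts_per_xpu(capacity_w: float, xpus: float) -> float:
    """Implied facility-level power budget per XPU, in watts."""
    return capacity_w / xpus


budget_w = watts_per_xpu(ANNOUNCED_CAPACITY_W, XPU_COUNT)
print(f"Implied budget: {budget_w / 1000:.2f} kW per XPU")
```

Under these numbers the budget works out to a little over 1.7 kW per XPU.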

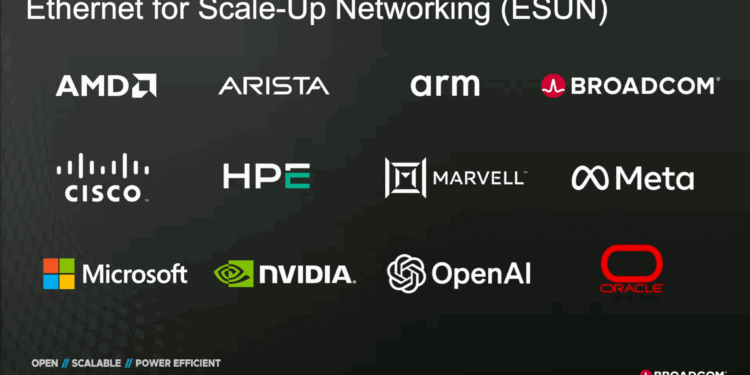

Velaga reaffirmed that Ethernet remains the only open, economical, and scalable interconnect technology capable of handling the three fundamental AI infrastructure domains: scale-up (intra-rack XPU-to-XPU), scale-out (inter-rack cluster), and scale-across (data-center-to-data-center). He introduced the Ethernet for Scale-Up Networking (ESUN) initiative, launched through OCP in collaboration with AMD, Arm, Arista, Cisco, HPE, Marvell, Meta, Microsoft, NVIDIA, OpenAI, and Oracle. ESUN defines a clean separation between XPU design and the Ethernet layer, allowing each vendor to innovate independently while ensuring full interoperability through existing, standards-based Ethernet protocols. A new “stripped-down” SONiC variant is also being developed to optimize software for low-latency, Ethernet-based scale-up environments.

Velaga outlined several technical milestones supporting Ethernet’s evolution for AI workloads. Broadcom continues to double Ethernet switch bandwidth every 18–24 months, with 100 Tbps class switches now enabling flatter topologies for 128K-GPU clusters, cutting latency, optics count, and cost. He cited new advances in co-packaged optics (CPO)—now in its third generation and proven more reliable than pluggables—and announced Thor Ultra, Broadcom’s first 800 G NIC, compliant with the Ultra Ethernet Consortium (UEC) RDMA extensions. The NIC supports multiflow adaptive routing, selective retries, and open interoperability with any switch or XPU, underscoring Ethernet’s non-proprietary advantage.
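The link between switch bandwidth and flatter topologies can be illustrated with standard fat-tree arithmetic. The sketch below is not taken from the keynote: it assumes a non-blocking folded-Clos (fat-tree) built from identical switches, one 200 Gb/s port per GPU, and switch capacities of 51.2 Tbps and 102.4 Tbps as stand-ins for the previous and the "100 Tbps class" generations. All function names are hypothetical.

```python
def switch_radix(capacity_gbps: int, port_gbps: int) -> int:
    """Port count when the switch capacity is split evenly across ports."""
    return capacity_gbps // port_gbps


def fat_tree_hosts(radix: int, tiers: int) -> int:
    """Max hosts in a non-blocking fat-tree of identical radix-k switches.

    Each added tier multiplies capacity by k/2, because half of every
    switch's ports face down and half face up: hosts = 2 * (k/2)**tiers.
    """
    if radix < 4 or radix % 2 != 0:
        raise ValueError("radix must be an even number >= 4")
    if tiers < 1:
        raise ValueError("tiers must be >= 1")
    return 2 * (radix // 2) ** tiers


def min_tiers(radix: int, hosts: int) -> int:
    """Smallest number of switch tiers that can attach `hosts` endpoints."""
    tiers = 1
    while fat_tree_hosts(radix, tiers) < hosts:
        tiers += 1
    return tiers


TARGET_GPUS = 128 * 1024   # the 128K-GPU cluster size cited above
PORT_GBPS = 200            # assumed per-GPU port speed

for capacity_gbps in (51_200, 102_400):   # assumed switch capacities
    radix = switch_radix(capacity_gbps, PORT_GBPS)
    tiers = min_tiers(radix, TARGET_GPUS)
    print(f"{capacity_gbps / 1000:.1f} Tbps -> radix {radix}, {tiers} tiers")
```

With these assumed inputs, a 256-port switch needs three tiers to reach 128K endpoints, while a 512-port switch reaches exactly 131,072 in two. Removing a tier removes a layer of switches and the optical links that feed it, which is consistent with the latency, optics, and cost reductions described above.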

- Data center capacity announced in just four weeks totals 26 GW, or roughly 15 million XPUs.

- ESUN workstream at OCP defines Ethernet-based scale-up standards for heterogeneous XPUs.

- Ethernet can achieve <400 ns switch-to-XPU latency and supports 100 Tbps switch fabric scaling.

- Broadcom has entered the third generation of co-packaged optics; reliability exceeds pluggables.

- New Thor Ultra NIC is the first fully open 800 G Ultra-Ethernet-compliant RDMA adapter.

“Ethernet is the only technology that can cover all the use cases for AI—from scale-up, to scale-out, to scale-across data centers,” Velaga concluded. “It’s the most open, efficient, and reliable networking technology out there.”

🌐 Analysis: Velaga’s remarks align with a broader industry pivot toward Ethernet-based AI fabrics. The OCP ESUN initiative positions Ethernet as a unifying transport for both intra-rack and inter-data-center AI clusters, countering proprietary interconnect approaches such as NVIDIA’s NVLink and InfiniBand. Broadcom’s Thor Ultra NIC and its third-generation co-packaged optics demonstrate that Ethernet is keeping pace with AI bandwidth and latency requirements while maintaining openness and interoperability across vendors, a principle OCP has championed since its founding.
