Oracle unveiled its next-generation Oracle Cloud Infrastructure (OCI) Zettascale10 at Oracle AI World in Las Vegas, calling it the largest AI supercomputer in the cloud. Designed for massive-scale AI workloads, OCI Zettascale10 connects hundreds of thousands of NVIDIA GPUs across multiple data centers to deliver up to 16 zettaFLOPS of peak performance. The system underpins Stargate, Oracle’s flagship supercluster with OpenAI in Abilene, Texas, and is built on Oracle’s new Acceleron RoCE networking architecture to enable ultra-low GPU-to-GPU latency and high efficiency.

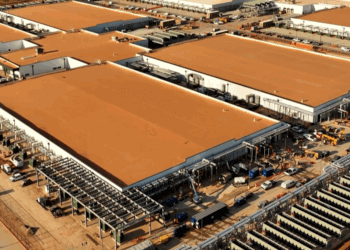

Oracle said Zettascale10 represents a tenfold increase in performance over the previous Zettascale generation introduced in 2024. Each deployment will sit on a multi-gigawatt AI campus, with its data centers packed within a two-kilometer (1.2-mile) radius to maximize density and keep GPU-to-GPU latency low. The company plans to support up to 800,000 NVIDIA GPUs per cluster, enabling large-scale training and inference workloads with predictable performance and reduced energy use. The Acceleron RoCE architecture uses built-in GPU NIC switching and multiple isolated network planes to enhance scalability, reliability, and power efficiency.
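For a sense of scale, the quoted figures can be combined in a quick back-of-the-envelope check. The sketch below assumes that the 16 zettaFLOPS peak and the 800,000-GPU maximum describe the same configuration, which Oracle has not stated explicitly, and it uses a typical refractive index for silica fiber (about 1.47) that does not come from the announcement. The function names are illustrative only.

```python
# Figures quoted in the article.
PEAK_FLOPS = 16e21        # "up to 16 zettaFLOPS of peak performance"
MAX_GPUS = 800_000        # "up to 800,000 NVIDIA GPUs per cluster"
CAMPUS_RADIUS_KM = 2.0    # "two-kilometer (1.2-mile) radius"

# Assumptions, not from the article: light in silica fiber travels at
# roughly c / 1.47, and the longest path is a straight run across the campus.
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
FIBER_REFRACTIVE_INDEX = 1.47


def implied_flops_per_gpu(peak_flops: float, gpus: int) -> float:
    """Peak FLOPS per GPU if the whole peak is spread evenly over all GPUs."""
    return peak_flops / gpus


def fiber_delay_us(distance_km: float) -> float:
    """One-way propagation delay through fiber, in microseconds."""
    seconds = distance_km * FIBER_REFRACTIVE_INDEX / SPEED_OF_LIGHT_KM_PER_S
    return seconds * 1e6


per_gpu = implied_flops_per_gpu(PEAK_FLOPS, MAX_GPUS)
across_campus_us = fiber_delay_us(2 * CAMPUS_RADIUS_KM)

print(f"Implied peak per GPU: {per_gpu / 1e15:.0f} petaFLOPS")
print(f"One-way fiber delay across the campus: {across_campus_us:.1f} microseconds")
```

Under these assumptions the peak works out to about 20 petaFLOPS per GPU, which suggests the headline number refers to low-precision peak throughput, and propagation delay alone across a four-kilometer span is on the order of 20 microseconds before any switching latency is added.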

According to Oracle, Acceleron RoCE networking delivers consistent GPU-to-GPU latency and faster cluster deployment through a wide, shallow fabric design. The use of Linear Pluggable Optics (LPO) and Linear Receiver Optics (LRO) also reduces cooling and power costs while sustaining 400G/800G throughput. OCI Zettascale10 will be available for customers in the second half of 2026.

“With OCI Zettascale10, we’re fusing OCI’s groundbreaking Oracle Acceleron RoCE network architecture with next-generation NVIDIA AI infrastructure to deliver multi-gigawatt AI capacity at unmatched scale,” said Mahesh Thiagarajan, Executive Vice President, Oracle Cloud Infrastructure.

🌐 Analysis: Oracle’s Zettascale10 announcement underscores the company’s ambition to compete head-on in hyperscale AI infrastructure, matching initiatives such as Microsoft’s Fairwater and Meta’s Nscale projects. The partnership with OpenAI at the Stargate site anchors Oracle’s push into multi-gigawatt AI facilities, while the Acceleron RoCE architecture positions OCI as a contender in next-gen Ethernet-based GPU networking alongside NVIDIA’s NVLink and UALink consortium efforts.

Oracle Acceleron RoCE Networking Architecture is the custom high-performance interconnect fabric that underpins Oracle Cloud Infrastructure’s new Zettascale10 AI clusters. Acceleron is based on RoCEv2 (RDMA over Converged Ethernet, version 2), which allows GPUs and CPUs to exchange data directly over Ethernet without CPU intervention, bypassing the operating system and TCP/IP stack to reduce latency and overhead. Unlike conventional three-tier leaf-spine network designs, Acceleron leverages the switching capabilities inside the GPU network interface card (NIC). Each NIC connects simultaneously to multiple network planes, effectively turning every GPU node into a small part of the switching fabric. This “wide, shallow” topology reduces the number of external switches and tiers.
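The trade-off behind a wide, shallow fabric can be illustrated with standard folded-Clos (fat-tree) arithmetic. This is a generic sketch, not Oracle's published design: the 51.2 Tb/s switch capacity, 800 Gb/s NIC bandwidth, and eight planes are hypothetical values chosen for illustration, and the model does not capture the NIC-embedded switching that Acceleron is said to use. It only shows why splitting each NIC across several lower-speed planes raises the effective switch radix, so fewer tiers can reach a comparable number of endpoints.

```python
def clos_endpoints(radix: int, tiers: int) -> int:
    """Maximum endpoints of a non-blocking folded-Clos fabric.

    Uses the standard result 2 * (radix / 2) ** tiers, which gives
    radix**2 / 2 for two tiers and radix**3 / 4 for three tiers.
    """
    if radix < 2 or radix % 2 != 0 or tiers < 1:
        raise ValueError("radix must be even and >= 2, tiers must be >= 1")
    return 2 * (radix // 2) ** tiers


def radix_at_speed(switch_gbps: int, port_gbps: int) -> int:
    """Number of ports a switch exposes when run at the given port speed."""
    return switch_gbps // port_gbps


# Hypothetical values, not taken from the announcement.
SWITCH_GBPS = 51_200   # aggregate switch capacity
NIC_GBPS = 800         # total NIC bandwidth per GPU
PLANES = 8             # isolated network planes per NIC

# One plane at full NIC speed: radix 64, three tiers.
single_plane = clos_endpoints(radix_at_speed(SWITCH_GBPS, NIC_GBPS), tiers=3)

# Eight planes at 100 Gb/s each: radix 512, only two tiers per plane.
multi_plane = clos_endpoints(
    radix_at_speed(SWITCH_GBPS, NIC_GBPS // PLANES), tiers=2
)

print(f"Single plane, 3 tiers: {single_plane:,} endpoints")
print(f"{PLANES} planes, 2 tiers each: {multi_plane:,} endpoints per plane")
```

With these illustrative numbers, the two-tier multi-plane layout reaches more endpoints than the three-tier single-plane layout while removing a switching tier from every path, which is the general effect the “wide, shallow” description points to.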

Acceleron supports Linear Pluggable Optics (LPO) and Linear Receiver Optics (LRO)—simpler, lower-power optical interfaces that avoid digital retiming.

- These optics cut network and cooling power requirements while still sustaining 400G/800G bandwidth per link.

- As a result, more of the facility’s multi-gigawatt power budget can be allocated to compute rather than networking.
