At the Photonic Enabled Cloud Computing (PECC) Summit in Silicon Valley, Ram Huggahalli, Principal Hardware Engineer at Microsoft, outlined how AI infrastructure growth is driving a fundamental rethink of optical design. Speaking about Microsoft’s new AI-optimized data centers, he described an infrastructure so vast that the fiber deployed could “wrap around the Earth four and a half times.”

“These facilities represent a milestone in physical scale, compute capability, and network reach,” he said. “And yet, this is only the beginning—we haven’t even introduced optics into scale-up yet.”

From Scale-Out to Scale-Up

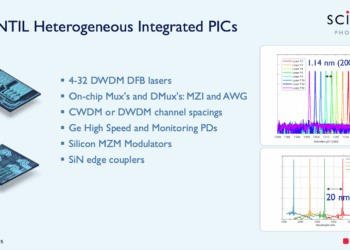

Most optical deployments today serve scale-out interconnects that connect racks, data halls, and even entire data centers. But Huggahalli emphasized that the next challenge lies in scale-up connectivity: the short-reach, high-density domain that links GPUs, CPUs, and accelerators inside AI clusters.

“Being able to scale beyond copper-limited parts is now a fundamental capability,” he said. “Workloads can feel the difference, and it’s becoming a competitive differentiator.”

He described an emerging distinction between “scale-out optics,” focused on reach and resiliency, and “scale-up optics,” which must deliver bandwidth density, low energy per bit, and extremely low bit error rates.
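To make the three scale-up metrics concrete, the following is a minimal sketch of how they relate to one another for a single link. It is not drawn from the talk: the `LinkProfile` class, the example values, and the choice to express bandwidth density per millimeter of die edge are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkProfile:
    """Hypothetical description of one interconnect (illustrative only)."""
    name: str
    bit_rate_bps: float       # aggregate data rate, bits per second
    energy_pj_per_bit: float  # energy per bit, picojoules
    ber: float                # bit error rate, errors per transmitted bit
    edge_mm: float            # assumed die-edge length used by the interface

    def power_watts(self) -> float:
        # Power = bit rate x energy per bit (1 pJ = 1e-12 J).
        return self.bit_rate_bps * self.energy_pj_per_bit * 1e-12

    def errors_per_second(self) -> float:
        # Expected raw bit errors per second before any error correction.
        return self.bit_rate_bps * self.ber

    def bandwidth_density_gbps_per_mm(self) -> float:
        # Bandwidth per unit of die edge, one common way to express density.
        return self.bit_rate_bps / 1e9 / self.edge_mm


# Made-up numbers, chosen only to show the arithmetic.
example = LinkProfile(
    name="hypothetical-scale-up-link",
    bit_rate_bps=1.6e12,
    energy_pj_per_bit=5.0,
    ber=1e-12,
    edge_mm=4.0,
)

if __name__ == "__main__":
    print(f"{example.name}: {example.power_watts():.2f} W, "
          f"{example.errors_per_second():.2f} errors/s, "
          f"{example.bandwidth_density_gbps_per_mm():.0f} Gb/s per mm")
```

With these assumed values, the link draws about 8 W, sees roughly 1.6 raw bit errors per second, and delivers 400 Gb/s per millimeter of edge. The point of the sketch is that at multi-terabit rates, small changes in energy per bit or BER translate directly into watts and error events, which is why these metrics matter so much for scale-up links.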

The Shift to AI-Scale Optics

According to Huggahalli, the industry’s mindset is shifting from trying to make optics better than pluggables to making them comprehensively better than copper.

“There’s always going to be an overhead with any active component—cost, reliability, complexity,” he said. “But the goal is to offset those overheads with superior bandwidth density, lower energy per bit, and lower BER. That’s what makes AI-scale optics distinct.”

He noted that progress toward this “top-left quadrant”—high performance with high efficiency—will likely come in stages. Current 224G linear optics and 448G SerDes designs represent stepping stones, solving immediate reach problems and enabling multi-rack clusters. But long-term, AI-scale optics must move toward even lower energy, wider interfaces, and potentially more lanes or fibers.

“The transition won’t happen overnight,” he added. “But it’s important to keep that reference in mind—we need to get comprehensively better than copper.”

Complexity Inside the AI Platform

Huggahalli also addressed the growing architectural complexity within AI accelerator platforms. Between GPUs, CPUs, and memory systems, there are now multiple layers of interconnects—some copper, some retimed, some optical.

“AI platforms today are simply too complex,” he said. “There’s so much connectivity and so many different technologies coming together. We wish we could take advantage of photonics to simplify the platform, not make it more complicated.”

He described how connections now range from direct copper links between adjacent accelerators, to backplane and retimer-based connections, to short-reach optics spanning racks. The question, he said, is how and when to converge these technologies.

“The first opportunity is to converge within the rack,” he suggested. “Once you go outside the server tray, you can apply scale-up optics. But that still leaves a lot of copper. The real question is: can we converge further? And what are the requirements that drive that convergence?”

Co-Packaged Optics and Convergence

Introducing co-packaged optics (CPO) adds another level of design complexity. Integrating multiple optical engines onto a single accelerator SoC will require standardization and careful co-design to avoid fragmentation.

“Imagine a CPO with multiple optical ingredients inside the same accelerator,” he said. “That’s the level of complexity we’re dealing with. It’s a big engineering challenge, but solving it is essential for simplifying future AI platforms.”

Toward Operational Efficiency and Standards Alignment

Huggahalli emphasized that the industry’s focus is shifting from technology invention to operational optimization. “We’ve reached a point where it’s not about more technology—it’s about how to deploy efficiently,” he said. “We have enough on the table to start thinking about optimization and operational efficiency as the next phase.”

He also highlighted the importance of cross-industry collaboration in setting common definitions for emerging optical domains, pointing to recent work at the Optical Internetworking Forum (OIF). “I’m super excited that OIF has taken the step forward of bringing some of the requirements together in a white paper,” he said. The first draft of the white paper brings together the key metrics and design distinctions for AI scale-up versus scale-out optics, covering reach, bandwidth density, energy per bit, and bit error rate (BER).

“The OIF work is an important milestone,” Huggahalli noted, “because it formalizes the language and performance baselines we need to design interoperable scale-up optics for AI platforms.”

Key Takeaways

- Microsoft’s new AI-optimized data centers mark a leap in physical and optical scale, with enough deployed fiber to wrap around the Earth four and a half times.

- Scale-up optics—short-reach, high-density links—will be essential to go beyond copper limitations.

- The next phase of AI optics aims to be comprehensively better than copper in bandwidth density, energy per bit, and BER.

- AI accelerator platforms are growing increasingly complex, mixing copper, retimed, and optical interconnects.

- Co-packaged optics (CPO) and design convergence are critical frontiers but add new engineering challenges.

- The industry is shifting from invention to optimization—deploying existing optical technologies with greater operational efficiency.
