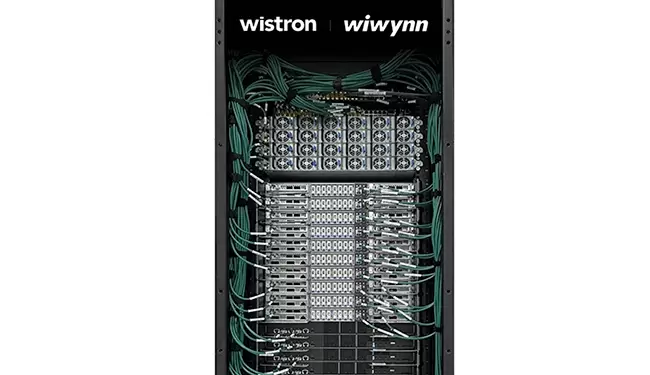

Wiwynn, in collaboration with Wistron, showcased a suite of next-generation AI server systems and thermal management technologies at Computex 2025 in Taipei. Among the highlights was the NVIDIA GB300 NVL72 rack-scale platform, which integrates 72 NVIDIA Blackwell Ultra GPUs and delivers a 50X improvement in inference performance over previous-generation Hopper systems. The system features full direct liquid cooling (DLC) and uses 800Gb/s NVIDIA ConnectX-8 SuperNICs, signaling a major leap forward in AI factory throughput.

Alongside the GB300 NVL72, Wiwynn introduced NVIDIA HGX B300 systems offering up to 7X the AI compute performance of Hopper, with 2.3TB of HBM3e memory. The company also debuted AI servers powered by AMD Instinct™ MI350 GPUs, built on the new CDNA 4 architecture and achieving up to 35X better inference performance. These platforms incorporate AMD’s Pollara 400 NICs with 400Gbps RDMA Ethernet, underscoring Wiwynn’s multi-vendor strategy for AI acceleration.

To manage the extreme thermal demands of high-density AI compute, Wiwynn unveiled double-sided cold plates and 3.5kW ECAM-based cold plates developed with Fabric8Labs, along with a 200kW AALC Sidecar co-developed with Shinwa Controls for retrofitting legacy data centers. Also on display were in-rack coolant distribution units (CDUs) from nVent and new networking gear, including NVIDIA Spectrum-X Ethernet, Broadcom’s Tomahawk 5 switch with Near-Packaged Optics (NPO), and a 102T switch platform delivering 1.6Tbps on each of its 64 ports (64 × 1.6Tbps = 102.4Tbps). Wiwynn also highlighted its NVIDIA RTX PRO 6000 servers for enterprise-grade AI applications.

- Wiwynn and Wistron showcased the NVIDIA GB300 NVL72 rack with 72 Blackwell Ultra GPUs and full DLC.

- HGX B300 systems offer up to 7X the AI compute performance of Hopper, and AMD Instinct MI350-based systems offer up to 35X better inference performance.

- Cooling innovations include double-sided and ECAM cold plates, a 200kW AALC Sidecar, and in-rack CDUs for high-power AI systems.

- Networking platforms feature NVIDIA Spectrum-X, Broadcom Tomahawk 5 NPO switches, and 102T switches with 64 ports at 1.6Tbps each.

- Wiwynn also displayed RTX PRO 6000 servers for broad enterprise AI workload acceleration.