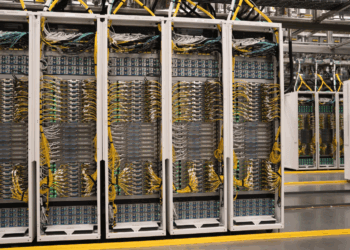

Microsoft previewed two new custom chips at its Ignite conference, expanding its in-house silicon portfolio to enhance Azure’s efficiency, security, and performance. The new additions, the Azure Boost DPU and the Azure Integrated HSM, are designed to accelerate storage workloads and bolster cryptographic security, respectively. These chips complement Microsoft’s existing silicon lineup, including the Cobalt SoC and the Maia AI Accelerator, signaling the company’s commitment to optimizing its cloud infrastructure.

The Azure Boost DPU focuses on data-centric workloads by accelerating storage, networking, and other compute-heavy operations. Microsoft claims that future Azure servers equipped with this chip will run cloud storage workloads at four times the performance while using three times less power than existing servers. While no specific availability timeline was disclosed, deployments are anticipated to begin within the next year, in line with similar past rollouts.
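To put the two headline figures together, here is a minimal sketch of what they would imply for performance per watt. It assumes "3x less power" means one-third of the baseline power draw, which is one reading of Microsoft's phrasing rather than a published specification. The function names are illustrative and not taken from Microsoft.

```python
def perf_per_watt_gain(speedup: float, power_reduction: float) -> float:
    """Relative performance per watt versus the baseline server.

    speedup: throughput multiple (4.0 for "4x performance").
    power_reduction: factor by which power drops (3.0 for "3x less power",
        ASSUMED here to mean one-third of baseline power).
    """
    # Performance rises by `speedup` while power falls to 1/power_reduction,
    # so performance per watt improves by the product of the two factors.
    return speedup * power_reduction


def energy_per_unit_work(speedup: float, power_reduction: float) -> float:
    """Energy needed for a fixed amount of work, relative to baseline."""
    # Energy = power x time; power scales by 1/power_reduction, time by 1/speedup.
    return 1.0 / (speedup * power_reduction)


if __name__ == "__main__":
    gain = perf_per_watt_gain(4.0, 3.0)
    energy = energy_per_unit_work(4.0, 3.0)
    print(f"Performance per watt: {gain:.0f}x baseline")
    print(f"Energy per unit of storage work: {energy:.1%} of baseline")
```

Under that assumption, the claimed figures work out to roughly a twelvefold gain in performance per watt for cloud storage workloads. These are projected vendor numbers, not independent measurements.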

The Azure Integrated HSM addresses the growing demand for secure cloud environments. This hardware security module (HSM) is designed to meet FIPS 140-3 Level 3 standards, providing a robust layer of cryptographic security for tasks like encryption and key management. The chip includes specialized hardware accelerators for high-performance cryptographic operations and is slated for deployment in all new Azure servers starting next year.

- Azure Boost DPU

  - Purpose: Storage and data-centric workload acceleration. The Azure Boost DPU is a co-designed hardware and software system with a lightweight data-flow operating system, which Microsoft says delivers better performance, power efficiency, and platform agility than traditional architectures. Microsoft expects it to run cloud storage workloads at four times the performance and with three times less power than existing CPU-based servers. Its custom application layer incorporates data compression, protection, and cryptography engines to improve security and reliability.

  - Performance: 4x faster, 3x more power-efficient for cloud storage workloads.

  - Timeline: Expected deployment within the next year.

- Azure Integrated HSM

  - Purpose: Cryptographic security and key management.

  - Features: Designed to meet FIPS 140-3 Level 3; includes hardware cryptographic accelerators.

  - Timeline: Deployment in all new Azure servers starting in 2025.

- Existing Chips

  - Cobalt SoC: Arm-based, optimized for general-purpose workloads.

  - Maia AI Accelerator: Supports large language model training and inference, including OpenAI systems.

“These new chips demonstrate Microsoft’s dedication to building a cloud infrastructure tailored for efficiency, security, and scalability,” the company noted during the event.

Microsoft’s DPU silicon strategy builds on its acquisition of Fungible, a data center-focused DPU company, in January 2023. The deal brought Fungible’s expertise in data-centric computing, including high-performance storage and networking acceleration, into Microsoft’s portfolio.

In addition, Microsoft highlighted:

- data center builds using timber instead of steel to reduce the carbon footprint

- plans to deploy over 15,000 km of hollow core fiber over the next 24 months

- Azure ND GB200 v6 virtual machines, integrating the NVIDIA Blackwell platform with Quantum InfiniBand networking for rack-scale AI supercomputing performance, available in preview.

- Azure HBv5 VMs, powered by custom AMD EPYC processors with 7 TB/s of memory bandwidth and promising up to eight times faster performance for HPC workloads than alternatives, with previews starting in 2025.

- Azure Local, a hybrid infrastructure offering powered by Azure Arc, enabling organizations to meet latency, data processing, and compliance requirements with enhanced security and flexible configurations, including GPU-enabled servers for AI inferencing.

- Azure Arc, which now supports over 39,000 customers and extends Azure services across multicloud, hybrid, and edge environments.

New product announcements included the general availability of Windows Server 2025, featuring advanced security and a hotpatching subscription option, and the preview of SQL Server 2025, an AI-ready database platform with Azure Arc integration for secure, scalable data management and simplified AI application development. These announcements underscore Azure’s focus on adaptive, AI-optimized infrastructure that is secure and efficient.