NVIDIA introduced its EGX edge computing platform for performing low-latency AI on continuous streaming data at 5G base stations and other edge locations.

NVIDIA said EGX was created to perform instantaneous, high-throughput AI where data is created, with guaranteed response times, while reducing the amount of data that must be sent to the cloud.

“Enterprises demand more powerful computing at the edge to process their oceans of raw data — streaming in from countless interactions with customers and facilities — to make rapid, AI-enhanced decisions that can drive their business,” said Bob Pette, vice president and general manager of Enterprise and Edge Computing at NVIDIA. “A scalable platform like NVIDIA EGX allows them to easily deploy systems to meet their needs on premises, in the cloud or both.”

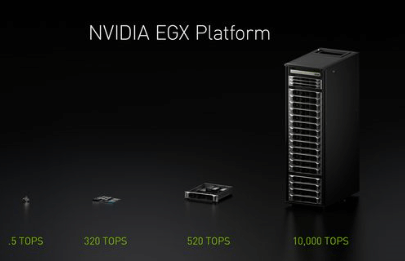

EGX begins with the tiny NVIDIA Jetson Nano, which delivers one-half trillion operations per second (TOPS) of processing in only a few watts, and scales all the way to a full rack of NVIDIA T4 servers, delivering more than 10,000 TOPS.
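To put that range in perspective, the short calculation below compares the two quoted figures. It is only a back-of-the-envelope sketch: the constant and function names are illustrative, and the rack figure is treated as a lower bound because the article says "more than 10,000 TOPS".

```python
# Figures quoted in the article. The rack value is a lower bound
# ("more than 10,000 TOPS"), so the result is a minimum ratio.
JETSON_NANO_TOPS = 0.5
T4_RACK_TOPS_MIN = 10_000


def scale_factor(small_tops: float, large_tops: float) -> float:
    """Return how many times larger the second throughput is than the first."""
    return large_tops / small_tops


ratio = scale_factor(JETSON_NANO_TOPS, T4_RACK_TOPS_MIN)
print(f"A full T4 rack delivers at least {ratio:,.0f}x the Jetson Nano's throughput")
```

By these figures, the platform spans at least a 20,000-fold difference in throughput between its smallest and largest configurations.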

EGX servers are available from global enterprise computing providers ATOS, Cisco, Dell EMC, Fujitsu, Hewlett Packard Enterprise, Inspur and Lenovo. They are also available from major server and IoT system makers Abaco, Acer, ADLINK, Advantech, ASRock Rack, ASUS, AverMedia, Cloudian, Connect Tech, Curtiss-Wright, GIGABYTE, Leetop, MiiVii, Musashi Seimitsu, QCT, Sugon, Supermicro, Tyan, WiBase and Wiwynn.

NVIDIA EGX servers are tuned for NVIDIA Edge Stack and NGC-Ready validated for CUDA-accelerated containers.

NVIDIA has partnered with Red Hat to integrate and optimize NVIDIA Edge Stack with OpenShift. NVIDIA Edge Stack is optimized software that includes NVIDIA drivers, a CUDA Kubernetes plugin, a CUDA container runtime, CUDA-X libraries and containerized AI frameworks and applications, including TensorRT, TensorRT Inference Server and DeepStream.

NVIDIA is also promoting “On-Prem AI Cloud-in-a-Box”, which combines the full range of NVIDIA AI computing technologies with Red Hat OpenShift and NVIDIA Edge Stack together with Mellanox and Cisco security, networking and storage technologies.

“Mellanox Smart NICs and switches provide the ideal I/O connectivity for data access that scale from the edge to hyperscale data centers,” said Michael Kagan, chief technology officer at Mellanox Technologies. “The combination of high-performance, low-latency and accelerated networking provides a new infrastructure tier of computing that is critical to efficiently access and supply the data needed to fuel the next generation of advanced AI solutions on edge platforms such as NVIDIA EGX.”

“Cisco is excited to collaborate with NVIDIA to provide edge-to-core full stack solutions for our customers, leveraging Cisco’s EGX-enabled platforms with Cisco compute, fabric, storage, and management software and our leading Ethernet and IP-based networking technologies,” said Kaustubh Das, vice president of Cisco Computing Systems.